Estimating

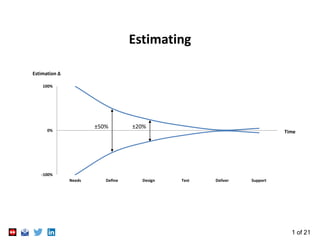

- 1. Estimating (title figure: estimation Δ, from −100% to +100%, plotted against time across the lifecycle phases Needs, Define, Design, Test, Deliver and Support, with ±50% and ±20% marked)

- 2. What is Estimating?
  - Oxford English Dictionary: "Roughly calculate or judge the value, number, quantity, or extent of."
  - Wikipedia: "The process of finding an estimate, a value that is used because it is derived from the best information available, even if input data is incomplete, uncertain, or unstable."
  - The process of combining the results of experience, metrics and measurements to arrive at an approximate judgement of time, cost or effort.

- 3. Good Estimating is Critical to Success
  - Good estimates enable:
    - Sound business decision making: is the project worth doing?
    - Sound project planning: when will the project deliver? What will it cost?
    - Design optimisation: is this the optimum design and delivery strategy?
    - Performance monitoring
    - Cash flow management
  - 20% into a project lifecycle, 80% of the total cost is already committed, so decisions based on early estimates are critical
  - (Figure: committed costs and actual costs, from 0% to 100% of cost, plotted against time across Define, Design, Test and Deliver)

- 4. Estimating Best Practice
  - Association for Project Management: Planning, Scheduling, Monitoring & Control [2015]
  - Project Management Institute: Practice Standard for Project Estimating [2010]
  - UK Government Ministry of Defence: Acquisition System Guidance: Forecasting & Estimating
  - NASA: Cost Estimating Handbook, v4.0 [2008]

- 5. Estimating Methods
  - Transparent box (reasoning visible):
    - Analogical: Group Technologies, Knowledge Based, Case Based Reasoning
    - Detailed: Product Attributes: Function Costing, Feature Costing
    - Detailed: Accumulation of Parts: Generative, Activity Based
  - Black box (reasoning hidden):
    - Statistical: Parametric, Neural Network
    - Expert Judgement
  - Sources: References 1 and 2

- 6. Estimating Methods II: Transparent Box, Analogical
  - Group Technologies: compares the work to one or more similar but complete projects, or parts thereof. Advantages: quick, intuitive. Limitations: case bias, large case history required, poor for innovations.
  - Knowledge Based: attempts to imitate the reasoning of experts to estimate. Advantages: visible logic, structured. Limitations: knowledge obsolescence, large database required.
  - Case Based Reasoning: an estimate based on similar situations from a library of previous cases. Advantages: quick, collective memory. Limitations: large reliable case base required, poor for innovations.

- 7. Estimating Methods III: Transparent Box, Detailed
  - Detailed: Product Attributes
    - Function Costing: a product or system is estimated directly from a specification of its performance. Advantages: integrates requirements with costs. Limitations: cost must be allocated to function; accuracy.
    - Feature Costing: integrates CAD/CAM with cost information, via feature-based modelling, to estimate cost early in the design process. Advantages: integrates with CAD/CAM; can be automated. Limitations: no consensus on features; large database required.
  - Detailed: Accumulation of Parts
    - Generative (Analytical Cost Estimation): estimating by aggregating the processes involved to create the product. Advantages: can be accurate; the detail is useful for negotiation. Limitations: time consuming; detailed data may not be available.
    - Activity Based Costing (ABC): as Generative, but overheads are allocated where they are incurred. Advantages: allocates costs to their sources; strong indication of profitability. Limitations: time consuming; detailed data may not be available; allocation of overheads.
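
Activity Based Costing charges each product with overhead at a rate per unit of the activity that drives it. The sketch below is illustrative only: the cost pools, driver volumes, product figures and the `abc_cost` helper are all hypothetical, not taken from the slides.

```python
def abc_cost(direct_cost, driver_usage, cost_pools):
    """Activity Based Costing sketch: direct cost plus overheads allocated
    at each activity's rate (pool cost / total driver volume)."""
    overhead = 0.0
    for activity, used in driver_usage.items():
        pool_cost, pool_volume = cost_pools[activity]
        rate = pool_cost / pool_volume  # overhead cost per unit of the driver
        overhead += rate * used
    return direct_cost + overhead


# Hypothetical overhead pools: activity -> (annual cost in £, total driver volume)
cost_pools = {
    "machine_setups": (12_000, 60),       # 60 setups per year
    "quality_inspections": (8_000, 400),  # 400 inspections per year
}

# Hypothetical product: £5,000 direct cost, 10 setups and 50 inspections
product_cost = abc_cost(
    5_000, {"machine_setups": 10, "quality_inspections": 50}, cost_pools
)
print(f"Product cost: £{product_cost:,.0f}")
```

A product that triggers many setups picks up more overhead than a flat percentage uplift would give it, which is the "allocates costs to their sources" advantage; the same mechanism shows the limitation, since every pool and driver volume has to be known in detail.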

- 8. Estimating Methods IV: Black Box
  - Expert Judgement: a domain expert uses previous similar experience. Advantages: quick, flexible. Limitations: bias, unstructured, poor repeatability.
    - Delphi: several experts' independent estimates are rationalised to a single result
    - Mini-Delphi: two experts independently estimate and then agree a single result
  - Statistical: Parametric: uses Cost Estimating Relationships (CERs) and algorithms or logic. CERs are extracted from historical data for similar systems and define correlations between cost drivers and other system parameters such as size or performance, as defined in the Parametric Cost Estimating Handbook. Advantages: clear influences, objective, repeatable. Limitations: large database required to define CERs; simplistic; missing parameters.
  - Statistical: Neural Network: learns the impact of attributes on cost by automatic analysis of historical data. Advantages: accurate, updateable. Limitations: hidden logic, complex, large database required.
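
A CER can be as simple as a curve fitted to past projects. As an illustration only, this sketch assumes a power-law form, cost = a × size^b (a frequently used CER shape, but not one given in the slides), and fits it by least squares on the logarithms of hypothetical historical data; `fit_power_cer` and `predict_cost` are hypothetical names.

```python
import math


def fit_power_cer(sizes, costs):
    """Fit cost = a * size**b by ordinary least squares on
    log(cost) = log(a) + b * log(size)."""
    if len(sizes) != len(costs) or len(sizes) < 2:
        raise ValueError("need at least two (size, cost) pairs")
    xs = [math.log(s) for s in sizes]
    ys = [math.log(c) for c in costs]
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("sizes must not all be equal")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    b = sxy / sxx                       # slope in log space = exponent
    a = math.exp(mean_y - b * mean_x)   # intercept in log space = log(a)
    return a, b


def predict_cost(a, b, size):
    return a * size ** b


# Hypothetical history of similar systems: size driver vs actual cost (£k)
history_sizes = [10, 20, 40, 80]
history_costs = [50, 87, 152, 265]

a, b = fit_power_cer(history_sizes, history_costs)
print(f"CER: cost = {a:.1f} * size^{b:.2f}")
print(f"Estimate for a new system of size 30: £{predict_cost(a, b, 30):.0f}k")
```

The limitations listed above show up directly: four data points constrain the exponent poorly, and any cost driver other than size is simply missing from the model.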

- 9. Estimating Psychology
  - Motivational bias: an estimate that serves the estimator rather than being objective, e.g. making a plan fit the desired target
  - Optimism bias: considering only positive previous experiences; people consistently under-estimate, even when aware of the bias (wishful thinking)
  - Cognitive bias: using heuristics to estimate sometimes leads to poor estimates. Heuristics, or mental shortcuts, often work but can lead to cognitive bias, e.g.:
    - Anchor heuristic: estimates are biased towards an early, erroneous estimate
    - Availability heuristic: estimates are poor because estimators remember only a limited data set
    - The biases of different estimators can reinforce each other
  - Rule of Pi: the actual time to do the work will be the time estimated multiplied by π
  - Student Syndrome: only starting work towards the end of the estimated period

- 10. Expert Judgement: for both Top-Down & Bottom-up
  - Typical context: high levels of innovation, with no database of past estimates and results
  - Often there is little detail about the task being estimated and little time to do the estimating
  - Mitigate expert judgement weaknesses:
    - Use a template to gather estimates: consistency between estimators and reduced bias
    - Get buy-in by using experts from the delivery team to estimate
    - Use experts with domain knowledge
    - Mini-Delphi further reduces bias
    - Give separate estimators the same information
    - Estimate for normal conditions: work hours, seniority of staff
    - Don't allow for estimation uncertainty in individual estimates

- 11. Managing Estimation Uncertainty
  - Concurrency
    - Plans often feature concurrent tasks with minimal float, and single-point (deterministic) estimates
    - Higher concurrency means greater impact when some tasks finish late
  - Range estimates
    - Analysis shows deterministic outcomes have very low probability
    - Range estimates are more realistic
    - Even better, 3 points: minimum, most likely, maximum
    - Dates and costs then become ranges
    - The difference in cost between outcomes can be used to define case-by-case estimation uncertainty contingency
  - (Figure: typical plan analysis, showing the probability of achieving the deterministic cost. Reference 3)
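
The low probability of the deterministic outcome can be checked with a small Monte Carlo simulation. This sketch assumes hypothetical three-point estimates for three tasks done in sequence, samples each from a triangular distribution (a common, but not the only, choice for 3-point estimates) and counts how often the total comes in at or under the sum of the most likely values. It is not the method used by the tools in Reference 3.

```python
import random

# Hypothetical three-point task estimates: (minimum, most likely, maximum) in days
tasks = {
    "design": (8, 10, 16),
    "build": (15, 20, 35),
    "test": (5, 6, 12),
}


def simulate_totals(tasks, trials=20_000, seed=1):
    """Sample every task from a triangular distribution and sum them, per trial."""
    rng = random.Random(seed)
    totals = []
    for _ in range(trials):
        # random.triangular takes (low, high, mode)
        totals.append(sum(rng.triangular(lo, hi, ml) for lo, ml, hi in tasks.values()))
    return totals


deterministic = sum(ml for _, ml, _ in tasks.values())
totals = simulate_totals(tasks)
p_det = sum(t <= deterministic for t in totals) / len(totals)
print(f"Deterministic total: {deterministic} days")
print(f"P(total <= deterministic): {p_det:.1%}")
```

Because each range is skewed to the right, the sum of the most likely values sits well below the simulated mean, so the deterministic total is rarely achieved; adding more skewed tasks tends to push its probability lower still.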

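- 12. Potential Weakness of 3 Point Estimates
  - Outcomes may be pessimistic if most likely estimates are not 50% likely: a triangular distribution can give the most likely estimate well under 50% probability of not being exceeded, even when the estimator expects to achieve it more often than not
  - Typical 3 point estimate (minimum 4, most likely 6, maximum 10): the probability of the most likely estimate, $P_{ML}$, is the area to the left of the peak, $P_{ML} = \frac{6-4}{10-4} = \frac{2}{6} = \frac{1}{3}$, or 33%... NOT 50%!
  - Avoid this weakness by asking for the probability of the most likely estimate (a 4th point)
  - Example for $P_{ML} = 70\%$: create a double triangle distribution and calculate the heights A and B of the two triangles at the most likely point, using area $= \frac{1}{2} \times \text{base} \times \text{height}$:
    - $0.7 = 0.5 \times (6-4) \times A$, so $A = 0.7$
    - $0.3 = 0.5 \times (10-6) \times B$, so $B = 0.15$
  - The areas either side are not equal and will vary task by task, as defined by the 4th point
  - In practice this is needed only for the 10 to 20 most critical tasks, found by sensitivity analysis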
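
The arithmetic above can be reproduced in a few lines. This sketch follows the double triangle as described on the slide: the left triangle carries probability $P_{ML}$ and the right triangle $1 - P_{ML}$, and sampling first picks a side with probability $P_{ML}$. The function names are hypothetical.

```python
import random


def triangle_pml(minimum, most_likely, maximum):
    """Probability of not exceeding the most likely value under a plain
    triangular (3-point) distribution: the area left of the peak."""
    return (most_likely - minimum) / (maximum - minimum)


def double_triangle_heights(minimum, most_likely, maximum, pml):
    """Heights A and B of the density just left and right of the most likely
    value, so the left triangle has area pml and the right 1 - pml."""
    a = 2 * pml / (most_likely - minimum)
    b = 2 * (1 - pml) / (maximum - most_likely)
    return a, b


def sample_double_triangle(minimum, most_likely, maximum, pml, rng):
    """Draw one value from the double triangle (4-point) distribution."""
    if rng.random() < pml:
        # Left side: density rises linearly from minimum up to most_likely
        return rng.triangular(minimum, most_likely, most_likely)
    # Right side: density falls linearly from most_likely down to maximum
    return rng.triangular(most_likely, maximum, most_likely)


print(f"3-point P_ML: {triangle_pml(4, 6, 10):.0%}")
a, b = double_triangle_heights(4, 6, 10, pml=0.7)
print(f"Double triangle heights: A = {a:.2f}, B = {b:.2f}")

rng = random.Random(0)
draws = [sample_double_triangle(4, 6, 10, 0.7, rng) for _ in range(20_000)]
print(f"Share of draws <= 6: {sum(x <= 6 for x in draws) / len(draws):.1%}")
```

Sampling a side first and then a right-angled triangle reproduces the discontinuous peak (A on the left, B on the right), so the simulated share of draws at or below the most likely value matches the elicited 70% instead of the 33% implied by the plain triangle.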

- 13. Selective 4 Point Estimation: Simulation Results
  - Tornado graph: sensitivity analysis to select the tasks to estimate with 4 points
  - Result for 3 point estimates alone: risk budget £21,125
  - Result including selected 4 point estimates: risk budget £13,696
    - Higher probability of the deterministic outcome
    - Smaller, more realistic risk budget

- 14. Estimation Uncertainty Contingency
  - One strategy: estimation uncertainty 'project risk pot' = cost at 95% probability − deterministic cost
  - The business risk appetite can inform which probability to use, e.g.:
    - 10%: Team Target (likely risks do not occur)
    - 50%: Best Estimate (as many risks occur as not)
    - 90%: 'Safe' Estimate (several unlikely major risks occur)
  - Reward using less of this risk pot, but recognise that a proportion is likely to be required
    - This encourages behaviour that enhances results whilst recognising estimation uncertainty and setting realistic expectations
  - PMs use the project risk pot to drive delivery to the deterministic end date
    - This drives the right behaviour in the team: delivering on their most likely estimates
  - Selective 4 point estimating maintains competitive pricing
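
The risk pot calculation comes down to reading percentiles off a simulated cost distribution. A minimal sketch, assuming hypothetical 3-point task costs, triangular distributions and a nearest-rank percentile definition (risk analysis tools may interpolate differently):

```python
import math
import random


def percentile(sorted_values, p):
    """Nearest-rank percentile (p from 0 to 100) of an already sorted list."""
    if not sorted_values:
        raise ValueError("no values")
    rank = max(1, math.ceil(p / 100 * len(sorted_values)))
    return sorted_values[rank - 1]


# Hypothetical task cost estimates (minimum, most likely, maximum) in £k
tasks = [(8, 10, 16), (15, 20, 35), (5, 6, 12), (30, 40, 70)]

rng = random.Random(42)
totals = sorted(
    sum(rng.triangular(lo, hi, ml) for lo, ml, hi in tasks) for _ in range(20_000)
)
deterministic = sum(ml for _, ml, _ in tasks)

for p, label in [(10, "Team Target"), (50, "Best Estimate"), (90, "'Safe' Estimate")]:
    print(f"P{p} {label}: £{percentile(totals, p):.1f}k")

# Project risk pot: cost at 95% probability minus the deterministic cost
risk_pot = percentile(totals, 95) - deterministic
print(f"Deterministic cost £{deterministic}k, project risk pot £{risk_pot:.1f}k")
```

Changing the percentile in the `risk_pot` line is how a different risk appetite would be expressed; the deterministic cost stays the target the team delivers against.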

- 15. Other Methods
  - Price to Win: an estimate based on supposition or knowledge of the budget
    - Do tailor the scope of work to suit a budget
    - Don't force an estimate to fit a budget
  - Parkinson: 'Work expands to fill the time available'; equates the estimate to the available resources
  - These are not estimating methods; they are price and cost management methods

- 16. Estimating Process (flowchart)
  - Need and Strategy inform the PDP (Project Delivery Process)
  - PDP → Product Breakdown Structure → Work Breakdown Structure → Work Packages & Tasks → bottom-up 3 point estimates
  - The top-down estimate and the bottom-up estimates are rationalised
  - Outputs: the zero-risk (deterministic) cost, plus the project risk pot for estimation uncertainty, held at project and programme level
  - Risk register: opportunities lead to enhancement tasks; threats lead to mitigation tasks (if cost effective); secondary risks inform or offset
  - Contingency for project risks other than estimation uncertainty is held at portfolio level

- 17. Practical Advice & Tips
  - Choose the estimating method case-by-case to suit the project and environment
    - There is no perfect estimating method suitable for all environments
    - Many methods rely on a database or case history of past estimates and results
    - A combination of methods is often needed
  - Use Risk Management for project risks other than estimation uncertainty
  - Use the proposed delivery team to create the estimates in order to get buy-in
  - Base estimates on normal conditions: 5 day week, 8 hour day
  - Use consistent time units
  - Do a reality check on the result and rationalise bottom-up with top-down estimates
  - Range estimates are more realistic than single point (deterministic) estimates
  - Don't pad individual estimates as a means to handle estimation uncertainty
  - If the project type and environment are always the same, use a one-size-fits-all estimation uncertainty contingency; otherwise use 3 point estimating to derive the estimation uncertainty contingency

- 18. Practical Advice & Tips II
  - Estimation uncertainty is a significant project risk, but not the only one; use Risk Management to handle the other types of project risk
  - Bottom-up estimating: avoid long tasks and tasks where progress is hard to assess
  - Educated guess: beware, early estimates are often based on little information
    - They can become the baseline against which future estimates are judged (anchor heuristic)
    - Cautions delivered with that first estimate are rarely remembered
  - Don't confuse uncertainty with a lack of knowledge
    - Large ranges generally indicate guessing
    - Experience is required to estimate rather than guess
  - It's still just an estimate:
    - Project starts late: provide estimates from time T0 rather than a fixed date
    - Team members late to start: avoid 'brick-wall' starts where possible
    - Incorrect assumptions: list assumptions, track changes and adjust plans

- 19. Summary
  - Estimating is a critical competence
  - Be aware of the impact of estimator psychology
  - Significant innovation in projects limits the useful estimation methods
  - Build historical data records (estimate and result) where possible
  - Combine estimation methods for best results
  - 3 point range estimates offer case-by-case estimation uncertainty contingency
  - 4 point estimates for the few most influential tasks eradicate the potential 3 point weakness
  - A one-size-fits-all estimation uncertainty contingency is suitable if projects are all similar
  - Use Risk Management to handle project risks other than estimation uncertainty

- 20. References
  1. DK Evans, Dr. JD Lanham, Dr. R Marsh, "Cost Estimation Method Selection: Matching User Requirements and Knowledge Availability to Methods", paper for ICEC (International Cost Engineering Council), 2006. SEEDS (Systems Engineering Estimation for Decision Support), AMRC (Aerospace Manufacturing Research Centre), University of the West of England, Bristol, UK.
  2. Delphi Estimation Method, Wikipedia.
  3. Tool used for planning and 3 & 4 point estimation uncertainty calculations: Oracle Primavera Risk Analysis or Safran Risk.

- 21. Author Profile

  In my board role I led a team of 22 Project Managers and 5 Quality Engineers, and ensured Roke's £79m project portfolio delivered better-than-budget profit. I set up and ran a virtual PMO and created REP, the Roke Engineering Process, also managing the engineering tools to support it.

  After 4 years as an electronics engineer for Siemens, achieving Chartered Engineer status, I moved into project management for 14 years, at Siemens and Roke Manor Research. Successfully delivering Roke's most challenging whole-lifecycle product developments on time and under budget led to a role as Director and board member for 6 years.

  In 2013 I returned to hands-on project management as Programme Director at Cambridge Consultants, founder member of the Cambridge Science Park. Creator of the APM corporate-accredited PM Excellence Programme, I chaired a quarterly PM forum to share best practice and built a supportive PM community. I coached seven PMs to RPP and five to PQ, and all passed APMP. These investments in PM professionalism led to a turn-around and annual improvement in project results across a 400-project portfolio, and delivered above-budget performance in five consecutive years, with profits totalling £7.9m above budget.

  Passionate advocate of PM professionalism, Fellow of the APM and the IET, and author of articles published in Project and PM Today.

  ProjectManagementTopics: Professional Development, Winning Project Work, Planning, Estimating, Risk Management, Earned Value Management, Change Control, Stakeholder Management, 3 Steps to Professional Project Management: Case Study