Diagnosis: The Missing Ingredient in RTI Assessment

- 1. 204 The Reading Teacher Vol. 65 Issue 3 pp. 204–208 DOI:10.1002/TRTR.01031 © 2011 International Reading AssociationR T P E R S P E C T I V E S O N R T I DIAGNOSIS D escriptions and advice about Response to Intervention (RTI) implementations often focus on assessment. In an earlier column, Wixson and Valencia (2011) described the multiple purposes for assessment in an RTI system: ■ Screening ■ Diagnostics ■ Formative progress monitoring ■ Benchmark progress monitoring ■ Summative outcome assessment Because RTI is an approach to identifying students as learning disabled, it is important to attend to the quality of the measurements used to make high- stakes decisions about student placements. However, RTI is also intended to reduce the number of students who become identified as having a learning disability by preventing reading difficulties. This is an instructional problem and, as Scanlon and Anderson (2010) noted, too little attention is given to “the nature and qualities of the instruction that is offered” (p. 21). All students deserve high-quality first instruction (we will address this topic in an upcoming column). However, good teaching requires thoughtful assessment. Furthermore, some students continue to struggle, even when they receive very good classroom instruction. These students need more tailored instruction that is responsive to their specific strengths and areas of need. Screening measures designed to identify struggling students are usually quite general or sample only a snippet of skilled reading performance. They rarely provide the specific information needed to determine the most appropriate intervention or instruction. By itself, this would not be problematic. However, many schools and districts have created RTI systems that move straight from screening to instruction without looking more closely at the individual student, an approach called the direct route (Johnson, Jenkins, & Petscher, 2010). 
Of course, when the direct route decision is based on only one screening instrument, there is quite a grave possibility of error in classification (false negatives or false positives). As well, these single measures do not provide enough information to make good instructional decisions about individuals or small groups of students (Valencia et al., 2010). This problem is exacerbated when the school adopts a one-size-fits-all intervention, a practice sometimes called standard protocol (Fuchs et al., 2003). Although Fuchs and his colleagues were clear that a standard protocol was to be employed with groups of students with similar reading difficulties, a single screening measure often invites only one possible response, and, Marjorie Y. Lipson and Pam Chomsky-Higgins provide research and professional development support to schools through the Vermont Reads Institute at the University of Vermont, Montpelier, USA; e-mail mlipson@ vriuvm.org and pamela.chomsky-higgins@uvm.edu. Jane Kanfer is a reading specialist at Milton Elementary School in Vermont; e-mail JKanfer@mtsd-vt.org. The department editors welcome reader comments. Karen K. Wixson is dean of the school of education at the University of North Carolina at Greensboro, USA; e-mail kwixson@uncg.edu. Marjorie Y. Lipson is Professor Emerita at the University of Vermont, Burlington, USA; e-mail mlipson@uvm.edu. The Missing Ingredient in RTI Assessment MarjorieY. Lipson with Pam Chomsky-Higgins ■ Jane Kanfer TRTR_1031.indd 204TRTR_1031.indd 204 11/7/2011 4:05:47 PM11/7/2011 4:05:47 PM

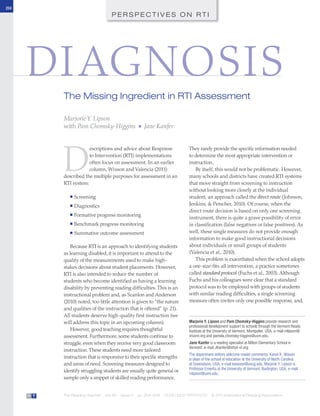

- 2. DIAGNOSIS: THE MISSING INGREDIENT IN RTI ASSESSMENT www.reading.org R T 205 for many schools, the direct route leads to just one instructional option. A very large body of research is emerging to confirm what good teachers and specialists have always known: the underlying roots of students’ reading difficulties are diverse (Aaron et al., 2008; Valencia & Buly, 2004). In addition, it is becoming quite clear that instruction focused on the wrong thing not only does not help students, but it may actually be harmful (Connor, Morrison, & Katch, 2004). It should be a very high priority for teachers and specialists to gather specific information about individual students to make appropriate instructional decisions. So, we turn our attention to an essential, but often neglected, type of assessment: diagnosis. Using Assessment for Diagnostic Purposes Because the word diagnosis is so often associated with identifying disease or its symptoms, we want to be clear that we do not assume a medical model of reading difficulties. Instead, we want to promote the original meaning of the word diagnosis, from the Greek to discern the nature and cause of anything. According to Merriam-Webster, a diagnosis is “an investigation or analysis of the cause or nature of a condition, situation or problem” (www.aolsvc .merriam-webster.aol.com/dictionary/ diagnosis). Without very good diagnostic information and/or a flexible formative assessment system, our instructional programs and student performance will not improve, and RTI will simply be an alternate route to special education placement or to permanent membership in Title I classrooms. We have been collaborating with many Vermont schools to improve literacy success for all students and have recently turned our attention to helping schools adopt a systemic approach to RTI. Pam and Marge started to work at Jane’s school 2 years ago. This large Vermont school was entering year 3 of corrective action. 
Despite several years of attention to literacy, too many students were identified for special education, and too few were benefitting from the literacy instruction they received. The reading teachers in the building were knowledgeable and committed. However, they were relying on a conventional approach to services. Using universal assessment data, they identified students near the beginning of the year, created a schedule for the students on “their caseload,” and then pulled those students from the classroom for the remainder of the year. The nature of the instruction provided varied from teacher to teacher and was not well documented. More troubling were the longitudinal data suggesting that students who participated in reading services (provided by reading teachers or special educators) were not gaining ground against their peers. Although there were exceptions, students typically continued to qualify for reading services from year to year, and their overall performance was below the state standards.

During year 1 of our collaboration, Jane and her colleagues began to examine their available assessment data much more closely. They moved from global reading levels to looking at the component areas of reading (e.g., word recognition, fluency, comprehension), and they constructed “typical profiles” of student difficulties (see Figure for the framework).

Figure. Template for Analyzing Student Data and Creating Profiles

Intervention Plan for Literacy: Template

Student Profile: [Describe or name the type of profile, for example, Struggling Grade 1 Student or Intermediate Comprehension Difficulty]

Intervention Focus: [Describe or name primary focus for intervention]

| Area(s) of Primary Concern | Evidence and Data Source |
| --- | --- |
| Phonological awareness | |
| Letter ID | |
| Sight vocabulary | |
| Fluency | |
| Decoding | |
| Vocabulary | |
| Comprehension | |
| Motivation | |
| Stamina | |
| Writing about reading | |
| Text level | |
| Other | |

Goal(s) of the Intervention:

Describe the Intervention: [Include information about:

- Duration
- Frequency
- Materials to be used
- Strategies, techniques, approaches, etc.
- Instructional framework
- Person responsible
- Relationship to classroom instruction]

Progress Monitoring: [How will instructional impact and effectiveness be assessed?]

The first profiles focused not on the students with the most severe (and least frequent) types of difficulties, but on the most common types of difficulties, on the grounds that these would have the broadest impact and might also provide insights into classroom practices that could be strengthened. These students are not necessarily the so-called “bubble kids,” those whose test results suggest that they are very nearly proficient (or “on the bubble”). Cynical practices that direct teachers to focus on those students so that schools can realize “improvement” have been criticized roundly elsewhere (Koretz, 2008). In some schools, the most common profile might be students who are close to proficiency; in others, it could be other students.

The most common profile when we started involved struggling grade 1 students who showed very limited proficiency. We called this profile “beginning struggling word learner,” and the intervention focus was strategic decoding. These were students who had, with good instruction in kindergarten, come to grade 1 knowing all or most of the letter names and many or most letter sounds. They typically knew a small collection of high-frequency sight words. However, they had not met the leveled text standard for end of kindergarten (Level B, guided reading level) and were making very slow progress in letter-sound blending and in problem solving.

These students were an early focus for us because we knew that helping them was pivotal in preventing reading failure later on, especially because another common profile in the school involved students in grades 3 through 6 who had acquired good decoding skills but whose comprehension was weak. These older students were especially worrisome because their performance actually declined as they moved through the grades.
As we created diagnostic profiles, Jane and her colleagues detailed the research-based intervention plans appropriate for each student. The result was similar to what Dorn and Henderson (2010) called a “portfolio of interventions.” Although Jane’s school has not fully implemented the portfolio of interventions, the results of the early interventions have been excellent. By the middle of the second year of collaboration, every student in grade 1 who had been identified as most struggling met or exceeded the standard on the periodic benchmark assessment used by the school, and most were released from intervention. Importantly, these students did not receive more intervention than they had in the past; they simply received more tailored and focused instruction based on careful and comprehensive assessment and the research-based interventions, confirming what others have observed: More intervention is not necessarily better (Wanzek & Vaughn, 2008).

Diagnostic Assessment Practices

Classroom teachers and reading specialists may already have excellent assessment information available to them, collected for screening, periodic benchmarking, or outcomes evaluation. In these cases, the challenge is to adopt systems that permit effective use of that information for instructional decision making. Designing data-team formats and/or profiles can facilitate that considerably. Other schools and districts either have few data or have restricted teachers’ access to the information. Two actions may be needed: (1) disaggregating existing data to mine it for insights and/or (2) planning to gather additional information. In either event, it is important to start with a matrix of the most important component areas of reading (and writing). These are visible on the profile planner (see Figure).

Of course, not all information is equally relevant for all students or all ages/grades. One of the major difficulties with a standard protocol approach is that it may not recognize that there are developmental changes in the relationships between and among various factors and reading performance (Valencia et al., 2010).

Whenever possible, it is efficient to gather observations about multiple types of reading performance with one tool. At the very least, this can help narrow the need for further assessment. For example, Jane’s school, like many we work with, uses one of the many structured inventories or benchmark assessment systems available (e.g., DRA, Benchmark Assessment System, QRI-4), which yields a text level for each student. Over the past 2 years, the reading professionals in that building have begun to examine the results more closely for evidence of word recognition accuracy, fluency, and comprehension. Whereas they had previously used only the text level to make decisions about student placements and programs, they now examine (and keep track of) differences between students’ word recognition accuracy and their fluency. They also consider whether students’ accuracy is affected by phonics decoding, sight word recognition, or both. Finally (and especially for older students), they examine differences between and among results in comprehension, fluency, and word identification. They have differentiated instruction for those students who were struggling with comprehension even though they were quite fluent, and vice versa.

It is important to examine assessment results in more than one way because different measures can provide different insights. As Valencia and her colleagues (2010) demonstrated, we might get quite a different picture of a student’s strengths and areas of need from an array of information than if we relied on only one score.
The student profile form was intended to encourage teachers to look at multiple measures to identify areas of concern that were not at all evident with only word-level assessment data. This comprehensive range of information is important for all struggling readers, but it is especially important for making good instructional decisions for older students and English learners (Klingner, Soltero-Gonzales, & Lesaux, 2010; Vaughn & Fletcher, 2010). Every bit of this diagnostic information can be gathered during comprehensive benchmarking events.

Once we have looked at the data, we can make additional decisions about both assessment and instruction. Good diagnosis can sometimes lead to more assessment. For example, if a student has made many miscues during reading, further examination using a modified miscue analysis can be helpful (Lipson & Wixson, 2009). If the student is exceptionally accurate, there is no need to explore that component. Similarly, if the student’s comprehension is strong, we probably do not need to look to vocabulary. This might change, of course, if we have evidence that the student performs less well on expository text than on narrative. Well-planned diagnostic teaching episodes can be helpful as well (Fuchs et al., 2007; Lipson & Wixson, 1986, 2009).

Using Assessment to Differentiate

We want to emphasize that this type of closer look, engaging in diagnostic assessment, is not necessary for everyone. One of the advantages of an effective overall assessment system is that it can identify the students who are benefitting from classroom instruction and who are doing well. If we test those children just a bit less (or use good formative assessment effectively), then it frees up time to assess the students who really have us worried. Of course, diagnostic assessment will not make any difference if it does not lead to action.
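
For readers who keep benchmark results in a spreadsheet or data system, the follow-up decisions described above can be written down as explicit rules. The sketch below is only an illustration and not a tool used at Jane's school: the names `ReadingObservation` and `follow_up_assessments` are hypothetical, the yes/no fields stand for a teacher's professional judgment and not for any cut score, and treating weak comprehension as a reason to look at vocabulary is our reading of the guidance above.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ReadingObservation:
    """Hypothetical summary of one benchmark reading (teacher judgments, not scores)."""
    many_miscues: bool                  # student made many miscues during oral reading
    comprehension_strong: bool          # comprehension judged strong on this text
    weaker_on_expository: bool = False  # evidence of weaker performance on expository text


def follow_up_assessments(obs: ReadingObservation) -> List[str]:
    """Suggest which closer looks, if any, are worth the assessment time."""
    steps: List[str] = []
    # Many miscues: a modified miscue analysis can show what is going wrong.
    # An exceptionally accurate reader needs no further look at that component.
    if obs.many_miscues:
        steps.append("modified miscue analysis")
    # Strong comprehension usually means vocabulary need not be examined,
    # unless the student does less well on expository than on narrative text.
    if not obs.comprehension_strong or obs.weaker_on_expository:
        steps.append("vocabulary assessment")
    return steps


if __name__ == "__main__":
    accurate_but_puzzled = ReadingObservation(many_miscues=False, comprehension_strong=False)
    print(follow_up_assessments(accurate_but_puzzled))
```

An empty list is a meaningful result here: it marks a student who does not need a closer look, which frees assessment time for the students who do.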
Matching students’ needs with instruction by using “if-then” thinking (Strickland, 2005) is at the heart of effective and efficient assessment and instruction and is essential if RTI is going to make a difference. Students who are struggling for success in the classroom deserve differentiated instruction in the classroom and tailored interventions in supplemental settings. Fortunately, there is an increasingly strong body of research that points to the types of instruction and intervention that can help to prevent reading disabilities and/or improve students’ abilities during later years.

REFERENCES

Aaron, P.G., Joshi, R.M., Gooden, R., & Bentum, K.W. (2008). Diagnosis and treatment of reading disabilities based on the component model of reading: An alternative to the discrepancy model of LD. Journal of Learning Disabilities, 41(1), 67–84. doi:10.1177/0022219407310838

Connor, C.M., Morrison, F.J., & Katch, L.E. (2004). Beyond the reading wars: Exploring the effect of child-instruction interactions on growth in early reading. Scientific Studies of Reading, 8(4), 305–336. doi:10.1207/s1532799xssr0804_1

Dorn, L.J., & Henderson, S.C. (2010). The comprehensive intervention model: A systems approach to RTI. In M.Y. Lipson & K.K. Wixson (Eds.), Successful approaches to RTI (pp. 88–120). Newark, DE: International Reading Association.

Fuchs, D., Fuchs, L.S., Compton, D.L., Bouton, B., Caffrey, E., & Hill, L. (2007). Dynamic assessment as responsiveness to intervention. Teaching Exceptional Children, 39(5), 58–63.

Fuchs, D., Mock, D., Morgan, P.L., & Young, C. (2003). Responsiveness to intervention: Definitions, evidence, and implications for the learning disabilities construct. Learning Disabilities Research & Practice, 18(3), 157–171. doi:10.1111/1540-5826.00072

Johnson, E.S., Jenkins, J.R., & Petscher, Y. (2010). Improving the accuracy of a direct route screening process. Assessment for Effective Intervention, 35(3), 131–140. doi:10.1177/1534508409348375

Klingner, J.K., Soltero-Gonzales, L., & Lesaux, N. (2010). RTI for English-language learners. In M.Y. Lipson & K.K. Wixson (Eds.), Successful approaches to RTI: Collaborative practices for improving K-12 literacy (pp. 134–162). Newark, DE: International Reading Association.

Koretz, D. (2008). Measuring up: What educational testing really tells us. Boston: Harvard University Press.

Lipson, M.Y., & Wixson, K.K. (1986). Reading disability research: An interactionist perspective. Review of Educational Research, 56(1), 111–136.

Lipson, M.Y., & Wixson, K.K. (2009). Assessment and instruction of reading and writing difficulties: An interactive approach (4th ed.). New York: Pearson Education.

Scanlon, D.M., & Anderson, K.L. (2010). Using the interactive strategies approach to prevent reading difficulties in an RTI context. In M.Y. Lipson & K.K. Wixson (Eds.), Successful approaches to RTI (pp. 20–65). Newark, DE: International Reading Association.

Strickland, K. (2005). What’s after assessment? Follow-up instruction for phonics, fluency, and comprehension. Portsmouth, NH: Heinemann.

Valencia, S.W., & Buly, M.R. (2004). Behind test scores: What struggling readers really need. The Reading Teacher, 57(6), 520–531.

Valencia, S.W., Smith, A.T., Reece, A.M., Li, M., Wixson, K.K., & Newman, H. (2010). Oral reading fluency assessment: Issues of construct, criterion, and consequential validity. Reading Research Quarterly, 45(3), 270–291. doi:10.1598/RRQ.45.3.1

Vaughn, S., & Fletcher, J. (2010). Thoughts on rethinking response to intervention with secondary students. School Psychology Review, 39(2), 296–299.

Wanzek, J., & Vaughn, S. (2008). Response to varying amounts of time in reading intervention for students with low response to intervention. Journal of Learning Disabilities, 41(2), 126–142. doi:10.1177/0022219407313426

Wixson, K.K., & Valencia, S.W. (2011). Assessment in RTI: What teachers and specialists need to know. The Reading Teacher, 64(6), 466–469.

MORE TO EXPLORE

IRA Books

- RTI and the Adolescent Reader by William G. Brozo (copublished with Teachers College Press)
- RTI in Literacy—Responsive and Comprehensive edited by Peter H. Johnston
- Successful Approaches to RTI: Collaborative Practices for Improving K–12 Literacy edited by Marjorie Y. Lipson and Karen K. Wixson

IRA Journal Articles

- “Response to Intervention (RTI): What Teachers of Reading Need to Know” by Eric M. Mesmer and Heidi Anne E. Mesmer, The Reading Teacher, December 2008
- “Responsiveness to Intervention: Multilevel Assessment and Instruction as Early Intervention and Disability Identification” by Douglas Fuchs and Lynn S. Fuchs, The Reading Teacher, November 2009
