Spie2006 Paperpdf

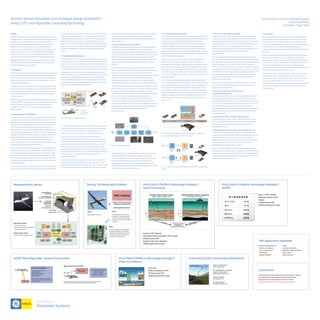

Avionics sensor simulation and prototype design workstation using COTS reconfigurable computing technology

James Falasco, GE Fanuc Embedded Systems, 1240 Campbell Road, Richardson, Texas 75081

Abstract

This paper reviews hardware and software solutions that allow for rapid prototyping of new or modified embedded avionics sensor designs, mission payloads and functional subassemblies. We define reconfigurable computing in the context of being able to place various PMC modules, depending upon mission scenarios, onto a base SBC (Single Board Computer). This SBC could be either a distributed or shared memory architecture concept and have either two or four PPC7447A/7448 processor clusters. In certain scenarios, various combinations of boards could be combined in order to provide a heterogeneous computing environment.

Keywords: Sensor simulation, reconfigurable computing, video compression, video, reflective memory, payload integration, sensor fusion, high speed data acquisition, video surveillance

1. Introduction

The design of avionics sensors and mission payloads can be a complex and time-consuming process. However, the design cycle can be significantly shortened if the system designer has access to a flexible, reconfigurable development environment that closely mimics the capabilities and technologies of the deployed system.

By integrating various PMC modules with a scalable, reconfigurable multiprocessor architecture, it is possible to create a development tool that allows the system designer to simulate, quickly and accurately, the various sensor designs and mission components to be fielded, and to test them with real data sets. Specifically, we present a rapid prototyping and rapid evaluation system that will simplify the establishment of performance requirements and allow the quick evaluation of hardware and software components being considered for inclusion in a new sensor system design or a legacy platform upgrade.

2. An Open Systems, COTS Platform

The system hardware and software used to evaluate sensor designs and mission payload components and algorithms should be open and reconfigurable to allow for the mixing and matching of various vendor offerings. Provision for hardware independence is critical, since the hardware is very likely to evolve rapidly at the pace of new computer technology. The software infrastructure should be scalable and flexible, allowing algorithm developers to spend their time and budget addressing the important functionality and usability aspects of the system design. The system proposed here, a test and evaluation workstation built around reconfigurable hardware and a component-based software toolset, provides the necessary tools to ensure the success and cost effectiveness of initial sensor design and payload development.

At the center of any scalable prototyping system is a reconfigurable multiprocessing CPU engine with associated memory. The system depicted in Fig. 1 provides developers a scalable environment for application design and test. A COTS single board computer (SBC) tightly integrated with a PMC FPGA card for design experimentation forms the core system. Other PMC modules can then be selected depending on the type of sensor input that needs to be processed.

One of the main advantages of this approach is the ability to rapidly prototype mockups for test and evaluation without concern for the limitations of embedded development at this early stage. The COTS SBC shown can host two PMC modules per card. The video card can efficiently manage real sensor input coming in from an E/O sensor or camera, and also display that data in a fused fashion. This approach allows the system builder to work in the lab and the field using the same hardware/software environment. This system configuration also provides the developer with a test bed to define and design new hardware requirements and processing streams.

3. Prototyping System Structure

Modern embedded avionics sensor design is primarily driven by the goal of providing a net-centric flow of data from platform to platform. The achievement of this vision depends on transferring and processing vast amounts of data from multiple sources. Let’s examine how one could use this approach to control and manage various sensors in a rapid prototyping environment.

As depicted in Figure 1, the host server for the embedded avionics sensor design workstation is a COTS dual 7447A PowerPC VMEbus SBC. The board’s architecture provides a distributed processing environment that allows scaling of multiple processing nodes to achieve high performance for the most demanding signal and imaging applications associated with various sensor and mission payload design requirements.

Fig. 1. FPGA vision and graphics platform

The COTS SBC architecture combined with the FPGA/video cards allows for seamless mapping of imaging applications oriented toward change detection and sensor fusion, which will allow the systems designer to view multiple data streams in a simulated real-time display environment.

The SBC implements processing nodes using the latest Motorola 7447A/7448 PowerPC® processors running at up to a 1.4 GHz clock rate. Using the distributed processing architecture of the SBC, one bridge chip per node allows the Front Side Bus (FSB) of each PowerPC to run in MPX mode up to its maximum rate of 133 MHz. The high performance data transfer mechanism facilitated by the built-in 64-bit full duplex crossbar in the Discovery II bridge permits concurrent data transfers between different interfaces as well as transaction pipelining for same source and destination transactions.

The prototyping architecture outlined here is based on a combination of tightly integrated reconfigurable computing, video and graphics.
This architecture positions the embedded avionics sensor design community to use the proposed system in developing the actual controller for payload packages, as well as the sensor itself, and in its deployment integration. Most importantly, it allows the system to be maintained and extended cost-effectively as new technologies become available.

3.1 An Example: Infrared (IR) scene projection

Increased sensor performance and bandwidth have begun to overwhelm existing backend processing capability. Current test and evaluation methods are not adequate for fully assessing the operational performance of imaging infrared sensors while they are installed on the weapon system platform. However, the use of infrared (IR) scene projection in test and evaluation can augment and redefine the methodologies currently used to test and evaluate forward looking infrared (FLIR) and imaging IR sensors. One could project accurate, dynamic and realistic IR imagery into the entrance aperture of the sensor, such that the sensor would perceive and respond to the imagery as it would to the real-world scenario.

This approach covers development, analysis, integration, exploitation, training, and test and evaluation of ground and aviation based imaging IR sensors, subsystems and systems. It applies to FLIR systems and imaging IR missile seekers and guidance sections, as well as non-imaging thermal sensors.

The systems approach proposed in this paper has the scalability to accomplish this type of reconfigurable sensor mix and match. Algorithms such as the one depicted in Figure 2 could be transformed into a MATLAB® Simulink® Blockset for easy execution in a reconfigurable system utilizing FPGA- and PPC-based computing elements. The demands of embedded avionics hardware-in-the-loop (HWIL) simulation include low latency, high data rates and interfacing. It is essential to have a capable platform for handling and processing the data streams.
Tools must also complement this platform, so that a systems designer can construct the final system by combining design tools such as MATLAB and Simulink with a reconfigurable computing platform.

Fig. 2. Example: sensor images

This approach allows one to demonstrate how algorithms can be implemented and simulated in a familiar rapid application development environment before they are automatically translated for downloading directly to the distributed multiprocessing computing platform. This complements the established control tools, which usually handle the configuration and control of the processing systems, leading to a tool suite for system development and implementation.

3.2 The advantages of FPGA Computing

As the development tools have evolved, the core processing platform has also been enhanced. These improved platforms are based on dynamically reconfigurable computing, utilizing FPGA technologies and parallel processing methods that more than double the performance and data bandwidth capabilities. This offers support for processing of images in infrared scene projectors with 1024 x 1024 resolution at 400 Hz frame rates. Simulink Blocksets could supply the programming needed to organize algorithms, which could then be partitioned to run on a multiprocessor FPGA/PPC-based configuration.

Another key component of a reconfigurable, scalable embedded avionics sensor design and prototyping capability is access to FPGA-based processing. An FPGA (Field Programmable Gate Array) is an array of logic blocks that can be ‘glued’ together (or configured) to produce higher level functions in hardware. The devices discussed here are based on SRAM technology; that is, the configuration is defined on power up, and when power is removed the configuration is lost until the device is ‘reconfigured’ again. Because an FPGA implements its functions directly in hardware and operates as a parallel device, it can execute those functions faster than software.
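As a rough check on the bandwidth figures in Section 3.2, the raw data rate of a 1024 x 1024, 400 Hz scene projector stream can be compared with the peak rate of a 64-bit path clocked at 133 MHz, the width and clock quoted for the SBC. The sketch below is illustrative arithmetic only. The 16-bit pixel depth is an assumption (the paper does not state one), the function names are hypothetical, and a peak bus rate is an upper bound that sustained transfers will not reach.

```python
def frame_stream_rate(width, height, frame_hz, bits_per_pixel):
    """Raw (uncompressed) data rate of a frame stream, in bytes per second."""
    return width * height * frame_hz * bits_per_pixel // 8


def bus_peak_rate(bus_width_bits, clock_hz):
    """Theoretical peak rate of a parallel bus, in bytes per second."""
    return (bus_width_bits // 8) * clock_hz


# Projector format from Section 3.2; 16 bits per pixel is an ASSUMPTION.
ASSUMED_BITS_PER_PIXEL = 16
irsp_rate = frame_stream_rate(1024, 1024, 400, ASSUMED_BITS_PER_PIXEL)

# 64-bit path at 133 MHz, as quoted for the SBC.
bus_rate = bus_peak_rate(64, 133_000_000)

# Fraction of the theoretical bus peak consumed by the raw stream.
utilization = irsp_rate / bus_rate

print(f"stream: {irsp_rate / 1e6:.1f} MB/s")
print(f"bus peak: {bus_rate / 1e6:.1f} MB/s")
print(f"utilization of peak: {utilization:.1%}")
```

Under the assumed 16-bit depth the raw stream is about 839 MB/s, close to 79% of the 1064 MB/s peak. That leaves little headroom on a single path, which is consistent with the paper's emphasis on FPGA preprocessing and distributed processing nodes.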
FPGAs, as programmable “ASICs”, can be configured for high performance processing and excel at continuous, high bandwidth applications. FPGAs can accept inputs from digital and analog sensors (LVDS, Camera Link, RS-170), to which the designer can interactively apply filters and carry out processing, compression, image reconstruction and encryption types of applications. Examples of the flexibility of this approach, using COTS PMC modules hosted by a COTS multiprocessing base platform, are shown in Figures 3 and 4.

Fig. 3. Example: FPGA application. PMC FPGA processor for capture and compression. PMC module for graphics and display.

Fig. 4. RS-170/MPEG-4 video PMC. Integrated bundle of PMC, mezzanine and MPEG-4.

3.3 The ‘Mix and Match’ Platform Advantage

Today’s soldier, who is the ultimate customer of the embedded systems designer, is faced with a continuously fluid chain of world events. These changing events map closely onto the deployment of the various air, sea and land platforms that contain the reconfigurable embedded systems architecture discussed in this paper. For example, based on changing mission requirements, any of the following three areas might be needed by the soldier: sonar processing, SIGINT, or video surveillance. The system designer addressing these application areas could place various PMC modules together in the prototyping system with relative ease.

Specifically, these applications might collectively require the following PMC modules:

PMC #1: Graphics
PMC #2: Video Compression
PMC #3: Reflective Memory
PMC #4: 1553
PMC #5: High Speed Data Acquisition
PMC #6: Race++

A key cornerstone of the ‘mix and match’ strategy is that while the PMC modules may change from application to application, the core SBC ‘multiprocessor’ engine and its associated software tool chain remain constant. The same can be said for the VME enclosure that contains the processing cards.
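The module list above, together with the pairings given for the three application areas in Sections 3.4 to 3.6, can be captured in a small lookup table. The following is a minimal sketch, not part of the described toolset: the dictionary keys and function names are hypothetical, and the only constraint modeled is the two PMC sites per SBC stated in Section 2.

```python
# PMC module numbering as listed in Section 3.3.
PMC_CATALOG = {
    1: "Graphics",
    2: "Video Compression",
    3: "Reflective Memory",
    4: "1553",
    5: "High Speed Data Acquisition",
    6: "Race++",
}

# The SBC described in the paper hosts two PMC modules per card.
PMC_SITES_PER_SBC = 2

# Pairings from Sections 3.4 to 3.6; the keys are hypothetical labels.
MISSION_LOADOUTS = {
    "sonar": (1, 5),
    "sigint": (6, 3),
    "video_surveillance": (2, 4),
}


def loadout_for(mission):
    """Return the PMC module names for a mission, checking the site limit."""
    modules = MISSION_LOADOUTS[mission]
    if len(modules) > PMC_SITES_PER_SBC:
        raise ValueError(f"{mission} needs more than {PMC_SITES_PER_SBC} PMC sites")
    return [PMC_CATALOG[number] for number in modules]


def swap_plan(current, target):
    """Return (modules to remove, modules to install) when changing missions."""
    current_set = set(MISSION_LOADOUTS[current])
    target_set = set(MISSION_LOADOUTS[target])
    remove = [PMC_CATALOG[n] for n in sorted(current_set - target_set)]
    install = [PMC_CATALOG[n] for n in sorted(target_set - current_set)]
    return remove, install


if __name__ == "__main__":
    print(loadout_for("sonar"))
    print(swap_plan("sonar", "sigint"))
```

In this sketch only the PMC pair changes between missions, while the SBC and enclosure stay fixed; that is the point of the ‘mix and match’ strategy.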
Let’s now examine the application areas and see how various PMC combinations could be placed together to address the processing requirements of each application.

3.4 Sonar Processing: PMC #1 (Graphics), PMC #5 (High Speed Data Acquisition)

In this example the PMC Graphics card would be used to display sonar waterfall data, perhaps with an overlay of tactical positions. The High Speed Data Acquisition PMC could be used to handle the information coming in from a high speed sensor interface. The combination of the two modules hosted by the multiprocessor-enabled SBC could move from this application area to another type of sonar processing, triggered by software, or the base modules could be switched to those shown in the SIGINT example below.

3.5 SIGINT: PMC #6 (Race++), PMC #3 (Reflective Memory)

In a SIGINT application scenario, a Race++ PMC module is tied to other Race++ peripherals to provide interfacing, while the reflective memory module stores data acquired in real time that could be used for post-processing analysis.

3.6 Video Surveillance: PMC #2 (Video Compression), PMC #4 (1553)

In the video surveillance application area, the video compression PMC module would pre-process and reduce the incoming data stream and pass it to the multiprocessor base system for potential change detection analysis. Then, using the PMC 1553 module, one could communicate with an external avionics platform, perhaps to control or guide ordnance being placed upon the target under surveillance.

In each of the three examples above, the modules are interchangeable depending upon the specific mission. This approach would allow the soldier to move module packages between platforms, achieving different mission scenarios through software loading while maintaining the core multiprocessing base and packaged environment.

4. Conclusions

The goal of integrating a COTS system such as the one depicted here is to allow designers to use the same environment in the lab that they could then take to the field for live data collection. This is of particular value to a system engineer, who could use such an environment to perform new development activities. Because of these efficiencies, the outlined prototyping system would pay off in accelerated product development time.

Systems designers are faced with the challenge that today’s sensors generate data at a rate far faster than the backend systems are configured to process. Added to this is the reality that a sensor fusion “paradigm” is now mandatory for designers wishing to turn concepts into reality rapidly. A key component of a flexible hardware system, of course, is a software structure that enables designers to go from their ideas and algorithmic concepts to code or HDL.

Packaging of the system is largely dependent on the user’s requirements for flexibility. Should the user desire a system that can be scaled up by adding cards, a chassis with more slots could be configured to accommodate them. The key point is that scalability exists at two levels: modules can be added to the base processing units, and the entire system can be scaled as well.
Text recovered from the figures and diagrams:

Fig. 1 block diagram labels (dual-node SBC): 1 GHz 7448 or 7447A PowerPC (two nodes); PPC to PCI-X Bridge (64360); 256 MB DDR; 32 MB user flash; NVRAM; Gigabit Ethernet; multifunction serial; 64-bit/133 MHz paths, up to 1064 MB/s peak; PMC 1 and PMC 2 with PCI-X bridges; 2eSST VME bridge; VME bus P1, up to 320 MB/s peak.

Representative sensor labels: optical filter; filter wheel; intensifier; focal backplane; intensifier high voltage power supply; microcontroller electronics; CCD imaging chip and video electronics. Video rate camera: 30 frames per second, average image size (after registration) 642 x 343 pixels (8 bit). Spinning filter wheel: creates 6-band multispectral image.

Algorithm diagram blocks: band interleaved image input; input format; matrix processor; demean; window averager; target window average; background/guard window averager; cross product generator; RX image output.

Mix & Match Platform Advantage, Example 1 (Sonar Processing): Graphics PMC module; High Speed Data Acquisition PMC module; multiprocessor BSP; graphics SW layer (OpenGL); adding applications layer.

Mix & Match Platform Advantage, Example 2 (SIGINT): Race++ PMC module; Reflective Memory PMC module; multiprocessor BSP; adding applications layer. Reconfigurable system environment with RF receiver and Signal Analysis Function (SAF). Real-time capabilities: real-time signal detection, signal tracking, operator event marking, signal classification, fusion of data. Near-real-time capabilities: automatic detection, signal localization, display and analysis of results, operator in the loop. Incoming information is pre-processed, processed and post-processed, and stored for future analysis (digital capture of signal data, data tapes). Processing and analysis may be performed on the ground at near-real-time rates by transfer of information from platform to ground station.

Mix & Match Platform Advantage, Example 3 (Video Surveillance): 1553 PMC; Video Compression PMC; multiprocessor BSP; adding applications layer.

UAV application examples: battle damage assessment; electronic surveillance; cross-cueing sensors; FOPEN radar; minefield detection; LADAR; data link compression; all weather target acquisition; sensor fusion; mission planning.

Conclusions slide: existing platforms and new designs will continue flying for decades; incremental mission payload responsibilities will be added; requirements for interoperability continue to increase; developers will need to shrink the payload processing system.

Overcoming data compression bottlenecks (product lines): Pentium enabled SBCs (VMIC product line); PPC enabled SBCs in single, dual and quad configurations (Richardson product line); display capability (CDI product line); storage capability (Camarillo product line); 1553 data link.

Solving the bandwidth problem: Step 1, data is acquired in real time. Step 2, data is passed to the PMC module to be processed and on to the Nexus Single Board Computer for backend processing and matching for decisions (mission driven scenarios). Step 3, the process of the first two steps allows the system developer to combine real world data with simulated scenarios and compare and contrast them in order to hone overall system design efficiency.

Figs. 3 and 4 labels (video capture and compression): video camera and audio input; TS-PMC with Stratix FPGA (capture, filter, format, encrypt, compress); dual RS-170 mezzanine; two MPEG-4 compression inputs; RTP encapsulated MPEG-4 bit-stream; WAVE software drivers (PCI bus); video server application and CPU; server configuration via TCP/IP; GPU PMC (graphic generation, overlay, display); multiprocessor SBC; thin pipe network, encrypter, wireless network. Integrated bundle: FPGA PMC, Video Compression PMC, video compression encoder with host decode.