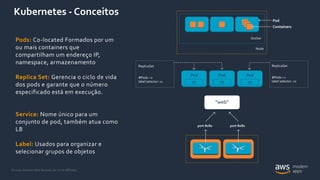

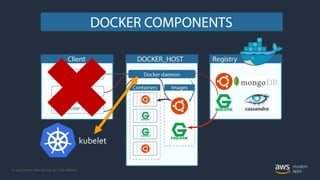

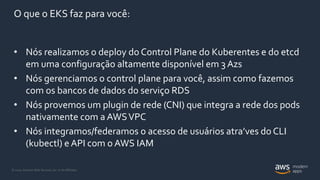

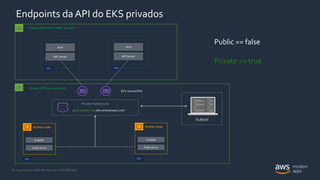

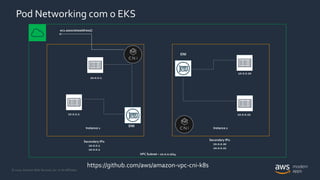

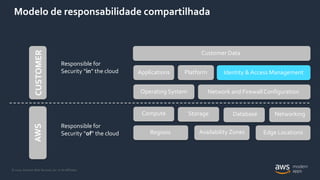

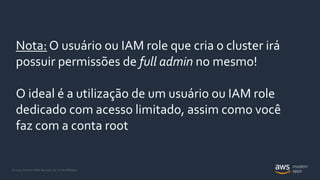

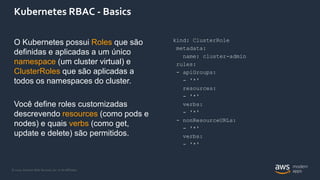

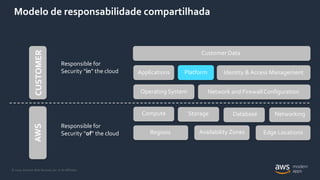

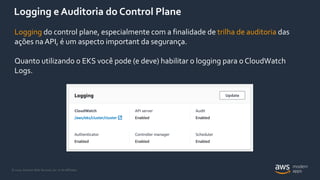

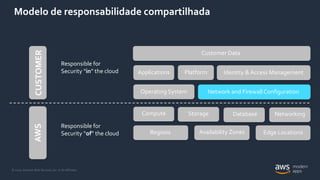

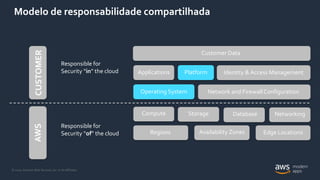

The document introduces Amazon Elastic Kubernetes Service (EKS), a managed Kubernetes service on AWS. It describes the main concepts of EKS, including how it runs and manages the Kubernetes control plane, integrates worker nodes with the AWS VPC, and provides IAM-based authentication and authorization. The document also discusses important security considerations, such as logging and auditing, RBAC, and networking in EKS.