Case studies of Test Driven Development

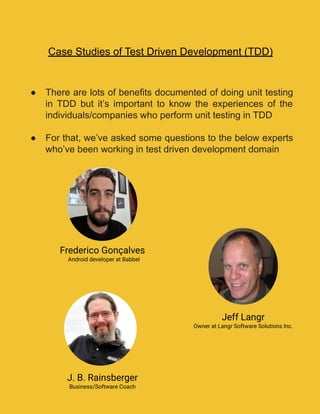

- 1. Case Studies of Test Driven Development (TDD)
  - Many benefits of unit testing in TDD are documented, but it’s also important to know the experiences of the individuals and companies who practise it.
  - To that end, we put some questions to the following experts, who have been working in the test driven development domain:
    - Frederico Gonçalves, Android developer at Babbel
    - Jeff Langr, Owner at Langr Software Solutions Inc.
    - J. B. Rainsberger, Business/Software Coach

- 2. Case Study 1. Expert: Frederico Gonçalves

  What difference in defect rate have you noticed in unit testing since you started working in TDD?

  Before using TDD, a lot of things were caught later on by QA and thrown back to developers. I started doing TDD right away at Babbel, so there is no improvement to measure here. In my old company, after we adopted TDD the issues reported by QA decreased considerably. We used to have on average 5 issues popping up in QA, and this went down to 1 or 2. We also used to have a 95% crash-free rate, and it increased to 98%.

  Bhat and Nagappan’s case study at Microsoft showed that development time using TDD grew by 15-35%. What is your experience with development time?

  The development time increased for sure, but what we lose in development time we gain in less QA and fewer bugs in the long run.

  Both defect rate and development time affect the cost of development. Have you noticed a cost reduction after implementing TDD at your organization?

  Hard to say. We develop for ourselves, meaning we don’t really build apps for other clients whom we would charge per hour. As explained, you definitely notice an increase in development time and a decrease in the number of issues reported by QA. In general, we spend less time releasing new features, and therefore I guess one could say it’s cheaper to develop.

- 3. In most cases, TDD results in good test coverage and a lasting regression test suite. Did you experience any increase in test coverage? If yes, then how much?

  Yes, definitely. This is actually something we actively measure here at Babbel. It increased by about 40%.

  Case Study 2. Expert: Jeff Langr

  What difference in defect rate have you noticed in unit testing since you started working in TDD?

  I can relate a couple of stories. I worked with an insurance company that deployed a moderately sized (~100,000 SLOC) test-driven Java app to production. In its first 12 months, they uncovered only 15 production defects in total. This is dramatically fewer than in a typical production application.

  I worked as a Clojure programmer from 2013 to 2016 using TDD. I did not code any “logic” defects during this time. We did have integration-related defects and defects related to misunderstanding customer interests.

  In the work I do with customers, the code that we test-drive does not exhibit logic defects for intended behaviour.

- 4. TDD does not remove all defects. Among other classes of defects, you will still have integration-related defects, defects related to misunderstood or missed requirements, and defects from unexpected interactions, but even these begin to reduce in number when TDD is employed.

  Bhat and Nagappan’s case study at Microsoft showed that development time using TDD grew by 15-35%. What is your experience with development time?

  I believe the studies indicate that TDD creates an increase in “initial development time” in comparison to other projects. These figures, as far as I know, do not include the costs of rework due to defects, or other costs associated with defects; nor do they consider the increased cost of long-term development when the quality of the code (in the absence of continual refactoring) decreases.

  I put little stock in any one study. That most of the studies show similar results, however, suggests that the costs and values attributed to TDD are reasonably in line with reality.

  From a personal stance, I firmly believe TDD has allowed me to increase my development speed over time as the codebase for any given project grows in size. I can relate, and have related, anecdotes about how not practising TDD increased the effort required, even after a very short amount of time.

  During my Clojure development years, we created a significant amount of code in a short time using TDD. I do not believe we would have gone any faster by abandoning TDD, particularly given the dynamic nature of changing requirements.

- 5. Both defect rate and development time affect the cost of development. Have you noticed a cost reduction after implementing TDD at your organization?

  Things like this are always hard to quantify. That our Clojure codebase had few logic defects did mean we had reduced costs in the areas of rework, defect management, and support. I also believe that we had reduced costs in terms of the time required to understand current code behaviour.

  In most cases, TDD results in good test coverage and a lasting regression test suite. Did you experience any increase in test coverage? If yes, then how much?

  Since we were doing TDD on most of the Clojure code, our coverage percentage, at least on the system I worked on, was likely in the high 90% range by definition. We never measured it, as it was not a relevant number (if everyone is practising TDD correctly, there is no need to track the coverage).

  In the past, I’ve test-driven systems and later come back to measure code coverage out of curiosity; in these cases, the numbers have always been in the high 90% range. Certain areas of code (pure view code with no real logic) end up having low or no coverage, but that’s the point of removing all logic from them.

- 6. Case Study 3. Expert: J. B. Rainsberger

  What difference in defect rate have you noticed in unit testing since you started working in TDD?

  When I started practising TDD, my personal defect rate decreased by over 90%. Clearly, I had a very high defect rate at the time. More importantly, though, I noticed that when I practised test-first programming (even without the emphasis on refactoring), I produced different kinds of defects from those I had produced before. I would still make the occasional very silly mistake, and I would still produce very complicated, difficult-to-diagnose defects from unexpected behaviour emerging from integrating larger parts of the system, but 99% of the non-trivial-but-easy-to-understand defects disappeared because I found those mistakes myself.

  I now think more in terms of the cost of mistakes than the frequency of mistakes. Practising TDD helps me reduce the cost of mistakes by reducing the time between when I make a mistake and when I notice it. Herein lies a lot of the cost saving of test-first programming: the sooner I see the mistake, the more easily I fix it.

  Bhat and Nagappan’s case study at Microsoft showed that development time using TDD grew by 15-35%. What is your experience with development time?

  I don’t know the case study well, so I don’t know whether they compare apples to apples. When I worked at IBM, I worked in a common enterprise environment that had “code freezes”. Before code freeze, programmers built new features, and after code freeze, programmers only fixed the most urgent manager-approved defects.

- 7. After I started practising TDD, I delivered probably 20% less value up to code freeze, but after code freeze, I earned the trust of the managers to deliver more features right up until the date of release. I can’t quantify the additional value of that trust, but I found it more intuitively valuable over the long term than squeezing out 10% more code over the short term.

  Moreover, given the evolutionary design aspect of TDD, I noticed greater longer-term benefits, since I could build version 4 on top of the same code from version 1. I didn’t need to throw everything away every 2-3 years. These savings certainly compensate for going even 20% slower over the short term.

  Both defect rate and development time affect the cost of development. Have you noticed a cost reduction after implementing TDD at your organization?

  I mostly noticed increased cost certainty, meaning a large decrease in unexpected or unplanned costs. Although I didn’t stay at IBM long enough to witness it myself, I know that when cost uncertainty decreases, cost itself also gradually decreases.

  In most cases, TDD results in good test coverage and a lasting regression test suite. Did you experience any increase in test coverage? If yes, then how much?

  The difference was night and day. When I practise TDD, I get the 85% coverage that matters most as a natural part of the process of writing code.