Laboratory Introduction: Information Infrastructure Systems (情報基盤システム学), NAIST

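- 1. The Internet, IoT

  Paper page shown on the slide (Vol.53 No.4 1349–1359 (Apr. 2012)). Part of the page is cut off, so only these fragments survive:

  … as a pair of (entrance/exit) icons, going up from the stairs … corresponds to the … icon. Such an entrance and … the paired icon … the horizontal field of view …, an arrow is displayed at the top edge of the screen, … the paired icon exists … (Fig. 5). Google Street View and … the latitude and longitude at which the photo was taken are strictly … presents icons showing the connection of … facilities that connect levels and their correspondence … can …

  Figure labels: … before mouse-over; … after mouse-over; when there is no connection within …; "… e the odd end is not visible."

  Table 1: The details of the panoramic information.

  | Name | Type | Details |
  | --- | --- | --- |
  | panoId | text | Identifier of the panoramic photo |
  | latlng | geometry point | Latitude and longitude of the point where the panoramic photo was taken |
  | yawdeg | float | Shooting azimuth of the panoramic photo (0°–360°, 0° = north) |
  | link panoId | text | Adjacent panoId |

  Table 2: The details of the icon information in the DB.

  | Name | Type | Details |
  | --- | --- | --- |
  | id | int | Identifier within the DB |
  | content type | int | Kind of facility connecting levels ("0: up stairs", etc.) |
  | latlng | geometry point | Latitude and longitude of the facility connecting levels |
  | height | double | Height at which the icon is displayed |
  | title | text | Name and identifier of the facility connecting levels |
  | comment | text | Name of the facility connecting levels |
  | user id | int | Identifier of the content creator |
  | created at | date | Content creation time |

  Detailed information is meant to be viewed in the panorama view, and global information about the surroundings on a 2D map. Fig. 6 shows the interface of the proposed method designed on this assumption.

  Table 1 lists the panoramic information held by each aboveground and underground panoramic photo, and Table 2 lists the icon information held by the icons displayed at facilities that connect levels. The coordinates of each shooting point are recorded as latitude and longitude and mark the center of the sphere in the implemented panorama view. Icons are also given coordinates (latitude, longitude, and height), so an icon can be drawn by computing its bearing and distance relative to the shooting point, plus its elevation angle. The icon height is set to 0 to match the approximate horizon line of the panoramic photo. For each facility connecting levels, two icon positions are computed and drawn: one matched to the aboveground panoramic photo and one matched to the underground one. Aboveground icons are shown only on aboveground panoramas, and underground icons only on underground panoramas, so icons are drawn at the correct position even when the latitude and longitude of the sphere center shift on a mode switch.

  Fig. 6: The proposed interface.

  [Figure: in-vehicle network and its surroundings. Labels: Smart phone, Other vehicle, Smart home, Security Operation Center / Insurance company / …, Internet, Edge server, Wi-Fi/Bluetooth, 4G/5G, LTE, In-vehicle network, In-vehicle Infotainment, Central Gateway, Router, Domain Controller, Domain Controller/IDS, IDS, Switch, ECU, CAN/CAN FD, Ethernet, OBD-II port, Connected equipments, Sensors, Actuators, Sensing data. Threats: Hardware Trojan, Malware, DoS attack.]

  Information Infrastructure Systems Laboratory (B206 …)

  [Figure labels: LAN, LAN, Wireless LAN. Photo: the FY2015 (Heisei 27) government comprehensive disaster drill, September 1, 2015.]

  Research topics (some labels are truncated on the slide):
  - Network operation …, Software Defin…, traffic analysis …, ultra-high-definition image IP …
  - Security: malware analysis, pairing-based cryptography, GPU implementation of cryptography
  - Ubiquitous networks: Delay Tolerant Network, MANET/VANET, Sensor Network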
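
The paper excerpt on slide 1 says an icon can be drawn once its bearing, distance, and elevation angle relative to the shooting point are known. The following is a minimal sketch of that calculation, not the authors' implementation. It assumes a spherical Earth, the haversine distance, and the standard initial-bearing formula. The function name `icon_direction`, the constant `EARTH_RADIUS_M`, and the way `pano_yaw_deg` is subtracted to get a view-relative bearing are all hypothetical choices made for illustration.

```python
import math

# Assumption of this sketch: spherical Earth with the mean radius in metres.
EARTH_RADIUS_M = 6_371_000.0


def icon_direction(pano_lat, pano_lng, icon_lat, icon_lng,
                   icon_height_m, pano_yaw_deg=0.0):
    """Return (relative_bearing_deg, distance_m, elevation_deg).

    The icon is observed from the panorama shooting point (the sphere center).
    icon_height_m is measured relative to the panorama's horizon line, which
    the excerpt sets to 0 for icons.
    """
    phi1 = math.radians(pano_lat)
    phi2 = math.radians(icon_lat)
    dphi = phi2 - phi1
    dlng = math.radians(icon_lng - pano_lng)

    # Haversine great-circle distance along the ground.
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlng / 2) ** 2)
    distance_m = 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))

    # Initial bearing, clockwise from north (0 deg = north).
    y = math.sin(dlng) * math.cos(phi2)
    x = (math.cos(phi1) * math.sin(phi2)
         - math.sin(phi1) * math.cos(phi2) * math.cos(dlng))
    bearing_deg = math.degrees(math.atan2(y, x)) % 360.0

    # Bearing relative to the panorama's shooting azimuth (hypothetical use
    # of the yawdeg column from Table 1).
    relative_bearing_deg = (bearing_deg - pano_yaw_deg) % 360.0

    # Elevation angle of the icon above the horizon line.
    elevation_deg = math.degrees(math.atan2(icon_height_m, distance_m))
    return relative_bearing_deg, distance_m, elevation_deg
```

With an icon height of 0, as in the excerpt, the elevation angle is 0 and the icon sits on the horizon line. Only the bearing and distance then vary between the aboveground and underground panoramas.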

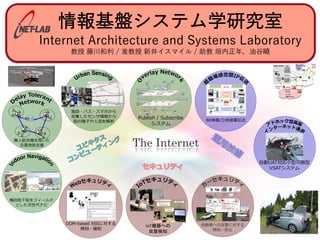

- 2. Staff: Professor Kazutoshi Fujikawa (藤川和利) • Associate Professor Ismail Arai (新井イスマイル) • Assistant Professor Masatoshi Kakiuchi (垣内正年) • Assistant Professor Akira Yutani (油谷曉)

- 3. Mandara Network (曼陀羅ネットワーク) • 4000+ terminals • 2017/6/20

- 4. The Internet

- 6. • GPS (Lat., Lng., Alt., Azimuth) • Vehicle speed • Engine speed • Travel distance • Engine pulse • Direction • Atmospheric pressure • Temperature • Humidity • Amount of fuel • Near future • Accelerometer • Gyroscope • CAN data

- 7. Too many operations for drivers

- 8. Operation states of a bus:

  | State | Description |
  | --- | --- |
  | Maintenance | inspection work before dispatching |
  | Washing car | washing and cleaning the bus |
  | Dispatching | moving towards the first bus stop |
  | Waiting | waiting at the first bus stop |
  | In service | bus is in service |
  | Taking a break | taking a break of less than 4 hours |
  | Out of service | moving to the garage after the bus finishes service |
  | Refueling | refueling at a gas station |
  | At garage | parking at the garage |

- 9. Values shown on the slide: 81%, 93%, 12, 11.

  Takuya Yonezawa, Ismail Arai, Toyokazu Akiyama, Kazutoshi Fujikawa, “Random Forest Based Bus Operation States Classification Using Vehicle Sensor Data,” 2018 International Workshop on Pervasive Flow of Things (PerFot 2018), IEEE PerCom 2018, pp. 819–824, Greece, March, 2018.

- 12. YOLOv3+DeepSort + Random Forest Regressor

  Values shown on the slide: 94%, 96%, 3.

  Hayato Nakashima, Ismail Arai, Kazutoshi Fujikawa, “Passenger Counter Based on Random Forest Regressor Using Drive Recorder and Sensors in Buses,” 2019 International Workshop on Pervasive Flow of Things (PerFot 2019), IEEE PerCom 2019, pp. 561–566, Kyoto, March, 2019.

  Figure captions from the paper excerpt shown on the slide:
  - Fig. 2: The sample video of drive recorder
  - Fig. 3: The sample video of drive recorder of the last paper
  - Fig. 4: Example of execution with Background Subtraction
  - Fig. 5: Example of execution with OpenPose
  - Fig. 6: The sample frame with YOLOv3+Deep SORT

  Paper excerpt:

  … video has vibrations from the bus engine, and the angle of view is also different (Fig. 4). In the last paper, we counted passengers using their sideways movement, but in the video of this paper there is little sideways movement and a lot of movement in depth. Occlusion tends to occur with movement in depth, so it is difficult to detect each passenger correctly with the background subtraction method. We also tried detecting humans using OpenPose (Fig. 5). However, since OpenPose estimates the parts of a body, counting precision was poor: a person is not detected as a human when the body parts cannot be estimated well. Especially during boarding, the camera position means it often captures the passengers' backs, so the detection accuracy is not high.

  1) Detecting the human using YOLOv3: We use YOLOv3, a method for object detection. The method treats object detection as a regression problem to spatially separated bounding boxes and associated class probabilities. It uses a single neural network, which predicts bounding boxes and class probabilities directly from full images in one evaluation. Because detection is solved as a simple regression problem, processing is fast. Other algorithms detect candidate object regions using techniques such as "sliding window" and "Region Proposal", so they often erroneously detect the background as an object. In YOLOv3, such erroneous detections are half those of "Fast R-CNN" [8] [9]. For each detected object, it is possible to obtain the size and position of the rectangular outline, the type of the object (person, car, food, etc.), and the score of the judged object. The score indicates the confidence of the judgment in the range 0 to 1. For these reasons, we used YOLOv3 with a pre-trained model to detect humans. When the score for a human is over 0.2, the method detects a human, shown as the blue box in Fig. 6.

  2) Tracking the human using Deep SORT: The proposed method tracks humans detected by YOLOv3 with Deep SORT.
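
The excerpt on slide 12 describes keeping a detection as a human when its score is over 0.2, then passing it to the tracker. The sketch below illustrates only that filtering step. It does not run YOLOv3 or Deep SORT. The `Detection` tuple, the `"person"` label string, and the function name `keep_humans` are hypothetical. Only the 0.2 threshold comes from the excerpt.

```python
from typing import List, NamedTuple, Tuple


class Detection(NamedTuple):
    """Hypothetical container for one detector output (not a YOLOv3 API type)."""
    label: str                               # object type, e.g. "person", "car"
    score: float                             # detector score in the range 0 to 1
    box: Tuple[float, float, float, float]   # (x, y, width, height) of the outline


# Threshold quoted in the excerpt: a human is detected when the score is over 0.2.
PERSON_SCORE_THRESHOLD = 0.2


def keep_humans(detections: List[Detection],
                threshold: float = PERSON_SCORE_THRESHOLD) -> List[Detection]:
    """Keep only 'person' detections whose score is strictly above the threshold."""
    return [d for d in detections
            if d.label == "person" and d.score > threshold]
```

In a full pipeline, the boxes returned by `keep_humans` would be the input to the tracking stage. A low threshold such as 0.2 favors recall, which fits a scene where passengers are often seen from behind.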

- 13. [Figure panels: 1st day, 6th day]

- 15. Block fence detection: unwanted fragility against earthquakes. Treepedia@Senseable City Lab, MIT

- 19. A platform for fixed-point observation! (Ex. atmospheric pressure sensed from buses on the move within a 50 m square area)

  [Figure labels: Temporal Coverage, Frequency, Spatial Coverage, Density; Trash truck, Bus.]

  [Plot: atmospheric pressure [hPa], 1010 to 1015, against time of day, 8:00 to 9:00.]
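
Slide 19 gives the example of atmospheric pressure sensed from moving buses within a 50 m square. One way to picture this is to bin each mobile sample into a 50 m grid cell and read every cell as a fixed observation point. This is a minimal sketch under stated assumptions, not the lab's platform. The equirectangular approximation, the constant `METERS_PER_DEG_LAT`, the function names, and the sample tuple layout are all hypothetical.

```python
import math
from collections import defaultdict

CELL_SIZE_M = 50.0              # the 50 m square mentioned on the slide
METERS_PER_DEG_LAT = 111_320.0  # rough metres per degree of latitude (assumption)


def cell_of(lat, lng, origin_lat, origin_lng, cell_size_m=CELL_SIZE_M):
    """Map a position to an (east, north) grid-cell index relative to an origin.

    Uses a local equirectangular approximation, adequate for a city-scale area.
    """
    north_m = (lat - origin_lat) * METERS_PER_DEG_LAT
    east_m = ((lng - origin_lng) * METERS_PER_DEG_LAT
              * math.cos(math.radians(origin_lat)))
    return (math.floor(east_m / cell_size_m), math.floor(north_m / cell_size_m))


def fixed_point_series(samples, origin_lat, origin_lng):
    """Group mobile samples into per-cell time series.

    samples: iterable of (time_hhmm, lat, lng, pressure_hpa), where time_hhmm
    is a zero-padded "HH:MM" string so that sorting is chronological.
    Returns {cell: [(time_hhmm, pressure_hpa), ...]} sorted by time.
    """
    series = defaultdict(list)
    for time_hhmm, lat, lng, pressure_hpa in samples:
        cell = cell_of(lat, lng, origin_lat, origin_lng)
        series[cell].append((time_hhmm, pressure_hpa))
    return {cell: sorted(values) for cell, values in series.items()}
```

Each cell's list then behaves like readings from a stationary sensor. Its temporal density depends on how often vehicles pass through that cell.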

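- 21. ROC Area: 92.5% (CAN ID 305)

  [Figure: LSTM cells (Cell1, Cell2, Cell3, …) take packets from the CAN bus and output a predicted packet. The MSE between the actual packet and the predicted packet is calculated and compared with a threshold TH: > TH is Anomaly, < TH is Benign.]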
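
The figure on slide 21 compares the MSE between the actual and the predicted CAN packet with a threshold TH. The sketch below covers only that decision step. The LSTM predictor is not implemented, and the predicted packet is simply passed in. The function names and the choice to treat an MSE exactly equal to TH as benign are assumptions, since the slide shows only > TH and < TH.

```python
from typing import Sequence


def mean_squared_error(actual: Sequence[float], predicted: Sequence[float]) -> float:
    """Mean squared error between two equal-length packet representations."""
    if len(actual) != len(predicted):
        raise ValueError("actual and predicted must have the same length")
    if not actual:
        raise ValueError("packets must not be empty")
    return sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual)


def classify_packet(actual: Sequence[float], predicted: Sequence[float],
                    threshold: float) -> str:
    """Return "Anomaly" if the prediction error exceeds the threshold TH.

    An error exactly equal to the threshold is treated as "Benign" here;
    that tie-breaking rule is an assumption of this sketch.
    """
    error = mean_squared_error(actual, predicted)
    return "Anomaly" if error > threshold else "Benign"
```

A packet the model predicts well yields a small error and is labeled benign. An injected packet that deviates from the learned pattern yields a large error and is flagged.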

- 23. [Figure labels: Attacker, IDS, CAN bus]
