HOBBIT Link Discovery Benchmarks at OM2017 ISWC 2017


Poster presented at the OAEI Ontology Matching (OM) workshop at ISWC 2017 (HOBBIT Link Discovery Task). This work was supported by grants from the EU H2020 Framework Programme provided for the project HOBBIT (GA no. 688227).

Denunciar

Compartilhar

Denunciar

Compartilhar

Baixar para ler offline

Recomendados

An RDF Dataset Generator for the Social Network Benchmark with Real-World Coh...

An RDF Dataset Generator for the Social Network Benchmark with Real-World Coh...Holistic Benchmarking of Big Linked Data

Recomendados

An RDF Dataset Generator for the Social Network Benchmark with Real-World Coh...

An RDF Dataset Generator for the Social Network Benchmark with Real-World Coh...Holistic Benchmarking of Big Linked Data

Introducing the HOBBIT platform into the Ontology Alignment Evaluation Campaign

Introducing the HOBBIT platform into the Ontology Alignment Evaluation CampaignHolistic Benchmarking of Big Linked Data

Cloud Validation Suite Presentation for Webinar: Cloud and Earth Observation ...

Cloud Validation Suite Presentation for Webinar: Cloud and Earth Observation ...OCRE | Open Clouds for Research Environments

EARL: Joint Entity and Relation Linking for Question Answering over Knowledge...

EARL: Joint Entity and Relation Linking for Question Answering over Knowledge...Holistic Benchmarking of Big Linked Data

Benchmarking Big Linked Data: The case of the HOBBIT Project

Benchmarking Big Linked Data: The case of the HOBBIT ProjectHolistic Benchmarking of Big Linked Data

Mais conteúdo relacionado

Semelhante a HOBBIT Link Discovery Benchmarks at OM2017 ISWC 2017

Introducing the HOBBIT platform into the Ontology Alignment Evaluation Campaign

Introducing the HOBBIT platform into the Ontology Alignment Evaluation CampaignHolistic Benchmarking of Big Linked Data

Cloud Validation Suite Presentation for Webinar: Cloud and Earth Observation ...

Cloud Validation Suite Presentation for Webinar: Cloud and Earth Observation ...OCRE | Open Clouds for Research Environments

Semelhante a HOBBIT Link Discovery Benchmarks at OM2017 ISWC 2017 (20)

Benchmarking of distributed linked data streaming systems

Benchmarking of distributed linked data streaming systems

Introducing the HOBBIT platform into the Ontology Alignment Evaluation Campaign

Introducing the HOBBIT platform into the Ontology Alignment Evaluation Campaign

Advanced Automated Analytics Using OSS Tools, GA Tech FDA Conference 2016

Advanced Automated Analytics Using OSS Tools, GA Tech FDA Conference 2016

Cloud Validation Suite Presentation for Webinar: Cloud and Earth Observation ...

Cloud Validation Suite Presentation for Webinar: Cloud and Earth Observation ...

Melbourne materials institute miicrc rapid productisation

Melbourne materials institute miicrc rapid productisation

Tool-Driven Technology Transfer in Software Engineering

Tool-Driven Technology Transfer in Software Engineering

Mais de Holistic Benchmarking of Big Linked Data

EARL: Joint Entity and Relation Linking for Question Answering over Knowledge...

EARL: Joint Entity and Relation Linking for Question Answering over Knowledge...Holistic Benchmarking of Big Linked Data

Benchmarking Big Linked Data: The case of the HOBBIT Project

Benchmarking Big Linked Data: The case of the HOBBIT ProjectHolistic Benchmarking of Big Linked Data

Assessing Linked Data Versioning Systems: The Semantic Publishing Versioning ...

Assessing Linked Data Versioning Systems: The Semantic Publishing Versioning ...Holistic Benchmarking of Big Linked Data

SQCFramework: SPARQL Query Containment Benchmarks Generation Framework

SQCFramework: SPARQL Query Containment Benchmarks Generation FrameworkHolistic Benchmarking of Big Linked Data

LargeRDFBench: A billion triples benchmark for SPARQL endpoint federation

LargeRDFBench: A billion triples benchmark for SPARQL endpoint federationHolistic Benchmarking of Big Linked Data

4th Natural Language Interface over the Web of Data (NLIWoD) workshop and QAL...

4th Natural Language Interface over the Web of Data (NLIWoD) workshop and QAL...Holistic Benchmarking of Big Linked Data

Scalable Link Discovery for Modern Data-Driven Applications (poster)

Scalable Link Discovery for Modern Data-Driven Applications (poster)Holistic Benchmarking of Big Linked Data

An Evaluation of Models for Runtime Approximation in Link Discovery

An Evaluation of Models for Runtime Approximation in Link DiscoveryHolistic Benchmarking of Big Linked Data

Extending LargeRDFBench for Multi-Source Data at Scale for SPARQL Endpoint F...

Extending LargeRDFBench for Multi-Source Data at Scale for SPARQL Endpoint F...Holistic Benchmarking of Big Linked Data

SPgen: A Benchmark Generator for Spatial Link Discovery Tools

SPgen: A Benchmark Generator for Spatial Link Discovery ToolsHolistic Benchmarking of Big Linked Data

Benchmarking Link Discovery Systems for Geo-Spatial Data - BLINK ISWC2017.

Benchmarking Link Discovery Systems for Geo-Spatial Data - BLINK ISWC2017. Holistic Benchmarking of Big Linked Data

Instance Matching Benchmarks in the ERA of Linked Data - ISWC2017

Instance Matching Benchmarks in the ERA of Linked Data - ISWC2017Holistic Benchmarking of Big Linked Data

Mais de Holistic Benchmarking of Big Linked Data (20)

EARL: Joint Entity and Relation Linking for Question Answering over Knowledge...

EARL: Joint Entity and Relation Linking for Question Answering over Knowledge...

Benchmarking Big Linked Data: The case of the HOBBIT Project

Benchmarking Big Linked Data: The case of the HOBBIT Project

Assessing Linked Data Versioning Systems: The Semantic Publishing Versioning ...

Assessing Linked Data Versioning Systems: The Semantic Publishing Versioning ...

SQCFramework: SPARQL Query Containment Benchmarks Generation Framework

SQCFramework: SPARQL Query Containment Benchmarks Generation Framework

LargeRDFBench: A billion triples benchmark for SPARQL endpoint federation

LargeRDFBench: A billion triples benchmark for SPARQL endpoint federation

4th Natural Language Interface over the Web of Data (NLIWoD) workshop and QAL...

4th Natural Language Interface over the Web of Data (NLIWoD) workshop and QAL...

Scalable Link Discovery for Modern Data-Driven Applications (poster)

Scalable Link Discovery for Modern Data-Driven Applications (poster)

An Evaluation of Models for Runtime Approximation in Link Discovery

An Evaluation of Models for Runtime Approximation in Link Discovery

Scalable Link Discovery for Modern Data-Driven Applications

Scalable Link Discovery for Modern Data-Driven Applications

Extending LargeRDFBench for Multi-Source Data at Scale for SPARQL Endpoint F...

Extending LargeRDFBench for Multi-Source Data at Scale for SPARQL Endpoint F...

SPgen: A Benchmark Generator for Spatial Link Discovery Tools

SPgen: A Benchmark Generator for Spatial Link Discovery Tools

Benchmarking Link Discovery Systems for Geo-Spatial Data - BLINK ISWC2017.

Benchmarking Link Discovery Systems for Geo-Spatial Data - BLINK ISWC2017.

Instance Matching Benchmarks in the ERA of Linked Data - ISWC2017

Instance Matching Benchmarks in the ERA of Linked Data - ISWC2017

Último

Último (20)

Pests of mustard_Identification_Management_Dr.UPR.pdf

Pests of mustard_Identification_Management_Dr.UPR.pdf

Sector 62, Noida Call girls :8448380779 Model Escorts | 100% verified

Sector 62, Noida Call girls :8448380779 Model Escorts | 100% verified

Justdial Call Girls In Indirapuram, Ghaziabad, 8800357707 Escorts Service

Justdial Call Girls In Indirapuram, Ghaziabad, 8800357707 Escorts Service

Asymmetry in the atmosphere of the ultra-hot Jupiter WASP-76 b

Asymmetry in the atmosphere of the ultra-hot Jupiter WASP-76 b

Connaught Place, Delhi Call girls :8448380779 Model Escorts | 100% verified

Connaught Place, Delhi Call girls :8448380779 Model Escorts | 100% verified

The Mariana Trench remarkable geological features on Earth.pptx

The Mariana Trench remarkable geological features on Earth.pptx

Thyroid Physiology_Dr.E. Muralinath_ Associate Professor

Thyroid Physiology_Dr.E. Muralinath_ Associate Professor

SAMASTIPUR CALL GIRL 7857803690 LOW PRICE ESCORT SERVICE

SAMASTIPUR CALL GIRL 7857803690 LOW PRICE ESCORT SERVICE

FAIRSpectra - Enabling the FAIRification of Analytical Science

FAIRSpectra - Enabling the FAIRification of Analytical Science

Module for Grade 9 for Asynchronous/Distance learning

Module for Grade 9 for Asynchronous/Distance learning

300003-World Science Day For Peace And Development.pptx

300003-World Science Day For Peace And Development.pptx

HOBBIT Link Discovery Benchmarks at OM2017 ISWC 2017

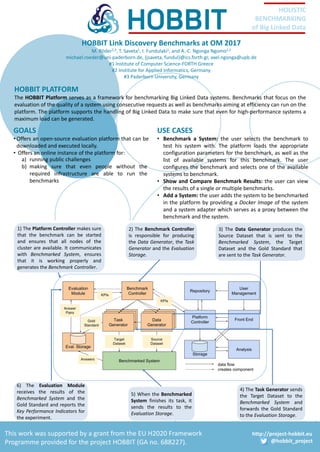

http://project-hobbit.eu @hobbit_project
HOLISTIC BENCHMARKING of Big Linked Data

HOBBIT Link Discovery Benchmarks at OM 2017

M. Röder(2,3), T. Saveta(1), I. Fundulaki(1), and A.-C. Ngonga Ngomo(2,3)
michael.roeder@uni-paderborn.de, {jsaveta, fundul}@ics.forth.gr, axel.ngonga@upb.de

(1) Institute of Computer Science, FORTH, Greece
(2) Institute for Applied Informatics, Germany
(3) Paderborn University, Germany

This work was supported by a grant from the EU H2020 Framework Programme provided for the project HOBBIT (GA no. 688227).

HOBBIT PLATFORM

The HOBBIT Platform is a framework for benchmarking Big Linked Data systems. It can run both benchmarks that evaluate the quality of a system's answers to consecutive requests and benchmarks that measure efficiency. The platform is built to handle Big Linked Data, so that it can generate maximum load even for high-performance systems.

USE CASES

• Benchmark a System: the user selects the benchmark to test their system with. The platform loads the benchmark's configuration parameters and the list of systems available for it. The user configures the benchmark and selects one of the available systems.
• Show and Compare Benchmark Results: the user can view the results of one or more benchmarks.
• Add a System: the user adds a system to the platform by providing a Docker image of the system and a system adapter, which acts as a proxy between the benchmark and the system.

A link discovery benchmark runs through the following steps:

1) The Platform Controller checks that the benchmark can be started and that all nodes of the cluster are available. It communicates with the Benchmarked System, ensures that it is working properly, and creates the Benchmark Controller.
2) The Benchmark Controller creates the Data Generator, the Task Generator and the Evaluation Storage.
3) The Data Generator produces the Source Dataset, which is sent to the Benchmarked System, and the Target Dataset and the Gold Standard, which are sent to the Task Generator.
4) The Task Generator sends the Target Dataset to the Benchmarked System and forwards the Gold Standard to the Evaluation Storage.
5) When the Benchmarked System finishes its task, it sends its results to the Evaluation Storage.
6) The Evaluation Module receives the results of the Benchmarked System together with the Gold Standard and reports the Key Performance Indicators (KPIs) of the experiment.

GOALS

• Offer an open-source evaluation platform that can be downloaded and executed locally.
• Offer an online instance of the platform for:
  a) running public challenges;
  b) enabling people without the required infrastructure to run the benchmarks.
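To make the six-step workflow concrete, here is a minimal in-process sketch in Python. It is not the HOBBIT implementation: on the real platform each component runs in its own Docker container and components exchange messages over the platform's message bus, whereas here they are plain objects calling each other. All class and method names, the toy datasets, the label-equality "linking" system, and the choice of precision, recall, F-measure and runtime as KPIs are assumptions made for illustration.

```python
import time
from dataclasses import dataclass, field

Link = tuple[str, str]  # hypothetical link representation: (source URI, target URI)


@dataclass
class EvaluationStorage:
    """Holds the Gold Standard and the system's results for one experiment."""
    expected: set[Link] = field(default_factory=set)
    actual: set[Link] = field(default_factory=set)
    runtime_s: float = 0.0


class DataGenerator:
    """Step 3: produces toy Source/Target datasets (URI -> label) and a Gold Standard."""

    def generate(self):
        source = {"s:1": "Paderborn", "s:2": "Heraklion",
                  "s:3": "Berlin", "s:4": "Springfield"}
        target = {"t:a": "paderborn", "t:b": "HERAKLION",
                  "t:c": "Berlin-Mitte", "t:d": "springfield"}
        gold = {("s:1", "t:a"), ("s:2", "t:b"), ("s:3", "t:c")}
        return source, target, gold


class BenchmarkedSystem:
    """Toy link discovery system: links resources whose lower-cased labels are equal."""

    def __init__(self):
        self.source = {}

    def load_source(self, source):
        self.source = dict(source)

    def link(self, target):
        return {(s, t) for s, s_label in self.source.items()
                for t, t_label in target.items()
                if s_label.lower() == t_label.lower()}


class TaskGenerator:
    def __init__(self, system, storage):
        self.system = system
        self.storage = storage

    def run(self, target, gold):
        # Step 4: Gold Standard -> Evaluation Storage, Target Dataset -> system.
        self.storage.expected = set(gold)
        start = time.perf_counter()
        links = self.system.link(target)
        self.storage.runtime_s = time.perf_counter() - start
        # Step 5: the system's results end up in the Evaluation Storage.
        self.storage.actual = links


def evaluate(storage):
    """Step 6: compute (assumed) KPIs from the results and the Gold Standard."""
    tp = len(storage.expected & storage.actual)
    precision = tp / len(storage.actual) if storage.actual else 0.0
    recall = tp / len(storage.expected) if storage.expected else 0.0
    f_measure = (2 * precision * recall / (precision + recall)
                 if precision + recall else 0.0)
    return {"precision": precision, "recall": recall,
            "f_measure": f_measure, "runtime_s": storage.runtime_s}


def run_benchmark():
    # Steps 1-2 (Platform and Benchmark Controller): create and wire the components.
    system = BenchmarkedSystem()
    storage = EvaluationStorage()
    source, target, gold = DataGenerator().generate()
    system.load_source(source)  # Step 3: the Source Dataset goes to the system.
    TaskGenerator(system, storage).run(target, gold)
    return evaluate(storage)


if __name__ == "__main__":
    print(run_benchmark())
```

With these toy inputs the system finds two of the three gold links and adds one spurious link (`s:4`/`t:d`), so precision, recall and F-measure all come out as 2/3. Keeping the Gold Standard away from the Benchmarked System and only joining it with the results in the Evaluation Storage mirrors the separation described in steps 3 to 6.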
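The "Add a System" use case describes the system adapter as a proxy between the benchmark and the system. The sketch below illustrates that idea with a hypothetical Python interface: the benchmark side only knows `receive_data` and `receive_task`, and the adapter translates these calls into the native input/output format of a stand-in third-party tool (`LegacyLinker`, which reads tab-separated `uri<TAB>label` lines). None of these names come from the HOBBIT platform, whose actual adapter API differs; the point is only the proxy pattern.

```python
from abc import ABC, abstractmethod


class SystemAdapter(ABC):
    """Hypothetical benchmark-facing interface that every adapter implements."""

    @abstractmethod
    def receive_data(self, source: dict[str, str]) -> None:
        """Receive the Source Dataset (URI -> label)."""

    @abstractmethod
    def receive_task(self, task_id: str, target: dict[str, str]):
        """Receive the Target Dataset and return (task_id, set of links)."""


class LegacyLinker:
    """Stand-in for a third-party tool with its own line-based format."""

    def run(self, records_a, records_b):
        # Input: "uri<TAB>label" lines; output: "uri_a uri_b" lines.
        index = {}
        for line in records_a:
            uri, label = line.split("\t", 1)
            index.setdefault(label.strip().lower(), []).append(uri)
        out = []
        for line in records_b:
            uri, label = line.split("\t", 1)
            for match in index.get(label.strip().lower(), []):
                out.append(f"{match} {uri}")
        return out


class LegacyLinkerAdapter(SystemAdapter):
    """Proxy: converts benchmark messages to the tool's format and back."""

    def __init__(self, tool: LegacyLinker):
        self.tool = tool
        self.source_lines: list[str] = []

    def receive_data(self, source):
        self.source_lines = [f"{uri}\t{label}" for uri, label in source.items()]

    def receive_task(self, task_id, target):
        target_lines = [f"{uri}\t{label}" for uri, label in target.items()]
        raw = self.tool.run(self.source_lines, target_lines)
        # Results are tagged with the task id so they can be matched
        # with the corresponding Gold Standard during evaluation.
        links = {tuple(line.split(" ", 1)) for line in raw}
        return task_id, links
```

For example, after `adapter.receive_data({"s:1": "Paderborn"})`, the call `adapter.receive_task("task-0", {"t:a": "paderborn"})` returns `("task-0", {("s:1", "t:a")})`. Because only the adapter knows the tool's native format, the same benchmark can drive very different systems without changes.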