Unit 3 – AIML.pptx

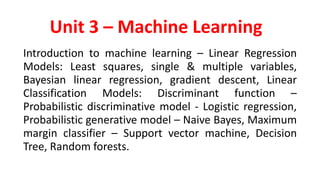

- 1. Unit 3 – Machine Learning Introduction to machine learning – Linear Regression Models: Least squares, single & multiple variables, Bayesian linear regression, gradient descent, Linear Classification Models: Discriminant function – Probabilistic discriminative model - Logistic regression, Probabilistic generative model – Naive Bayes, Maximum margin classifier – Support vector machine, Decision Tree, Random forests.

- 2. Machine Learning • Learning is the process of gaining new knowledge. • Machine learning is a subfield of artificial intelligence that involves the development of algorithms and statistical models that enable computers to improve their performance in tasks through experience.

- 3. Definition of Machine learning • A computer program is said to learn from experience E with respect to some class of tasks T and performance measure P, if its performance at tasks T, as measured by P , improves with experience E. • The goal of machine learning is to build computer systems that can adapt and learn from experience.

- 4. How machines learn ? • Typically, machine learning follows three phases: 1. Training 2. Validation 3. Application

- 5. Applications of Machine learning

- 6. Types of Machine learning • Supervised Learning • Unsupervised Learning and • Reinforcement Learning

- 7. Supervised learning • Supervised learning is a type of machine learning in which machines are trained using well-labelled training data and, on the basis of that data, predict the output. • Labelled data means that each input is already tagged with the correct output. • In supervised learning, the training data provided to the machine works as the supervisor that teaches it to predict the output correctly.

- 8. • The aim of a supervised learning algorithm is to find a mapping function that maps the input variable (x) to the output variable (y). • In the real world, supervised learning can be used for risk assessment, image classification, fraud detection, spam filtering, etc. How Supervised Learning Works? • In supervised learning, models are trained using a labelled dataset, where the model learns about each type of data. Once the training process is completed, the model is tested on test data (a held-out portion of the labelled data that was not used for training), and then it predicts the output.

- 9. The working of supervised learning can be understood from the example below. [Figure: supervised learning workflow showing training data, learning algorithm, model, test data, and accuracy]

- 10. Steps Involved in Supervised Learning: • First, determine the type of training dataset. • Collect the labelled training data. • Split the collected dataset into training, validation, and test datasets. • Determine the input features of the training dataset, which should carry enough information for the model to predict the output accurately. • Determine a suitable algorithm for the model. • Execute the algorithm on the training dataset. • Evaluate the accuracy of the model on the test set. If the model predicts the correct outputs, the model is accurate.
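
The split and evaluate steps above can be sketched in plain Python. This is only an illustration under stated assumptions: the 70/15/15 split, the toy dataset, and the helper names `split_dataset`, `learn_threshold`, and `accuracy` are hypothetical and do not come from the slides.

```python
import random

def split_dataset(samples, train_frac=0.7, val_frac=0.15, seed=0):
    """Shuffle labelled samples and split them into train/validation/test lists."""
    shuffled = list(samples)
    random.Random(seed).shuffle(shuffled)  # fixed seed keeps the split repeatable
    n_train = int(len(shuffled) * train_frac)
    n_val = int(len(shuffled) * val_frac)
    train = shuffled[:n_train]
    val = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]      # everything left over is held out
    return train, val, test

def learn_threshold(train):
    """Toy 'learning algorithm': put a cut halfway between the two classes."""
    largest_negative = max(x for x, y in train if y == 0)
    smallest_positive = min(x for x, y in train if y == 1)
    return (largest_negative + smallest_positive) / 2

def accuracy(threshold, labelled_samples):
    """Fraction of samples whose predicted label matches the true label."""
    correct = sum(1 for x, y in labelled_samples if int(x >= threshold) == y)
    return correct / len(labelled_samples)

# Hypothetical labelled data: the label is 1 when the input is at least 50.
data = [(x, int(x >= 50)) for x in range(100)]
train, val, test = split_dataset(data)
threshold = learn_threshold(train)         # training uses only the training set
test_accuracy = accuracy(threshold, test)  # evaluation uses only unseen data
print(f"threshold={threshold}, test accuracy={test_accuracy:.2f}")
```

The test set is never shown to `learn_threshold`, which is what makes the measured accuracy a fair estimate.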

- 11. Unsupervised Learning • Unsupervised learning is a type of machine learning in which models are trained using an unlabeled dataset and are allowed to act on that data without any supervision. • Unsupervised learning is helpful for finding useful insights from the data. • Unsupervised learning is much like the way a human learns to think from their own experiences, which makes it closer to real AI.

- 12. • Unlabeled data is given as input. The model first interprets the raw data to find hidden patterns, then a suitable algorithm is applied to process the data and generate the output.

- 13. Differences between Supervised and Unsupervised learning

  | Supervised Learning | Unsupervised Learning |
  | --- | --- |
  | Trained using labeled data. | Trained using unlabeled data. |
  | Takes direct feedback to check whether it is predicting the correct output. | Does not take any feedback. |
  | Predicts the output. | Finds the hidden patterns in data. |
  | Input data is provided to the model along with the output. | Only input data is provided to the model. |

- 14. Semi-Supervised Learning • Semi-Supervised learning is a type of Machine Learning algorithm that represents the intermediate ground between Supervised and Unsupervised learning algorithms. • It uses the combination of labelled and unlabeled datasets during the training period.

- 15. • The basic disadvantage of supervised learning is that it requires hand-labelling by ML specialists or data scientists, which is costly. • Unsupervised learning has a limited range of applications. • To overcome these drawbacks of supervised and unsupervised learning algorithms, the concept of semi-supervised learning was introduced.

- 16. • Semi-supervised learning models are becoming more popular in the industries. • Some familiar applications are: – Speech Analysis – Web content classification – Protein sequence classification – Text document classifier

- 17. Reinforcement Learning • In supervised learning the learner gets immediate feedback, and in unsupervised learning it gets no feedback. • In reinforcement learning, the learner gets delayed scalar feedback. • Reinforcement learning is a type of machine learning method where an intelligent agent (computer program) interacts with the environment and learns to act within that environment.

- 18. • For each good action, the agent gets positive feedback, and for each bad action, the agent gets negative feedback or penalty.

- 19. Elements of Reinforcement Learning • There are four main elements of Reinforcement Learning, which are given below: 1. Policy 2. Reward Signal 3. Value Function 4. Model of the environment

- 20. Regression • Regression analysis is a statistical method to model the relationship between a dependent (target) variable and one or more independent (predictor) variables. • It predicts continuous/real values such as house prices, market trends, weather, and oil and gas prices. • It is mainly used for prediction, forecasting, time series modelling, and determining the cause-effect relationship between variables.

- 21. Types of Regression • Two basic types of regression: • Simple linear and multiple linear • Linear Regression: • Linear regression is a statistical regression method used for predictive analysis. • It is one of the simplest regression algorithms and shows the relationship between continuous variables.

- 22. • Linear regression shows the linear relationship between the independent variable (X-axis) and the dependent variable (Y-axis), hence called linear regression. • If there is only one input variable (x), then such linear regression is called simple linear regression. And if there is more than one input variable, then such linear regression is called multiple linear regression. • The relationship between variables in the linear regression model can be explained using the below image.

- 23. • The regression line gives the average relationship between two variables X and Y.

- 24. • Regression line of X on Y: The best estimate for the value of X for any specific values of Y is X=a+bY • where a = X intercept, b= Slope of the line, X=dependent variable and Y=independent variable. • Regression line of Y on X: The best estimate for the value of Y for any specific values of X is Y=a+bX

- 25. Least Squares • The least squares method is the process of finding the best-fitting line for a set of data points by minimizing the sum of the squares of the offsets of the points from the line. • It is a crucial statistical method used to find a regression line, or best-fit line, for a given pattern. • This method is described by an equation with specific parameters.

- 26. The least squares method minimizes the sum of squared residuals, that is, the offsets of each data point from the line. In common practice the vertical offsets are minimized, for line, polynomial, surface, and hyperplane fits alike; perpendicular offsets are used less often.
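
As a minimal sketch of least squares for the line Y = a + bX, the closed-form solution for vertical offsets can be computed directly. The function names and the toy data are hypothetical and chosen only for illustration.

```python
def fit_line(xs, ys):
    """Least-squares estimates of intercept a and slope b for Y = a + bX."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Slope: covariance of X and Y divided by the variance of X.
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    b = sxy / sxx
    a = mean_y - b * mean_x  # the fitted line passes through the mean point
    return a, b

def sum_squared_residuals(a, b, xs, ys):
    """Sum of squared vertical offsets between the data and the line."""
    return sum((y - (a + b * x)) ** 2 for x, y in zip(xs, ys))

# Hypothetical data generated from Y = 1 + 2X with no noise.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [3.0, 5.0, 7.0, 9.0]
a, b = fit_line(xs, ys)
print(a, b, sum_squared_residuals(a, b, xs, ys))
```

Any other choice of a and b gives a larger sum of squared residuals on the same data, which is what "best fit" means here.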

- 27. Multiple Linear Regression • Multiple Linear Regression is one of the important regression algorithms which models the linear relationship between a single dependent continuous variable and more than one independent variable. • In Multiple Linear Regression, the target variable(Y) is a linear combination of multiple predictor variables x1, x2, x3, ...,xk.

- 28. • Let k represent the number of variables denoted by x1, x2, x3, ……, xk. • For this method, we assume that we have k independent variables x1, . . . , xk that we can set, then they probabilistically determine an outcome Y. • Furthermore, we assume that Y is linearly dependent on the factors according to • Y = β0 + β1x1 + β2x2 + · · · + βkxk + ε
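
The model Y = β0 + β1x1 + ... + βkxk can be fitted by least squares through the normal equations. The sketch below is one possible way to do that in plain Python, not necessarily the method intended by the slides; the helper names and the noise-free toy data (ε = 0) are assumptions.

```python
def solve_linear_system(A, b):
    """Solve A x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(A)
    # Augmented matrix [A | b] so that row operations act on both sides.
    M = [list(row) + [rhs] for row, rhs in zip(A, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        pivot_value = M[col][col]
        M[col] = [v / pivot_value for v in M[col]]
        for r in range(n):
            if r != col:
                factor = M[r][col]
                M[r] = [vr - factor * vc for vr, vc in zip(M[r], M[col])]
    return [M[i][n] for i in range(n)]

def fit_multiple_regression(X, y):
    """Return [beta0, beta1, ..., betak] solving (X^T X) beta = X^T y."""
    design = [[1.0] + list(row) for row in X]  # leading 1 models the intercept
    k = len(design[0])
    xtx = [[sum(r[i] * r[j] for r in design) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * t for r, t in zip(design, y)) for i in range(k)]
    return solve_linear_system(xtx, xty)

def predict(betas, x):
    """Evaluate beta0 + beta1*x1 + ... + betak*xk for one input row."""
    return betas[0] + sum(b * v for b, v in zip(betas[1:], x))

# Hypothetical data generated from Y = 1 + 2*x1 + 3*x2 with no noise term.
X = [[1.0, 2.0], [2.0, 1.0], [3.0, 4.0], [4.0, 3.0], [5.0, 5.0]]
y = [9.0, 8.0, 19.0, 18.0, 26.0]
betas = fit_multiple_regression(X, y)
print(betas, predict(betas, [6.0, 2.0]))
```

With real data the noise term ε means the recovered coefficients only approximate the underlying ones.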

- 29. Difference Between Linear and Multiple Regression • A simple linear regression can accurately capture the relationship between two variables when that relationship is simple. • Multiple linear regression, on the other hand, can capture more complex relationships in which the outcome depends on several predictors. • When predicting the outcome of a complex process, it is best to use multiple linear regression instead of simple linear regression.

- 30. Bayesian Linear Regression • Bayesian regression can be very useful when we have insufficient data in the dataset or the data is poorly distributed. • The output of a Bayesian regression model is obtained from a probability distribution, whereas in regular regression techniques the output is obtained from a single point estimate of each parameter.

- 31. • The aim of Bayesian Linear Regression is not to find the model parameters, but rather to find the ‘posterior‘ distribution for the model parameters. • Not just the output y, but the model parameters are also assumed to come from a distribution.
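
To make the idea of a posterior over parameters concrete, here is a minimal sketch for the simplest case: a single weight w in y = w·x + noise, a zero-mean Gaussian prior on w, and Gaussian noise with known precision. These modelling choices, the function name, and the data are assumptions made for illustration only.

```python
def posterior_over_weight(xs, ys, prior_precision=1.0, noise_precision=1.0):
    """Gaussian posterior (mean, variance) of w for the model y = w*x + noise.

    Assumes prior w ~ N(0, 1/prior_precision) and noise ~ N(0, 1/noise_precision).
    """
    # Each observation adds noise_precision * x^2 to the precision of w.
    precision = prior_precision + noise_precision * sum(x * x for x in xs)
    mean = noise_precision * sum(x * y for x, y in zip(xs, ys)) / precision
    return mean, 1.0 / precision

# Hypothetical observations lying exactly on y = 2x.
mean_small, var_small = posterior_over_weight([1.0, 2.0, 3.0], [2.0, 4.0, 6.0])
mean_large, var_large = posterior_over_weight(
    [1.0, 2.0, 3.0, 4.0], [2.0, 4.0, 6.0, 8.0]
)
print(mean_small, var_small)
print(mean_large, var_large)
```

The result is a distribution rather than a single number: the posterior mean is pulled slightly toward the prior mean of 0, and the posterior variance shrinks as more data arrives.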

- 32. Advantages of Bayesian Regression • Very effective when the size of the dataset is small. • Particularly well suited for online learning. • The Bayesian approach is tried and tested and is mathematically very robust.

- 33. Disadvantages of Bayesian Regression • The inference of the model can be time-consuming. • If there is a large amount of data available for our dataset, the Bayesian approach is not worth

- 34. Gradient Descent • Gradient Descent is one of the most commonly used optimization algorithms to train machine learning models by means of minimizing errors between actual and expected results. • Further, gradient descent is also used to train Neural Networks. • The main objective of using a gradient descent algorithm is to minimize the cost function using iteration

- 35. How does Gradient Descent work ? • Before starting the working principle of gradient descent, we should know some basic concepts to find out the slope of a line from linear regression. • The equation for simple linear regression is given as: Y=mX+c • where 'm' represents the slope of the line, and 'c' represents the intercepts on the y-axis

- 36. • The starting point (as shown in the figure) is just an arbitrary point used to evaluate the performance. • At this starting point, we take the first derivative (slope) and use a tangent line to measure the steepness of this slope. • This slope then informs the updates to the parameters (weights and bias).

- 37. Steepest Descent • Gradient descent relates to the local minimum or local maximum of a function as follows: • If we move in the direction of the negative gradient, that is, away from the gradient of the function at the current point, we move toward a local minimum of that function. • If we move in the direction of the positive gradient, that is, toward the gradient of the function at the current point, we move toward a local maximum of that function.

- 38. • Moving along the positive gradient is known as gradient ascent; moving along the negative gradient is gradient descent, which is also known as steepest descent. • To minimize the cost function, gradient descent performs two steps iteratively:

- 39. • Calculate the first-order derivative of the function to obtain its gradient, or slope, at the current point. • Move in the direction opposite to the gradient, taking a step of alpha times the gradient from the current point, where alpha is the learning rate.

- 40. • Learning Rate: It is defined as the step size taken to reach the minimum or lowest point. • This is typically a small value that is evaluated and updated based on the behaviour of the cost function. • If the learning rate is high, it results in larger steps but risks overshooting the minimum. • If the learning rate is low, the steps are small, which compromises overall efficiency but gives the advantage of more precision.
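
The two iterative steps and the role of the learning rate can be sketched for the line Y = mX + c. The sketch assumes mean squared error as the cost function; the learning rate, step count, and data are illustrative values, not taken from the slides.

```python
def gradient_descent(xs, ys, learning_rate=0.05, steps=2000):
    """Fit Y = m*X + c by gradient descent on the mean squared error."""
    m, c = 0.0, 0.0  # arbitrary starting point
    n = len(xs)
    for _ in range(steps):
        errors = [(m * x + c) - y for x, y in zip(xs, ys)]
        # Step 1: first-order derivatives of the cost with respect to m and c.
        grad_m = (2.0 / n) * sum(e * x for e, x in zip(errors, xs))
        grad_c = (2.0 / n) * sum(errors)
        # Step 2: move against the gradient, scaled by the learning rate.
        m -= learning_rate * grad_m
        c -= learning_rate * grad_c
    return m, c

# Hypothetical data generated from Y = 2X + 1.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [3.0, 5.0, 7.0, 9.0]
m, c = gradient_descent(xs, ys)
print(round(m, 4), round(c, 4))
```

On this data a much larger learning rate makes the updates overshoot and diverge, while a much smaller one needs far more steps to reach the same precision.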

- 42. Linear Classification Model • A Classification algorithm makes its classification based on a linear predictor function combining a set of weights with the feature vector. • Linear Discriminant analysis: Linear Discriminant analysis is one of the most popular dimensionality reduction techniques used for supervised classification problems in machine learning.

- 43. • Whenever two or more classes with multiple features need to be separated efficiently, the LDA model is considered the most common technique for solving such classification problems. • For example, suppose we have two classes with multiple features and need to separate them efficiently. If we classify them using a single feature, the classes may overlap.

- 45. • The LDA algorithm works in 6 steps as follows: Step 1 - Calculate the mean and standard deviation of each feature. Step 2 - Calculate the class scatter matrices (within-class and between-class). Step 3 - Choose k eigenvectors and their corresponding eigenvalues for the scatter matrices. Step 4 - Create a new matrix that contains the eigenvectors mapped to the k eigenvalues. Step 5 - Obtain new features by taking the dot product of the data and the matrix from Step 4. Step 6 - Use the new features for classification or dimensionality reduction.
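
For the special case of two classes and two features, the eigenvector step reduces to a closed form, the Fisher direction w = Sw⁻¹(m1 − m0). The sketch below uses that simplification instead of the general eigen-decomposition described in the steps; the function names and toy points are hypothetical.

```python
def mean_vector(points):
    """Per-feature mean of a list of 2-D points."""
    n = len(points)
    return [sum(p[i] for p in points) / n for i in range(2)]

def scatter_matrix(points, mean):
    """Sum of outer products of deviations from the class mean (2x2)."""
    s = [[0.0, 0.0], [0.0, 0.0]]
    for p in points:
        d = [p[0] - mean[0], p[1] - mean[1]]
        for i in range(2):
            for j in range(2):
                s[i][j] += d[i] * d[j]
    return s

def fisher_direction(class0, class1):
    """Projection direction w = Sw^{-1} (m1 - m0) for two classes."""
    m0, m1 = mean_vector(class0), mean_vector(class1)
    s0, s1 = scatter_matrix(class0, m0), scatter_matrix(class1, m1)
    # Within-class scatter is the sum of the per-class scatter matrices.
    sw = [[s0[i][j] + s1[i][j] for j in range(2)] for i in range(2)]
    det = sw[0][0] * sw[1][1] - sw[0][1] * sw[1][0]
    inv = [[sw[1][1] / det, -sw[0][1] / det],
           [-sw[1][0] / det, sw[0][0] / det]]
    diff = [m1[0] - m0[0], m1[1] - m0[1]]
    return [inv[0][0] * diff[0] + inv[0][1] * diff[1],
            inv[1][0] * diff[0] + inv[1][1] * diff[1]]

def project(point, w):
    """New 1-D feature: dot product of the point with the direction w."""
    return point[0] * w[0] + point[1] * w[1]

# Hypothetical two-class data with two features each.
class0 = [(1.0, 2.0), (2.0, 1.0), (2.0, 3.0), (3.0, 2.0)]
class1 = [(6.0, 5.0), (7.0, 4.0), (7.0, 6.0), (8.0, 5.0)]
w = fisher_direction(class0, class1)
proj0 = [project(p, w) for p in class0]
proj1 = [project(p, w) for p in class1]
print(w, max(proj0), min(proj1))
```

After projection the two classes occupy separate ranges on a single axis, which shows both uses named in Step 6: one feature instead of two, and a simple threshold for classification.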

- 46. Benefits of LDA • LDA is used for classification problems • LDA is used for dimensionality reduction

- 47. Logistic regression • Logistic regression is one of the most popular Machine Learning algorithms, which comes under the Supervised Learning technique. • It is used for predicting the categorical dependent variable using a given set of independent variables.

- 48. • Logistic regression predicts the output of a categorical dependent variable. • Therefore the outcome must be a categorical or discrete value. • It can be either Yes or No, 0 or 1, true or False, etc. but instead of giving the exact value as 0 and 1, it gives the probabilistic values which lie between 0 and 1.

- 49. Types of Logistic Regression 1. Binary Logistic Regression • The categorical response has only two possible outcomes. Example: True or False 2. Multinomial Logistic Regression • Three or more categories without ordering. Example: Predicting which food is preferred more (Indian, Chinese, Continental) 3. Ordinal Logistic Regression • Three or more categories with ordering. Example: Movie rating from 1 to 5

- 50. • In logistic regression, instead of fitting a regression line, we fit an "S"-shaped logistic function whose output is bounded by the two limiting values 0 and 1. • The curve of the logistic function indicates the likelihood of something. • The image below shows the logistic function:

- 52. • The logistic regression equation is derived from the equation of a straight line. • In logistic regression, y can only lie between 0 and 1.
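
A short sketch of how a straight-line score is squeezed into the range 0 to 1 by the logistic (sigmoid) function. The weights, bias, and the 0.5 decision threshold are hypothetical values chosen for illustration.

```python
import math

def sigmoid(z):
    """Logistic function 1 / (1 + e^(-z)), written to avoid overflow."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def predict_probability(weights, bias, features):
    """Apply the sigmoid to the linear score bias + sum(w_i * x_i)."""
    z = bias + sum(w * x for w, x in zip(weights, features))
    return sigmoid(z)

def predict_label(weights, bias, features, threshold=0.5):
    """Turn the probability into a 0/1 class using a threshold."""
    return 1 if predict_probability(weights, bias, features) >= threshold else 0

def log_odds(p):
    """Inverse of the sigmoid: log(p / (1 - p)) recovers the linear score."""
    return math.log(p / (1.0 - p))

# Hypothetical model parameters and input.
weights, bias = [1.0, -2.0], 0.5
p = predict_probability(weights, bias, [1.5, 1.0])
print(p, predict_label(weights, bias, [1.5, 1.0]), log_odds(sigmoid(2.0)))
```

The linear score itself is unbounded; only after the sigmoid does it read as a probability, and taking the log-odds of that probability returns the original straight-line value.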

- 53. Naive Bayes • Naive Bayes algorithm is a supervised learning algorithm, which is based on Bayes theorem and used for solving classification problems. • It is mainly used in text classification that includes a high-dimensional training dataset. • Naïve Bayes Classifier is one of the simple and most effective Classification algorithms which helps in building the fast machine learning models that can make quick predictions.

- 54. • Some popular applications of the Naïve Bayes algorithm are spam filtering, sentiment analysis, and classifying articles. • Conditional Probability • Conditional probability is the probability of an event or outcome happening, given that a previous event or outcome has occurred.

- 55. • Let A and B be two events with probabilities P(A) and P(B) respectively. The probability of occurrence of event B given A (with P(A) > 0) is: P(B|A) = P(A ∩ B)/P(A) • Similarly, the probability of occurrence of event A given B (with P(B) > 0) is: P(A|B) = P(A ∩ B)/P(B)

- 56. Joint Probability • Joint probability is a statistical measure of the likelihood of two events occurring together. • Formula for Joint Probability • The joint probability of events A and B is the probability of their intersection, written P(A ∩ B). • If A and B are independent, P(A and B) = P(A)P(B); in general, P(A and B) = P(A)P(B|A).
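
The conditional and joint probability formulas can be checked by counting outcomes of a single fair die. The events chosen below are hypothetical examples; `Fraction` keeps the arithmetic exact.

```python
from fractions import Fraction

OUTCOMES = set(range(1, 7))  # faces of one fair die

def prob(event):
    """Probability of an event (a set of faces) under equally likely outcomes."""
    return Fraction(len(event & OUTCOMES), len(OUTCOMES))

def conditional(b, a):
    """P(B|A) = P(A and B) / P(A)."""
    return prob(a & b) / prob(a)

even = {2, 4, 6}
greater_than_3 = {4, 5, 6}
at_most_2 = {1, 2}

# Dependent pair: knowing the roll is even changes the chance it exceeds 3.
p_joint_dependent = prob(even & greater_than_3)
p_cond = conditional(greater_than_3, even)

# Independent pair: the joint probability equals the product of the marginals.
p_joint_independent = prob(even & at_most_2)

print(p_joint_dependent, p_cond, p_joint_independent)
```

For the dependent pair the product P(A)P(B) is 1/4 while the true joint probability is 1/3, so the product formula only applies when the events are independent.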

- 57. Bayes' Theorem • Bayes' Theorem states that the conditional probability of an event, given the occurrence of another event, is equal to the likelihood of the second event given the first event multiplied by the probability of the first event, divided by the probability of the second event: P(A|B) = P(B|A) P(A) / P(B) ... (a) • Bayes' Theorem gives the relation between P(A|B) and P(B|A).

- 58. • The above equation is called Bayes' rule or Bayes' theorem. • This equation is the basis of most modern AI systems for probabilistic inference. • P(A|B) is known as the posterior, which we need to calculate; it is read as the probability of hypothesis A given that evidence B has occurred.

- 59. • P(A) is called the prior probability, the probability of the hypothesis before considering the evidence. • P(B) is called the marginal probability, the probability of the evidence alone. • In equation (a), in general, we can write P(B) = Σi P(Ai) P(B|Ai), hence Bayes' rule can be written as: P(Ai|B) = P(Ai) P(B|Ai) / Σk P(Ak) P(B|Ak)
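
A worked sketch of Bayes' rule with the marginal P(B) expanded over all hypotheses. The spam-filter setting, the priors, and the likelihoods are made-up numbers for illustration only.

```python
from fractions import Fraction

def posterior(priors, likelihoods):
    """Return P(A_i | B) for every hypothesis A_i.

    priors[h] is P(A_i); likelihoods[h] is P(B | A_i) for the observed evidence B.
    """
    # Marginal probability of the evidence: sum over all hypotheses.
    evidence = sum(priors[h] * likelihoods[h] for h in priors)
    return {h: priors[h] * likelihoods[h] / evidence for h in priors}

# Hypothetical numbers: 20% of mail is spam; the word "offer" appears in
# 50% of spam messages and 5% of non-spam messages.
priors = {"spam": Fraction(1, 5), "ham": Fraction(4, 5)}
likelihoods = {"spam": Fraction(1, 2), "ham": Fraction(1, 20)}

posteriors = posterior(priors, likelihoods)
print(posteriors)
```

Seeing the word raises the probability of spam from the prior of 1/5 to a posterior of 5/7, and the posteriors over all hypotheses still sum to 1.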

- 60. Support Vector Machine (SVM) • Support Vector Machine (SVM) is an algorithm used for both classification and regression. • The objective of the SVM algorithm is to find a hyperplane in an N-dimensional space that distinctly classifies the data points. • The dimension of the hyperplane depends on the number of features. • If the number of input features is two, then the hyperplane is just a line. If the number of input features is three, then the hyperplane becomes a 2-D plane.

- 61. • The goal of the SVM algorithm is to create the best line or decision boundary that can segregate n-dimensional space into classes so that we can easily put the new data point in the correct category in the future. • This best decision boundary is called a hyperplane.
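
Once a hyperplane w·x + b = 0 is known, placing a new data point in a category only requires checking which side of it the point falls on. The sketch below does not train an SVM; the weight vector `w` and offset `b` are hypothetical values standing in for a boundary that training would produce.

```python
import math

def decision_value(w, b, x):
    """Signed score w.x + b; its sign tells which side of the hyperplane x is on."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b

def classify(w, b, x):
    """Assign class +1 or -1 according to the side of the hyperplane."""
    return 1 if decision_value(w, b, x) >= 0 else -1

def distance_to_hyperplane(w, b, x):
    """Perpendicular distance from x to the hyperplane w.x + b = 0."""
    norm = math.sqrt(sum(wi * wi for wi in w))
    return abs(decision_value(w, b, x)) / norm

# Hypothetical boundary in two dimensions: the line 3*x1 + 4*x2 - 12 = 0.
w, b = (3.0, 4.0), -12.0
for point in [(4.0, 5.0), (0.0, 0.0)]:
    print(point, classify(w, b, point), distance_to_hyperplane(w, b, point))
```

With two features the boundary is a line, matching the slides; the same functions work unchanged for any number of features.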

- 62. • Bad Decision boundary