The Meet Group Safety Practices

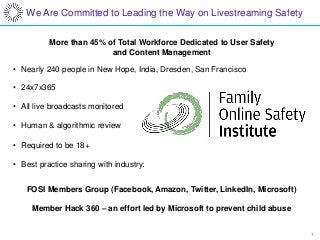

- 1. We Are Committed to Leading the Way on Livestreaming Safety
     - More than 45% of total workforce dedicated to user safety and content management
     - Nearly 240 people in New Hope, India, Dresden, San Francisco
     - 24x7x365
     - All live broadcasts monitored
     - Human & algorithmic review
     - Required to be 18+
     - Best practice sharing with industry:
       - FOSI Members Group (Facebook, Amazon, Twitter, LinkedIn, Microsoft)
       - Member of Hack 360, an effort led by Microsoft to prevent child abuse

- 2. Large-scale Trust & Safety Operation

- 3. Standards & Education
     - A cross-disciplinary committee meets weekly to review and update moderation standards
     - We educate on standards in various locations throughout the app
     - We make changes in response to:
       - Observed behavior
       - User feedback
       - New features
       - Changes in law
       - Changes in best practices
     - Moderation standards are fully customizable and constantly improving: https://terms.meetme.com/code-of-conduct
     - We do not record Live
       - We do not make or store recordings of broadcasts
       - We do not re-broadcast streams

- 4. Detection & Prevention
     - We sample broadcaster streams algorithmically and with high frequency
     - We use proprietary algorithms for initial review. Our configurable algorithms clear more than 80% of broadcaster content, with an error rate lower than the typical human error rate
     - Content that the algorithm does not clear goes to our human reviewers
     - We review nearly ten million photos per day
     - If a user reports a broadcaster:
       1. A screenshot is generated, which skips algorithmic review and goes to the top of the human-review queue
       2. The broadcast is watched and listened to by a human moderator, typically within 60 seconds
     - Human moderators also proactively watch a subset of broadcasts selected by algorithms
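The routing described on the Detection & Prevention slide can be pictured as a priority queue. The following is only a minimal sketch under stated assumptions, not The Meet Group's implementation: the names `HumanReviewQueue`, `triage_sample`, and `report_broadcast`, the `risk_score` input, and the `clear_below` threshold are all hypothetical. The slide states only that cleared content stops at the algorithm, uncleared content goes to human reviewers, and user reports skip algorithmic review and go to the top of the human-review queue.

```python
import heapq
import itertools
from dataclasses import dataclass, field

# Assumed priority levels for illustration: a lower number is reviewed sooner.
PRIORITY_USER_REPORT = 0
PRIORITY_ALGORITHM_FLAG = 1


@dataclass(order=True)
class ReviewItem:
    priority: int
    sequence: int                              # tie-breaker: first in, first out
    broadcast_id: str = field(compare=False)
    reason: str = field(compare=False)


class HumanReviewQueue:
    """Hypothetical queue of items waiting for a human moderator."""

    def __init__(self):
        self._heap = []
        self._counter = itertools.count()

    def push(self, broadcast_id, priority, reason):
        item = ReviewItem(priority, next(self._counter), broadcast_id, reason)
        heapq.heappush(self._heap, item)

    def pop(self):
        return heapq.heappop(self._heap)

    def __len__(self):
        return len(self._heap)


def triage_sample(queue, broadcast_id, risk_score, clear_below=0.2):
    """Route one algorithmically sampled frame; return True if it was cleared.

    risk_score and clear_below are assumed stand-ins for the proprietary
    algorithm's output and its configurable threshold.
    """
    if risk_score < clear_below:
        return True
    queue.push(broadcast_id, PRIORITY_ALGORITHM_FLAG, "algorithm did not clear")
    return False


def report_broadcast(queue, broadcast_id):
    """A user report skips algorithmic review and goes ahead of flagged samples."""
    queue.push(broadcast_id, PRIORITY_USER_REPORT, "user report")


queue = HumanReviewQueue()
triage_sample(queue, "b1", risk_score=0.05)    # cleared, never queued
triage_sample(queue, "b2", risk_score=0.70)    # not cleared, queued for humans
report_broadcast(queue, "b3")                  # reported after b2 was queued
first = queue.pop()
print(first.broadcast_id, first.reason)        # prints: b3 user report
```

Two priority levels with a sequence counter keep reports ahead of algorithmic flags while preserving arrival order within each level.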

- 5. Enforcement & Remediation
     - Consequences vary based on severity of offense
     - Viewers
       - Grey chat comments not displayed
       - Banned from broadcast
     - Broadcasters
       - Broadcast ended
       - Time ban
       - Explanatory warning sent (driving, etc.)
       - Broadcast ended and profile closed (sexual content)
       - Broadcast ended and authorities notified (underage content, imminent danger)
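The offense-to-consequence pairs that the Enforcement & Remediation slide spells out for broadcasters could be expressed as a lookup table. This is a hedged sketch only: the `Action` enum, the offense keys, and `consequences_for` are hypothetical names, and the table includes only the pairings the slide states explicitly. Offenses the slide does not list raise an error rather than guessing a consequence.

```python
from enum import Enum


class Action(Enum):
    WARNING_SENT = "explanatory warning sent"
    BROADCAST_ENDED = "broadcast ended"
    PROFILE_CLOSED = "profile closed"
    AUTHORITIES_NOTIFIED = "authorities notified"


# Only the pairings named on the slide; the keys are assumed identifiers.
BROADCASTER_CONSEQUENCES = {
    "driving": (Action.WARNING_SENT,),
    "sexual_content": (Action.BROADCAST_ENDED, Action.PROFILE_CLOSED),
    "underage_content": (Action.BROADCAST_ENDED, Action.AUTHORITIES_NOTIFIED),
    "imminent_danger": (Action.BROADCAST_ENDED, Action.AUTHORITIES_NOTIFIED),
}


def consequences_for(offense):
    """Return the actions listed for an offense, or raise if none is listed."""
    try:
        return BROADCASTER_CONSEQUENCES[offense]
    except KeyError:
        raise ValueError(f"no consequence listed for offense: {offense!r}") from None


for action in consequences_for("sexual_content"):
    print(action.value)
# prints:
# broadcast ended
# profile closed
```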

- 6. Continuous Improvement
     - We are at the forefront of livestreaming content moderation. We manage a pipeline of safety-oriented tasks, just as we manage a pipeline of engagement-oriented tasks
     - Pipeline includes:
       - Industry-leading abuse-reporting prominence on all visual content and profiles
       - Cooling-off period for new streamers
       - Forced acknowledgement of content message for all streamers every time
       - Time delay for certain streams
       - Enhanced transparency for moderator actions
       - Data science, algorithmic improvements

Editor's Notes

- 1

- It all starts with standard setting. Meet weekly. Transparent, ever-evolving standards, located throughout the app. Don’t record Live.