360 Degree Appraisals: Theory and Evidence

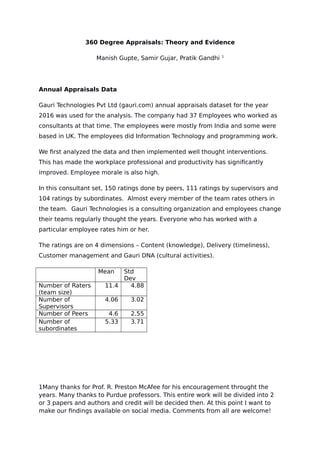

- 1. 360 Degree Appraisals: Theory and Evidence

     Manish Gupte, Samir Gujar, Pratik Gandhi ¹

     Annual Appraisals Data

     The Gauri Technologies Pvt Ltd (gauri.com) annual appraisals dataset for the year 2016 was used for the analysis. The company had 37 employees who worked as consultants at that time. The employees were mostly from India, and some were based in the UK. They did information technology and programming work. We first analyzed the data and then implemented well-thought-out interventions. These have made the workplace more professional, and productivity has improved significantly. Employee morale is also high.

     In this consultant set, 150 ratings were done by peers, 111 by supervisors and 104 by subordinates. Almost every member of a team rates the others in the team. Gauri Technologies is a consulting organization, and employees change teams regularly throughout the year. Everyone who has worked with a particular employee rates him or her. The ratings are on 4 dimensions: Content (knowledge), Delivery (timeliness), Customer management and Gauri DNA (cultural activities).

     | | Mean | Std Dev |
     |---|---|---|
     | Number of raters (team size) | 11.4 | 4.88 |
     | Number of supervisors | 4.06 | 3.02 |
     | Number of peers | 4.6 | 2.55 |
     | Number of subordinates | 5.33 | 3.71 |

     ¹ Many thanks to Prof. R. Preston McAfee for his encouragement throughout the years. Many thanks to Purdue professors. This entire work will be divided into 2 or 3 papers, and authors and credit will be decided then. At this point I want to make our findings available on social media. Comments from all are welcome!

- 2. Evidence of Natural Group Formation or Possible Collusion in Peer Appraisals

     We created two groups of people. The first group has people who speak the local language, and the second has out-of-state employees. An indicator variable was set if the rater and ratee are from different groups. In 87 peer ratings there was no competition, and these are candidates for a collusion effect. In 66 peer ratings competition was possible.

     We conducted a t-test comparing peer (non-compete group) and supervisor ratings for the Content (Knowledge) dimension. The results are as follows. Observations: 87 peer, 120 supervisor. Means: 3.16 peer, 2.89 supervisor. p-value = 0.008.

     The findings show that peer ratings are 9% higher than supervisor ratings. A t-test between the peer competition group and supervisor ratings did not show a significant difference. This suggests employees find it easier to work with people who are from their natural group. Game playing and collusion are also likely.

     We then computed the mean peer rating (in each of the individual groups) and the mean supervisor rating. The means were computed for individual employees. The findings are as follows. There was no significant difference in timeliness.

     | Mean peer rating minus mean supervisor rating | Collusion group | Competition group |
     |---|---|---|
     | Knowledgeability | 0.206 | 0.062 |
     | Timeliness | 0.22 | 0.083 |

     This suggests a 6.93% upward bias in knowledgeability and an 8.21% upward bias in timeliness in the collusion group. The bias is low in the competition group.
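
The group indicator and the peer versus supervisor comparison described in this slide can be sketched as follows. This is a minimal illustration, not the authors' code: the function names are hypothetical, the toy ratings are invented for the example, and the choice of Welch's unequal-variance t statistic with a normal approximation for the p-value is an assumption (the source only says "t-test").

```python
from math import sqrt
from statistics import NormalDist, mean, variance


def different_group(rater_group: str, ratee_group: str) -> int:
    """Indicator: 1 if rater and ratee belong to different groups, else 0."""
    return int(rater_group != ratee_group)


def welch_t(sample_a, sample_b):
    """Welch's t statistic with a normal-approximation two-sided p-value."""
    se = sqrt(variance(sample_a) / len(sample_a)
              + variance(sample_b) / len(sample_b))
    t = (mean(sample_a) - mean(sample_b)) / se
    p = 2 * (1 - NormalDist().cdf(abs(t)))
    return t, p


def relative_gap(peer_mean: float, supervisor_mean: float) -> float:
    """Peer mean as a fractional premium over the supervisor mean."""
    return (peer_mean - supervisor_mean) / supervisor_mean


# Hypothetical toy ratings, NOT the paper's data.
peer_same_group = [3, 3, 4, 3, 4]
supervisor = [2, 3, 3, 2, 3]
t_stat, p_value = welch_t(peer_same_group, supervisor)

# Means reported in the text: 3.16 (peer) and 2.89 (supervisor).
gap = relative_gap(3.16, 2.89)
```

With the reported means, `gap` is about 0.093, in line with the 9% quoted in the text. The normal approximation is reasonable only for samples of the size reported here (87 and 120 observations); for small samples a t distribution would be needed.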

- 3. We also ran an ordinary least squares regression with a ratee's average peer rating minus average supervisor rating as the left hand side. Thus, we study the effect of various factors and incentives on overall bias. The results are as follows:

     | | Knowledgeability estimate | Knowledgeability p-value | Timeliness estimate | Timeliness p-value |
     |---|---|---|---|---|
     | Intercept | 2.7 | 0.003 | 2.51 | 0.0003 |
     | Mean supervisor rating | -0.77 | 0.002 | -0.705 | 0.002 |
     | Num possible colluders | -0.11 | 0.29 | -0.181 | 0.067 |
     | Num supervisor raters | 0.035 | 0.46 | 0.049 | 0.277 |

     When the supervisor rating is high, collusion is less likely. When the number of possible colluders is larger, collusion is less likely in timeliness, but it still happens in knowledgeability. As knowledgeability is difficult to quantify, the inability to exert peer pressure and influence more raters does not affect the knowledgeability bias, but it reduces the timeliness bias. It is more difficult to collude on timeliness than on knowledgeability.

     Theory

     How knowledgeable a person is, is a subjective question when compared to aspects like timeliness. So, the bias in peer ratings could be due to collusion. (Theory required: principal agent model with multiple signals revealed by peers; collusion and peer pressure.) Also, knowledge is difficult to verify, but timeliness can be verified more easily. The upward bias is easier on the knowledge dimension. This suggests an effect like that of Holmstrom and Milgrom, who have shown that agents focus their efforts on the signals which are better. In the same way, agents distort ratings more where the principal's signal is poorer.

     Biases in individual ratings: Collusion as well as competition
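
To read the ratee-level regression table in slide 3, it can help to evaluate the fitted equation at a few values. The sketch below plugs the reported estimates into a linear prediction. The function and key names are hypothetical, and the example inputs are illustrative. Note that the coefficients on the number of possible colluders and the number of supervisor raters are not significant at conventional levels in the knowledgeability column, so predictions should be read loosely.

```python
# Estimates copied from the regression table in the text.
COEFS = {
    "knowledgeability": {
        "intercept": 2.7, "sup_mean": -0.77,
        "colluders": -0.11, "sup_raters": 0.035,
    },
    "timeliness": {
        "intercept": 2.51, "sup_mean": -0.705,
        "colluders": -0.181, "sup_raters": 0.049,
    },
}


def predicted_bias(dimension, sup_mean, colluders, sup_raters):
    """Fitted mean peer rating minus mean supervisor rating for one ratee."""
    c = COEFS[dimension]
    return (c["intercept"]
            + c["sup_mean"] * sup_mean
            + c["colluders"] * colluders
            + c["sup_raters"] * sup_raters)


# Illustrative inputs (hypothetical ratee): supervisor mean 3.0,
# 2 possible colluders, 4 supervisor raters.
know = predicted_bias("knowledgeability", 3.0, 2, 4)
time = predicted_bias("timeliness", 3.0, 2, 4)
```

For this hypothetical ratee the fitted bias is about 0.31 rating points on knowledgeability and about 0.23 on timeliness. Raising the supervisor mean lowers both, which matches the negative coefficient on mean supervisor rating.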

- 4. We now consider individual peer ratings on knowledgeability. The left hand side is the peer rating minus the mean supervisor rating for that employee. So, individual game playing and biases can be detected. The histogram of this bias is as follows:

     [Figure: histogram of individual peer bias.]

     The histogram indicates that there are both upward and downward biases. OLS results with individual peer bias as the left hand side are as follows:

     | | Estimate | t-value | p-value |
     |---|---|---|---|
     | Intercept | 2.71 | 6.31 | 0 |
     | Mean supervisor rating | -0.82 | -6.2 | 0 |
     | 1 if collusion is possible | 0.22 | 1.87 | 0.064 |
     | Number of peers an employee has mentioned strengths of | -0.06 | -2.13 | 0.035 |

     The adjusted R-squared is 0.25.
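
The left hand side variable of slide 4 (each peer rating minus the ratee's mean supervisor rating) can be computed as in the sketch below. The record layout, the function name and the toy ratings are assumptions made for illustration; they are not taken from the dataset.

```python
from statistics import mean


def individual_peer_bias(peer_ratings, supervisor_ratings):
    """Return (rater, ratee, bias) for each peer rating.

    peer_ratings: list of (rater, ratee, score) tuples.
    supervisor_ratings: dict mapping ratee -> list of supervisor scores.
    Bias is the peer score minus the ratee's mean supervisor score.
    Ratees without any supervisor rating are skipped.
    """
    sup_mean = {ratee: mean(scores)
                for ratee, scores in supervisor_ratings.items()}
    return [(rater, ratee, score - sup_mean[ratee])
            for rater, ratee, score in peer_ratings
            if ratee in sup_mean]


# Hypothetical toy data, NOT the paper's data.
supervisor_ratings = {"A": [3, 3], "B": [2, 3]}
peer_ratings = [("B", "A", 4), ("A", "B", 2)]
biases = individual_peer_bias(peer_ratings, supervisor_ratings)
```

In this toy example the rating given to A is 1 point above A's supervisor mean (an upward bias) and the rating given to B is 0.5 below B's supervisor mean (a downward bias), the two directions the histogram is said to show.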

- 5. There is evidence that the bias is upward when collusion is possible. Higher supervisor ratings are correlated with downward bias. More positive and engaged employees (those who list more strengths) seem to discern their peers more, and the bias shifts downward.

     We then created indicator variables for competition and collusion biases. If the bias is below -0.2, it is considered competition, and if the bias is above 0.2, it is considered collusion. OLS results with competition as the left hand side are as follows:

     | | Estimate | t-value | p-value |
     |---|---|---|---|
     | Intercept | -0.836 | -3.23 | 0.0015 |
     | Mean supervisor rating | 0.35 | 4.5 | 0 |
     | Number of raters | -0.026 | -1.735 | 0.085 |
     | Number of peers strengths are mentioned for | 0.045 | 2.56 | 0.011 |

     The adjusted R-squared is 0.156. This result indicates that downward bias due to competition concerns is possible, but as the number of raters increases, the possibility of competition adversely affecting ratings falls. Stronger employees (those with higher supervisor ratings) experience competition pressure. When raters are more positive, they tend to compete harder too.

     OLS results with collusion as the left hand side are as follows:

     | | Estimate | t-value | p-value |
     |---|---|---|---|
     | Intercept | 1.94 | 7.12 | 0 |
     | 1 if collusion possible | 0.19 | 2.52 | 0.013 |
     | Mean supervisor rating | -0.467 | -5.62 | 0 |
     | Number of peers strengths mentioned for | -0.044 | -2.47 | 0.015 |
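
The competition and collusion indicators of slide 5 can be written as a small thresholding rule. This is a sketch under the reading that "competition" means a bias below -0.2 and "collusion" means a bias above 0.2; the function name is hypothetical, and the treatment of values exactly at the threshold (counted as neither) is an assumption.

```python
def bias_indicators(bias: float, threshold: float = 0.2):
    """Return (competition, collusion) 0/1 indicators for one peer rating.

    competition = 1 if the bias is below -threshold (downward bias).
    collusion   = 1 if the bias is above +threshold (upward bias).
    Biases within [-threshold, threshold] are flagged as neither.
    """
    competition = int(bias < -threshold)
    collusion = int(bias > threshold)
    return competition, collusion


# Hypothetical bias values for illustration.
flags = [bias_indicators(b) for b in (0.5, -0.5, 0.1)]
```

Because each indicator takes only the values 0 and 1, running OLS on it amounts to a linear probability model, so the reported coefficients can be read as changes in the probability of a rating being flagged.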

- 6. When the rater and ratee are from the same group, collusion is more likely. Weaker employees tend to collude (higher supervisor ratings mean less collusion). Also, more positive employees are less likely to collude.

     Text Feedback Analysis

     Feedback on strengths and areas of improvement is collected from employees.

     Areas of Improvement for Knowledge

     | | Total raters | Total ratings | Num of minor improvements | Num of not minor improvements | Not minor / total raters |
     |---|---|---|---|---|---|
     | Non compete peer | 30 | 23 | 7 | 16 | 0.533 |
     | Compete peer | 26 | 16 | 1 | 15 | 0.577 |

     Areas of Improvement for Timeliness

     | | Total raters | Total ratings | Num of minor improvements | Num of not minor improvements | Not minor / total raters |
     |---|---|---|---|---|---|
     | Non compete peer | 30 | 28 | 2 | 26 | 0.867 |
     | Compete peer | 26 | 20 | 1 | 19 | 0.731 |

     Communication and presentation were considered minor improvement areas.

- 7. Strengths

     | | Total raters | Total knowledge ratings | Total knowledge / raters | Total timeliness ratings | Total timeliness / raters |
     |---|---|---|---|---|---|
     | Non compete peer | 30 | 73 | 2.433 | 63 | 2.100 |
     | Compete peer | 26 | 45 | 1.731 | 42 | 1.615 |

     Peers from the same group are more likely to state strengths than peers from different groups. The areas of improvement suggested are marginally higher too in the non-compete group when compared to the compete group. This suggests employees in the same group like to project their peers better. The areas of improvement could be higher in the non-compete group because the peers genuinely want to help their friends.

     The probability of getting feedback on knowledge divided by the probability of getting feedback on timeliness is summarized below.

     | | Improvements | Strengths |
     |---|---|---|
     | Non compete peer | 0.615385 | 1.15873 |
     | Compete peer | 0.789474 | 1.071429 |

     A number close to 1 suggests the employees are indifferent between giving feedback on timeliness and on knowledge. As seen above, the collusion group has a lower knowledge/timeliness ratio on areas of improvement and a higher knowledge/timeliness ratio on strengths. This suggests peers like to shade the truth when the principal's signal is weaker. Knowledge is difficult to verify, but timeliness can be easily verified. Holmstrom and Milgrom have shown that agents shirk more on tasks which have a weaker signal. In the same way, agents distort ratings more on dimensions for which the principal has a weaker signal.
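
The knowledge/timeliness ratios in the last table can be reproduced from the counts in the text feedback tables. The sketch below assumes the improvement ratio uses the "not minor" improvement counts, which matches the reported figures; because both dimensions share the same number of raters within a group, the rater totals cancel. The dictionary keys and function name are hypothetical.

```python
# Counts copied from the text feedback tables.
COUNTS = {
    "Non compete peer": {
        "know_not_minor": 16, "time_not_minor": 26,
        "know_strengths": 73, "time_strengths": 63,
    },
    "Compete peer": {
        "know_not_minor": 15, "time_not_minor": 19,
        "know_strengths": 45, "time_strengths": 42,
    },
}


def feedback_ratios(row):
    """Knowledge feedback relative to timeliness feedback for one group."""
    return {
        "improvements": row["know_not_minor"] / row["time_not_minor"],
        "strengths": row["know_strengths"] / row["time_strengths"],
    }


ratios = {group: feedback_ratios(row) for group, row in COUNTS.items()}
for group, r in ratios.items():
    print(group, round(r["improvements"], 6), round(r["strengths"], 6))
```

The non-compete (collusion) group has the lower improvements ratio and the higher strengths ratio, which is the pattern the text interprets as shading on the harder-to-verify knowledge dimension.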

- 8. Evidence of Subordinates Offering Favour to Supervisors

     Fear or respect could also be a reason. T-test results, with 55 subordinate rating observations and 108 supervisor rating observations:

     | Dimension | Mean of subordinate | Mean of supervisor | p-value |
     |---|---|---|---|
     | Content (Knowledge) | 3.12 | 2.84 | 0.01 |
     | Delivery (Timeliness) | 3.09 | 2.78 | 0.03 |
     | Customer | 3.06 | 2.66 | 0.00 |
     | Culture | 2.92 | 2.74 | 0.18 |

     Evidence for joint pay as an incentive mechanism for collection and elicitation of developmental feedback

     We are implementing continuous feedback. When an employee was recognized for giving useful developmental feedback about another employee, he gave more useful feedback. Weekly meetings with individual employees were productive. Employees were recognized if their feedback was useful and mildly reprimanded if they did not give useful feedback. This strategy is more potent than only rewarding useful feedback. (Theory required)

     Solutions to Bias in Ratings

     We are collecting coarse information to avoid biases. We are asking employees to justify their observations. And we are collecting feedback on a continuing basis so that people do not forget what actually happened and facts can be verified more easily. An econometrics-based diagnostic, as presented in the paper, also alerts the management about possible biases and their motivations.

- 9. The management then verifies whether something like this is actually happening, and that reduces biases and game playing. (Theory required) Continuous feedback has also improved employee morale and productivity. Shirking is also being detected early.
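
As a rough illustration of the kind of diagnostic that could alert management, the sketch below screens the subordinate versus supervisor comparison from slide 8 and flags dimensions where the gap is significant. The 0.05 cutoff and all names are assumptions for the example, not part of the source.

```python
# (subordinate mean, supervisor mean, p-value) copied from the slide 8 table.
COMPARISON = {
    "Content (Knowledge)": (3.12, 2.84, 0.01),
    "Delivery (Timeliness)": (3.09, 2.78, 0.03),
    "Customer": (3.06, 2.66, 0.00),
    "Culture": (2.92, 2.74, 0.18),
}


def flag_dimensions(comparison, alpha=0.05):
    """Return dimensions where subordinates rate higher and p < alpha."""
    return [dim for dim, (sub, sup, p) in comparison.items()
            if sub > sup and p < alpha]


flagged = flag_dimensions(COMPARISON)
```

With the reported figures, the three work-related dimensions are flagged and Culture is not, so a manager following this rule would look for favour, fear or respect effects on those three dimensions first.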