Big Data Helsinki v 3 | "Federated Learning and Privacy-preserving AI" - Oguzhan Gencoglu

"Machine learning algorithms require significant amounts of training data which has been centralized on one machine or in a datacenter so far. For numerous applications, such need of collecting data can be extremely privacy-invasive. Recent advancements in AI research approach this issue by a new paradigm of training AI models, i.e., Federated Learning. In federated learning, edge devices (phones, computers, cars etc.) collaboratively learn a shared AI model while keeping all the training data on device, decoupling the ability to do machine learning from the need to store the data in the cloud. From personal data perspective, this paradigm enables a way of training a model on the device without directly inspecting users’ data on a server. This talk will pinpoint several examples of AI applications benefiting from federated learning and the likely future of privacy-aware systems."

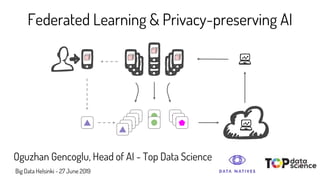

- 1. Federated Learning & Privacy-preserving AI Oguzhan Gencoglu, Head of AI - Top Data Science Big Data Helsinki - 27 June 2019

- 2. About Top Data Science ● Business : “AI as a Service” ● Located in Helsinki, Finland ● 15 people (12 data scientists with MScs and PhDs) ● Excellent customer track record - Finland, Germany, Denmark, Japan, Vietnam, Israel, USA ● 60+ machine learning solutions delivered Customers & Partners

- 3. Outline ● The Problem ● The Solution : Federated Learning ● Application Example ● Differential Privacy ● Other Privacy-preserving AI Concepts

- 4. The Problem?

- 9. Example - Gboard (Hard, Andrew, et al. "Federated learning for mobile keyboard prediction." arXiv preprint arXiv:1811.03604 (2018)) ● Higher next-word prediction accuracy: +24% ● More useful prediction strip: +10% more clicks ● Better emoji recommendation: +7% ● 11% more users share emojis

- 10. Differential Privacy ● A constraint on the algorithms used to publish aggregate information about a database, which limits the disclosure of private information ● Learning common patterns in a dataset without memorizing individual examples

- 11. “Do you pee in the shower?” yes / no

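- 12. [Diagram: randomized response with coin flips. First flip (HEADS / TAILS, 50% each): one branch leads to Mikko’s real answer, the other to a second flip (HEADS / TAILS, 25% each) that forces the answer to yes or no.]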
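The coin-flip scheme on this slide can be sketched in a few lines of Python. This is a minimal illustration, not the speaker's code: which coin side maps to "tell the truth" and the 30% true rate are assumptions chosen for the example. Under this scheme a "yes" is reported with probability 0.75 when the truth is yes and 0.25 when it is no, a ratio of 3, which corresponds to ε = ln 3 in differential privacy terms.

```python
import random
from typing import Sequence


def randomized_response(true_answer: bool, rng: random.Random) -> bool:
    """Report an answer using up to two fair coin flips."""
    if rng.random() < 0.5:
        # First flip (assumed: heads): report the real answer.
        return true_answer
    # First flip tails: a second flip picks the answer at random.
    return rng.random() < 0.5


def estimate_true_rate(reports: Sequence[bool]) -> float:
    """Invert P(report yes) = 0.25 + 0.5 * p to recover the true rate p."""
    observed = sum(reports) / len(reports)
    return (observed - 0.25) / 0.5


rng = random.Random(0)
true_rate = 0.3  # hypothetical share of people whose real answer is "yes"
population = [rng.random() < true_rate for _ in range(100_000)]
reports = [randomized_response(answer, rng) for answer in population]
estimate = estimate_true_rate(reports)
print(f"estimated yes-rate: {estimate:.3f}")
```

No individual report reveals the respondent's real answer with certainty, yet the aggregate rate can still be estimated from many reports.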

- 13. Relevant Concepts ● Quasi-identifier : pieces of information that are not in themselves unique identifiers, but are sufficiently well correlated with an entity that they can be combined to create a unique identifier [Figure: typical bank loan eligibility data]

- 14. Relevant Concepts Exponential Mechanism : a technique for designing differentially private algorithms (McSherry & Talwar, 2007)
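A rough sketch of the exponential mechanism, in its commonly stated form: each candidate output is sampled with probability proportional to exp(ε · u / (2 · Δu)), where u is a utility score and Δu its sensitivity. The function name, the `counts` data, and the choice of "most common item" as the query are hypothetical and serve only as illustration.

```python
import math
import random


def exponential_mechanism(candidates, utility, epsilon, sensitivity, rng):
    """Sample one candidate with probability proportional to
    exp(epsilon * utility(c) / (2 * sensitivity))."""
    scores = [epsilon * utility(c) / (2.0 * sensitivity) for c in candidates]
    max_score = max(scores)  # subtract the max for numerical stability
    weights = [math.exp(s - max_score) for s in scores]
    return rng.choices(candidates, weights=weights, k=1)[0]


# Hypothetical query: privately report the most common item.
# Adding or removing one person changes any count by at most 1,
# so the sensitivity of the utility (the count) is 1.
counts = {"cat": 30, "dog": 25, "fish": 5}
rng = random.Random(0)
picks = [
    exponential_mechanism(list(counts), counts.get, epsilon=1.0,
                          sensitivity=1.0, rng=rng)
    for _ in range(2000)
]
print({item: picks.count(item) for item in counts})
```

High-utility candidates are chosen most of the time, but lower-utility ones keep a non-zero probability, which is what hides the influence of any single record.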

- 15. Differentially Private FL McMahan, Brendan, et al. "Learning Differentially Private Recurrent Language Models." (2018).
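As a simplified sketch of the idea behind differentially private federated averaging: each client's model update is clipped to a maximum L2 norm, the clipped updates are averaged on the server, and Gaussian noise scaled to the clipping norm is added to the average. This is an illustration only, assuming a fixed set of participating clients; it omits client sampling and privacy accounting, and all names and numbers are hypothetical rather than taken from the cited paper's code.

```python
import math
import random


def clip_update(update, clip_norm):
    """Scale an update down so its L2 norm is at most clip_norm."""
    norm = math.sqrt(sum(x * x for x in update))
    scale = min(1.0, clip_norm / norm) if norm > 0 else 1.0
    return [x * scale for x in update]


def dp_federated_round(global_weights, client_updates, clip_norm,
                       noise_multiplier, rng):
    """One server round: clip, average, add Gaussian noise, apply."""
    clipped = [clip_update(u, clip_norm) for u in client_updates]
    n = len(clipped)
    # One client changes the sum by at most clip_norm, so the noise on the
    # average has standard deviation noise_multiplier * clip_norm / n.
    sigma = noise_multiplier * clip_norm / n
    new_weights = []
    for i, w in enumerate(global_weights):
        average = sum(u[i] for u in clipped) / n
        new_weights.append(w + average + rng.gauss(0.0, sigma))
    return new_weights


# Hypothetical updates computed locally on three devices.
client_updates = [[0.1, -0.2], [3.0, 4.0], [0.0, 0.5]]
rng = random.Random(0)
weights = dp_federated_round([0.0, 0.0], client_updates, clip_norm=1.0,
                             noise_multiplier=1.0, rng=rng)
print(weights)
```

Clipping bounds how much any single device can move the shared model, and the noise masks what remains of that contribution; only updates, never raw data, leave the device.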

- 16. Tools & Libraries ● github.com/tensorflow/federated ● github.com/tensorflow/privacy ● github.com/uber/sql-differential-privacy ● github.com/IBM/differential-privacy-library

- 17. Other Privacy-preserving AI Concepts

- 18. ML on Encrypted Data [Diagram: End-User: Input Data → Encrypted Input → Third Party: Trained Model → Encrypted Prediction → End-User: Decrypted Prediction (e.g. "Benign Tumor")] ● The end-user encrypts her sensitive data and sends it to a third-party host. ● As the end-user owns the private key, the third party can decrypt neither the input nor the output prediction. ● The third party produces an encrypted prediction, which is returned to the end-user. ● Privacy is preserved in the entire pipeline for both inputs and outputs.

- 19. Homomorphic Encryption ● Homomorphic Encryption (HE) is a form of encryption that allows computation (e.g. multiplication and addition) on ciphertexts, generating an encrypted result which, when decrypted, matches the result of the same operations performed on the plaintext. ● Example: 3 + 5 = 8 (plain domain) corresponds to d8d4h… + 8ke3s1… = 1u3y7... (cipher domain) ● Note : Computations in the cipher domain are very costly in terms of speed and memory.
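The 3 + 5 = 8 example can be reproduced with a toy version of the Paillier cryptosystem. Note the swap: Paillier is only additively homomorphic (ciphertexts can be added, not multiplied together), so this is a narrower scheme than the general HE described on the slide. The primes below are tiny and insecure, chosen purely for illustration, and the function names are hypothetical.

```python
import math
import random


def generate_keypair(p, q):
    """Build a toy Paillier key pair from two distinct primes."""
    n = p * q
    lam = (p - 1) * (q - 1) // math.gcd(p - 1, q - 1)  # lcm(p-1, q-1)
    g = n + 1                  # common simple choice of generator
    mu = pow(lam, -1, n)       # modular inverse, needs Python 3.8+
    return (n, g), (lam, mu)


def encrypt(public_key, m, rng):
    """Encrypt integer m (0 <= m < n) with fresh randomness r."""
    n, g = public_key
    n_sq = n * n
    while True:
        r = rng.randrange(1, n)
        if math.gcd(r, n) == 1:
            break
    return (pow(g, m, n_sq) * pow(r, n, n_sq)) % n_sq


def decrypt(public_key, private_key, c):
    """Recover the plaintext from ciphertext c."""
    n, _ = public_key
    lam, mu = private_key
    x = pow(c, lam, n * n)
    return ((x - 1) // n * mu) % n


def add_encrypted(public_key, c1, c2):
    """Multiplying ciphertexts adds the underlying plaintexts."""
    n, _ = public_key
    return (c1 * c2) % (n * n)


rng = random.Random(0)
public_key, private_key = generate_keypair(293, 433)  # toy primes, NOT secure
c3 = encrypt(public_key, 3, rng)
c5 = encrypt(public_key, 5, rng)
c_sum = add_encrypted(public_key, c3, c5)  # computed without the private key
result = decrypt(public_key, private_key, c_sum)
print(result)  # 8, matching 3 + 5 in the plain domain
```

Even in this toy setting, every addition becomes a modular multiplication of much larger numbers, which hints at why computation in the cipher domain is costly.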

- 20. Membership Inference Attacks ● Given a black-box machine learning model and a data record, determining whether this record was part of the model’s training dataset was shown to be possible with extremely high accuracy [7]. ● As a result, we now know that simple query access to a black-box API that returns the model’s output on a given input can leak a significant amount of information about the individual data records on which the model was trained. ● An interesting insight: the accuracy of the inference attack increases with the number of classes.

- 21. Data Generation (Dar et al., Image synthesis in multi-contrast MRI with cGANs, 2019)

- 22. Data Generation (Hyland et al., Real-valued time series generation with recurrent cGANs, 2017)

- 23. Thank You!

Editor's Notes

- Models need to run on device : offline and quicker
