Determining Data Quality for the NOvA Experiment

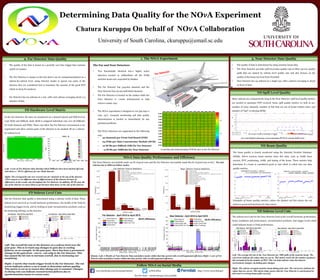

Chatura Kuruppu, on behalf of the NOvA Collaboration
University of South Carolina, ckuruppu@email.sc.edu

NOvA online: http://www-nova.fnal.gov · live data: nusoft.fnal.gov/nova/public · Facebook: www.facebook.com/novaexperiment/ · @NOvANuz

NOvA Data Quality Performance and Efficiency

So far, 79.7% of Near Detector runs and 86.2% of Far Detector runs have been marked good. The remaining bad subruns are attributed to different failure modes.

Bottom left: Six months of Near Detector data and the failure modes within that period, with overall and segmented efficiency.
Bottom right: One year of Far Detector data and the failure modes within that period, with overall and segmented efficiency.

1. The NOvA Experiment

Figure: The Far and Near Detectors.

• Two functionally identical, low-Z, highly active detectors sit 14 milliradians off the NuMI neutrino beam axis and are separated by 809 km.
• The Far Detector has 344,064 channels and the Near Detector has 20,192.
• The Far Detector is located on the surface, while the Near Detector is ~100 m underground to help remove cosmic rays.
• The NOvA experiment is designed to run 24 hours a day, 365 days a year. Constant monitoring and data quality determination are needed so that potential problems can be fixed immediately.
• The NOvA detectors are segmented hierarchically into channels, Front End Boards (FEBs), Data Concentrator Modules (DCMs), and DiBlocks.

2. Far Detector Data Quality

• The quality of the data is assessed with a periodic zero-bias trigger that contains 150 Hz of cosmics.
• The Far Detector is unique in that it can be compartmentalized subrun by subrun: detector masks exclude any parts of the detector that are considered bad, which maximizes the amount of good protons on target (POT) kept for analyses.
• The Far Detector has 64 subruns per run, each averaging about 2-3 minutes of data.

3. Near Detector Data Quality

• The quality of the data is determined using neutrino beam data.
• The Near Detector applies spill-level data quality cuts to filter out low-quality spills that the subrun-level cuts miss, and it also tracks the quality of the beam received from Fermilab.
• The Near Detector has 24 subruns per run, each averaging about an hour of data.

FD Hardware Level Matrix

In the Far Detector, hit rates are monitored at the channel (pixel) and FEB level in each DCM and DiBlock. Each DCM is assigned individual cuts over all DiBlocks for both channels and FEBs. These cuts segment the Far Detector environment and allow parts of the detector to be masked off subrun by subrun.

Left: A year of Far Detector data showing which DiBlocks have been masked off (red). The efficiency for the whole detector is ~99.4%.
Right: The averaged hit rate over several runs for channels at the top of the detector. NOvA expects different rates in different parts of the detector because the cosmic rate varies throughout the Far Detector. In addition, DCMs near the top of the detector are hit more often than those on the sides.

FD Subrun Level Cuts

Far Detector data quality is determined using a subrun's worth of data. The subrun-level cuts look at overall hardware performance, at the health of the DAQ (by monitoring trigger levels), and at basic reconstruction products in the detector, such as hit clustering (slicing).

Left: The overall hit rate in the detector at the subrun level over the past year. This tracks changes in gain, for example those caused by cooling failures. In the past year the gain was permanently changed from ~100 to ~150, in the Far Detector only. The resulting increase in sensitivity has raised the overall hit rate.
Right: A metric that tracks trigger levels in the Far Detector. The red arrow indicates where a cut is made to determine subrun quality. The cut ensures that the slicing rate is constant. Changes in slicing rate can indicate reconstruction failures due to synchronization issues or excess noise.

ND Spill Level Quality

Because subruns are comparatively long in the Near Detector, spill-level quality metrics are needed to maximize the POT received. The spill quality metrics include the number of noisy channels, the number of hits outside the beam time window, and the number of "bad" or missing DCMs.

ND Subrun Level Cuts

The subrun-level cuts for the Near Detector look at overall hardware performance, beam conditions and performance, reconstruction products, and trigger levels, which can indicate beam or DAQ performance.

Left: The average hit rate in the Near Detector per 1000 spills of the neutrino beam. The red arrows indicate the values that are cut on. This metric tracks the number of physics hits normalized by the number of POT. It helps indicate when the detector environment changes, including gain changes.
Right: The subrun-averaged start of the neutrino beam timing peak. The red arrows indicate the values that are cut on. This metric helps ensure that the Near Detector is synchronized in time and is receiving beam spills correctly.

ND Beam Quality

The beam quality is closely monitored using the Intensity Frontier Database (IFDB). NOvA receives beam metrics from this database, such as the NuMI horn current, the POT, and the position, width, and timing of the beam. These metrics determine whether the beam is considered good or bad, and the result is rolled into our data quality metrics.

Figure: Examples of beam quality metrics. The dashed red line shows the cut between good and bad beam for each metric.
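The spill-level selection described for the Near Detector can be illustrated with a minimal Python sketch. Everything specific here is an assumption made for illustration: the `Spill` fields, the function names, and in particular the threshold values are hypothetical placeholders, not NOvA's actual cut values or software.

```python
from dataclasses import dataclass

# Hypothetical thresholds, chosen only to make the example run.
MAX_NOISY_CHANNELS = 50
MAX_OUT_OF_TIME_HITS = 200
MAX_MISSING_DCMS = 0


@dataclass
class Spill:
    pot: float              # protons on target delivered in this spill
    noisy_channels: int     # number of noisy channels
    out_of_time_hits: int   # hits outside the beam time window
    missing_dcms: int       # "bad" or missing DCMs


def passes_spill_cuts(spill: Spill) -> bool:
    """Return True if the spill satisfies all three quality metrics."""
    return (
        spill.noisy_channels <= MAX_NOISY_CHANNELS
        and spill.out_of_time_hits <= MAX_OUT_OF_TIME_HITS
        and spill.missing_dcms <= MAX_MISSING_DCMS
    )


def good_pot_fraction(spills) -> float:
    """Fraction of the delivered POT that sits in spills passing the cuts."""
    total = sum(s.pot for s in spills)
    if total == 0:
        return 0.0
    good = sum(s.pot for s in spills if passes_spill_cuts(s))
    return good / total


# Four made-up spills: the second is too noisy, the third has a missing DCM.
spills = [
    Spill(pot=2.0e13, noisy_channels=3, out_of_time_hits=40, missing_dcms=0),
    Spill(pot=2.0e13, noisy_channels=120, out_of_time_hits=35, missing_dcms=0),
    Spill(pot=2.0e13, noisy_channels=2, out_of_time_hits=50, missing_dcms=1),
    Spill(pot=2.0e13, noisy_channels=5, out_of_time_hits=60, missing_dcms=0),
]

print(f"good POT fraction: {good_pot_fraction(spills):.2f}")
```

Cutting spill by spill means that one bad spill costs only its own POT, which matters when a single Near Detector subrun spans about an hour of data.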
Detector segmentation:

• 32 channels per Front End Board (FEB)
• 64 FEBs per Data Concentrator Module (DCM)
• 12 DCMs per DiBlock (DB) in the Far Detector
• ~4 DCMs per DiBlock in the Near Detector

Figure: A real-time plot of FEB hit rates in the Far Detector.
Figure: An event display showing a non-reporting DCM in a beam spill event.
Figure: Far Detector, Near Detector, and NDOS Prototype.
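The segmentation figures can be cross-checked against the channel counts quoted on this poster (344,064 for the Far Detector, 20,192 for the Near Detector). The short Python sketch below does the arithmetic. It assumes that every Far Detector DCM is fully populated with 64 FEBs; the constant names are chosen here for illustration.

```python
CHANNELS_PER_FEB = 32
FEBS_PER_DCM = 64
DCMS_PER_FD_DIBLOCK = 12

FD_CHANNELS = 344_064
ND_CHANNELS = 20_192

# Channels served by one fully populated DCM and by one Far Detector DiBlock.
channels_per_dcm = CHANNELS_PER_FEB * FEBS_PER_DCM
channels_per_fd_diblock = channels_per_dcm * DCMS_PER_FD_DIBLOCK

# Number of Far Detector DiBlocks implied by the quoted channel count.
fd_diblocks, fd_remainder = divmod(FD_CHANNELS, channels_per_fd_diblock)

# Number of Near Detector FEBs implied by the quoted channel count,
# and the equivalent number of fully populated DCMs.
nd_febs, nd_remainder = divmod(ND_CHANNELS, CHANNELS_PER_FEB)
nd_full_dcm_equivalent = ND_CHANNELS / channels_per_dcm

print(channels_per_dcm)            # 2048
print(channels_per_fd_diblock)     # 24576
print(fd_diblocks, fd_remainder)   # 14 0
print(nd_febs, nd_remainder)       # 631 0
print(nd_full_dcm_equivalent)      # 9.859375
```

Under that assumption the Far Detector count divides exactly into 14 DiBlocks. The Near Detector count corresponds to 631 FEBs, which is not a whole number of full DCMs. This suggests that some Near Detector DCMs carry fewer than 64 FEBs, in line with the approximate "~4 DCMs per DiBlock" figure.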