Outlier Detection and Impact

- 2. Introduction
  1. Who cares?
  2. What is an Outlier?
  3. How does it impact regression?
  4. What causes Outliers?
  5. How can we detect them?
  6. What to do with Outliers?
  7. Recap: Why/when do they matter?

- 3. Why Market Forecasts Keep Missing the Mark “Fish gotta swim, birds gotta fly and analysts and market strategists gotta try predicting what stocks will do every year. But you don't gotta act on those predictions -- at least not before you ask how likely they are to hit the bullseye.” The Wall Street Journal (2009), http://www.wsj.com/news/articles/SB123275782424412007

- 4. Significant Outliers If you take the daily returns of the Dow from 1900 to 2008 and subtract the 10 best days, you end up with about 60 percent less money than if you had stayed invested the entire time. If you remove the worst 10 days from history, you end up with three times more money.

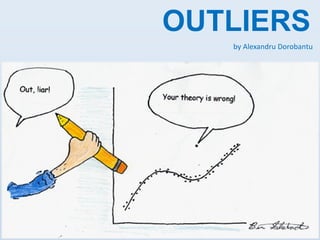

- 5. What is an Outlier? data point that is far outside the norm for a variable or population; observation that “deviates so much from other observations as to arouse suspicions that it was generated by a different mechanism”; values that are “dubious in the eyes of the researcher”.

- 6. How outliers impact ordinary regression Least squares minimizes the sum of squared residuals, so a single extreme point can dominate the fit. Even worse, with multiple regression, an outlier in x-space may not look particularly unusual for any single x-variable. If such a point is possible, using least squares regression on the data is potentially very risky.
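
To make the risk concrete, here is a minimal sketch of how one extreme point changes a least squares line. The data are made up for illustration (six points exactly on y = 2x + 1, plus one added point), and `ols_fit` is a hypothetical helper name, not something from the slides.

```python
from statistics import mean

def ols_fit(xs, ys):
    """Return (slope, intercept) of the least squares line through the points."""
    x_bar, y_bar = mean(xs), mean(ys)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, y_bar - slope * x_bar

# Illustrative data (an assumption): six points lying exactly on y = 2x + 1.
xs = [1, 2, 3, 4, 5, 6]
ys = [2 * x + 1 for x in xs]

clean_slope, clean_intercept = ols_fit(xs, ys)

# Add one point that is extreme in x and far below the line.
outlier_slope, outlier_intercept = ols_fit(xs + [20], ys + [5])

print(f"without outlier: slope={clean_slope:.2f}, intercept={clean_intercept:.2f}")
print(f"with outlier:    slope={outlier_slope:.2f}, intercept={outlier_intercept:.2f}")
```

With this particular data, the single added point is enough to pull the slope from 2 down to roughly zero.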

- 8. Data errors Outliers are often caused by human error, such as errors in data collection, recording, or entry.

- 9. Intentional or motivated mis-reporting • Social desirability and self-presentation motives can be powerful. • This can also happen for obvious reasons when data are sensitive (e.g., teenagers under-reporting drug or alcohol use, mis-reporting of sexual behavior). http://www.livescience.com/7038-men-report-sex-partners-women.html

- 10. Sampling error It is possible that a few members of a sample were inadvertently drawn from a different population than the rest of the sample.

- 11. Standardization failure If something anomalous happened during a particular subject’s experience it will influence the study outcome.

- 12. Faulty distributional assumptions Incorrect assumptions about the distribution of the data can also lead to the presence of suspected outliers.

- 13. Legitimate cases sampled from the correct population It is possible that an outlier can come from the population being sampled legitimately through random chance. It is important to note that sample size plays a role in the probability of outlying values.
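
The role of sample size can be sketched with a short calculation. The example assumes normally distributed data and uses "more than 3 standard deviations from the mean" as the definition of an outlying value; both are assumptions made for illustration.

```python
import math

# Two-sided tail probability of a standard normal beyond 3 standard deviations.
p_beyond_3sd = math.erfc(3 / math.sqrt(2))  # about 0.0027

def prob_at_least_one(n, p=p_beyond_3sd):
    """Probability that n independent draws contain at least one such value."""
    return 1 - (1 - p) ** n

for n in (10, 100, 1000, 10000):
    print(f"n={n:>5}: P(at least one |z| > 3) = {prob_at_least_one(n):.3f}")
```

Under these assumptions, a legitimate 3-SD value is rare in a sample of 10 but close to certain in a sample of several thousand.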

- 14. Outliers as potential focus of inquiry We don't necessarily need to remove the outliers. Sometimes finding the outliers is the purpose of the study (e.g., fraud identification, immunology advancements). http://www.medicalnewstoday.com/articles/241705.php

- 15. Identification of Outliers Simple rules of thumb: data points three or more standard deviations from the mean; Mahalanobis’ distance; Cook’s D.
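
Two of these rules can be sketched in a few lines. The data below are invented for illustration, the function names are hypothetical, and the Cook's D version covers only simple (one-predictor) regression, using the standard formula D_i = e_i^2 / (p * MSE) * h_i / (1 - h_i)^2 with p = 2.

```python
from statistics import mean, stdev

def three_sd_outliers(values, threshold=3.0):
    """Return the values lying `threshold` or more sample SDs from the mean."""
    m, s = mean(values), stdev(values)
    return [v for v in values if abs(v - m) / s >= threshold]

def cooks_distances(xs, ys):
    """Cook's D for each point of a simple linear regression of ys on xs."""
    n, p = len(xs), 2  # p = number of fitted coefficients (slope, intercept)
    x_bar, y_bar = mean(xs), mean(ys)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    slope = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys)) / sxx
    intercept = y_bar - slope * x_bar
    residuals = [y - (intercept + slope * x) for x, y in zip(xs, ys)]
    mse = sum(e ** 2 for e in residuals) / (n - p)
    leverages = [1 / n + (x - x_bar) ** 2 / sxx for x in xs]
    return [e ** 2 / (p * mse) * h / (1 - h) ** 2
            for e, h in zip(residuals, leverages)]

# Illustrative sample (an assumption): 20 ordinary values and one extreme one.
data = [10, 12, 11, 9, 10, 13, 8, 11, 10, 12,
        9, 11, 10, 12, 10, 9, 11, 10, 12, 11, 100]
flagged = three_sd_outliers(data)
print("3-SD rule flags:", flagged)

# Illustrative regression data (an assumption): roughly y = 2x, last point off.
xs = [1, 2, 3, 4, 5, 6, 7, 8]
ys = [2.1, 3.9, 6.2, 7.8, 10.1, 12.0, 13.9, 30.0]
distances = cooks_distances(xs, ys)
most_influential = distances.index(max(distances))
print("largest Cook's D at index", most_influential)
```

One caveat about the 3-SD rule: the outlier itself inflates the standard deviation, so in very small samples even an extreme value may not reach the threshold.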

- 19. Mahalanobis’ distance That doesn't really look like a circle, does it? That's because this picture is distorted (as evidenced by the different spacings among the numbers on the two axes).

- 20. Mahalanobis’ distance Let's redraw it with the axes in their proper orientations--left to right and bottom to top--and with a unit aspect ratio so that one unit horizontally really does equal one unit vertically: You measure the Mahalanobis distance as Euclidean distance in this picture rather than in the original.
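
As a rough sketch of the idea, the two-dimensional Mahalanobis distance can be computed directly from the sample covariance matrix as sqrt((x - mean)^T S^-1 (x - mean)). The points below are invented for illustration: eight lie near the diagonal, and the last one is unremarkable in each coordinate taken separately but sits far off the diagonal. `mahalanobis_2d` is a hypothetical helper name.

```python
import math
from statistics import mean

def mahalanobis_2d(points):
    """Mahalanobis distance of each 2-D point from the sample mean."""
    xs, ys = zip(*points)
    n = len(points)
    mx, my = mean(xs), mean(ys)
    # Entries of the sample covariance matrix [[sxx, sxy], [sxy, syy]].
    sxx = sum((x - mx) ** 2 for x in xs) / (n - 1)
    syy = sum((y - my) ** 2 for y in ys) / (n - 1)
    sxy = sum((x - mx) * (y - my) for x, y in points) / (n - 1)
    det = sxx * syy - sxy ** 2
    distances = []
    for x, y in points:
        dx, dy = x - mx, y - my
        # (dx, dy) S^-1 (dx, dy)^T, written out using the 2x2 inverse.
        d2 = (syy * dx * dx - 2 * sxy * dx * dy + sxx * dy * dy) / det
        distances.append(math.sqrt(d2))
    return distances

# Illustrative data (an assumption); the last point is the off-diagonal one.
points = [(1, 1.2), (2, 1.9), (3, 3.1), (4, 4.2), (5, 4.8),
          (6, 6.1), (7, 7.0), (8, 7.9), (2, 7.0)]
distances = mahalanobis_2d(points)
for point, d in zip(points, distances):
    print(point, round(d, 2))
```

The last point has x = 2 and y = 7, both well inside the range of the other points, yet it gets the largest distance because it breaks the correlation between the two variables.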

- 21. Dealing with Outliers Removal: 2, 3, 4, 5, 6, 10, 100 → 2, 3, 4, 5, 6, 10. Transformations (e.g., taking the base-10 log): 2, 3, 4, 5, 6, 10, 100 → 0.30, 0.48, 0.60, 0.70, 0.78, 1, 2. Truncation: 2, 3, 4, 5, 6, 10, 100 → 2, 3, 4, 5, 6, 10, 10.
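
The three options can be sketched on the slide's sample. The cutoff of 100 for removal and the cap of 10 for truncation are choices made for this illustration, not general recommendations.

```python
import math

data = [2, 3, 4, 5, 6, 10, 100]

# Removal: drop the suspected outlier entirely (cutoff chosen for this example).
removed = [v for v in data if v < 100]

# Transformation: the base-10 logarithm compresses the large value.
logged = [round(math.log10(v), 2) for v in data]

# Truncation: cap values at a ceiling (10 here, the next-largest value).
cap = 10
truncated = [min(v, cap) for v in data]

print(removed)
print(logged)
print(truncated)
```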

- 22. Robust Methods – Trimmed mean Calculated by temporarily eliminating extreme observations at both ends of the sample (typically 10% to 25% from each end). Example: 1, 6, 12, 14, 20, 24, 36, 100 has regular mean 26.62; trimming 25% from each end leaves 12, 14, 20, 24, with trimmed mean 17.5.
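
A minimal sketch of the calculation, assuming the trimming proportion applies to each end and is rounded down to a whole number of observations (`trimmed_mean` is a hypothetical helper name):

```python
from statistics import mean

def trimmed_mean(values, proportion):
    """Mean after dropping `proportion` of the observations from each end."""
    ordered = sorted(values)
    k = int(len(ordered) * proportion)  # observations dropped per end
    return mean(ordered[k:len(ordered) - k])

data = [1, 6, 12, 14, 20, 24, 36, 100]
regular = mean(data)
trimmed = trimmed_mean(data, 0.25)
print(regular, trimmed)
```

With eight observations and a proportion of 0.25, two values are dropped from each end and the remaining four are averaged.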

- 23. Robust Methods – Winsorized mean The highest and lowest observations are temporarily censored and replaced with the adjacent values from the remaining data. Example: 1, 6, 12, 14, 20, 24, 36, 100 (regular mean 26.62) becomes 6, 6, 12, 14, 20, 24, 36, 36, with Winsorized mean 19.25.
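
The same example as a short sketch, assuming `k` observations are replaced at each end (`winsorized_mean` is a hypothetical helper name):

```python
from statistics import mean

def winsorized_mean(values, k=1):
    """Mean after replacing the k lowest and k highest values with their neighbours."""
    ordered = sorted(values)
    low, high = ordered[k], ordered[len(ordered) - k - 1]
    # Clamp every value into the range [low, high].
    clipped = [min(max(v, low), high) for v in ordered]
    return mean(clipped)

data = [1, 6, 12, 14, 20, 24, 36, 100]
winsorized = winsorized_mean(data, k=1)
print(winsorized)
```

Unlike trimming, Winsorizing keeps the sample size unchanged, which is why the result differs from the trimmed mean of the same data.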

- 24. Robust Methods – LTS The Least Trimmed Squares (LTS) method minimizes the sum of squared residuals over a subset of k of the n points, namely the k points with the smallest squared residuals. The n-k points that are not used do not influence the fit.
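
For a tiny data set, LTS can be sketched by brute force: fit ordinary least squares to every subset of k points and keep the fit with the smallest sum of squared residuals. This is only practical for small n, since the number of subsets grows quickly; practical implementations use faster approximate searches. The data and helper names below are assumptions made for illustration.

```python
from itertools import combinations
from statistics import mean

def ols_fit(xs, ys):
    """Return (slope, intercept) of the least squares line through the points."""
    x_bar, y_bar = mean(xs), mean(ys)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    slope = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys)) / sxx
    return slope, y_bar - slope * x_bar

def lts_fit(xs, ys, k):
    """Exhaustive Least Trimmed Squares: best OLS fit over all k-point subsets."""
    best = None
    for subset in combinations(range(len(xs)), k):
        sub_x = [xs[i] for i in subset]
        sub_y = [ys[i] for i in subset]
        slope, intercept = ols_fit(sub_x, sub_y)
        sse = sum((y - (intercept + slope * x)) ** 2
                  for x, y in zip(sub_x, sub_y))
        if best is None or sse < best[0]:
            best = (sse, slope, intercept)
    return best[1], best[2]

# Illustrative data (an assumption): roughly y = 2x, with the last point off.
xs = [1, 2, 3, 4, 5, 6, 7, 8]
ys = [2.1, 3.9, 6.2, 7.8, 10.1, 12.0, 13.9, 30.0]

ols_slope, _ = ols_fit(xs, ys)
lts_slope, lts_intercept = lts_fit(xs, ys, k=6)
print(f"OLS slope: {ols_slope:.2f}, LTS slope: {lts_slope:.2f}")
```

Here the ordinary fit is pulled toward the last point, while the LTS fit with k = 6 ignores it and stays close to the slope of the remaining data.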

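- 25. Robust Methods – LMS The Least Median of Squares (LMS) method replaces the mean of the squared residuals with their median, which is much less sensitive to extreme values and so gives a more robust estimator. The same effect shows up with a plain median: for 1, 6, 12, 14, 20, 24, 36, 100 the regular mean is 26.62, while the median is (14+20)/2 = 17.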
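
A rough sketch of LMS for a single predictor: try the line through every pair of points and keep the one whose squared residuals have the smallest median. This pairwise search is a common approximation rather than an exact minimizer, and the data and the name `lms_fit` are assumptions made for illustration.

```python
from itertools import combinations
from statistics import median

def lms_fit(xs, ys):
    """Approximate Least Median of Squares line via all two-point candidate lines."""
    best = None
    for i, j in combinations(range(len(xs)), 2):
        if xs[i] == xs[j]:
            continue  # a vertical line cannot be written as y = a + b*x
        slope = (ys[j] - ys[i]) / (xs[j] - xs[i])
        intercept = ys[i] - slope * xs[i]
        criterion = median((y - (intercept + slope * x)) ** 2
                           for x, y in zip(xs, ys))
        if best is None or criterion < best[0]:
            best = (criterion, slope, intercept)
    return best[1], best[2]

# Illustrative data (an assumption): roughly y = 2x, with the last point off.
xs = [1, 2, 3, 4, 5, 6, 7, 8]
ys = [2.1, 3.9, 6.2, 7.8, 10.1, 12.0, 13.9, 30.0]

lms_slope, lms_intercept = lms_fit(xs, ys)
print(f"LMS slope: {lms_slope:.2f}, intercept: {lms_intercept:.2f}")
```

Because the median ignores the size of the largest residuals, the extreme last point has no pull on which candidate line wins.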

- 26. Recap: Why do they matter? Outliers can: present false information (data errors, etc.); create new questions (new clusters, etc.); ruin predictions (increase error-proneness); offer insights (anomalies, examples, etc.); announce issues (fraud, etc.).

- 27. Recap: When do they matter? Always! It’s just that sometimes you NEED to have them and sometimes you NEED NOT to have them.

- 28. Thank you for listening! Hope I didn’t waste your time!

- 29. References
  1. The power of outliers (and why researchers should ALWAYS check for them) – Jason W. Osborne and Amy Overbay, North Carolina State University
  2. Best Practices in Quantitative Methods – edited by Jason Osborne
  3. Outlier detection using regression – http://stats.stackexchange.com
  4. Fast linear regression robust to outliers – http://stats.stackexchange.com
  5. Explanation of the Mahalanobis distance – http://stats.stackexchange.com