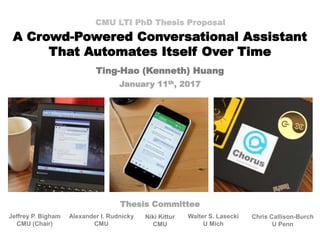

A Crowd-Powered Conversational Assistant That Automates Itself Over Time

- 1. A Crowd-Powered Conversational Assistant That Automates Itself Over Time. CMU LTI PhD Thesis Proposal. Ting-Hao (Kenneth) Huang. January 11th, 2017. Thesis Committee: Jeffrey P. Bigham, CMU (Chair); Alexander I. Rudnicky, CMU; Chris Callison-Burch, U Penn; Walter S. Lasecki, U Mich; Niki Kittur, CMU

- 2. Intro | Improving Chorus | Deployment | Automating Over Time. Intelligent Conversational Assistants

- 3. Challenges of Open Conversation • Combining multiple dialog systems • DialPort (Zhao, et al., 2016) • Adapting a model to many other domains • Walker, et al., 2007; Sun, et al., 2016 • Chit-chat system • Hold social conversations (Banchs, et al., 2012) • Still a very hard problem… – Alexa Prize: $2.5 Million • “… achieves the grand challenge of conversing coherently and engagingly with humans on popular topics for 20 minutes.”

- 4. Chorus: A Crowd-powered Conversation Assistant • A group of crowd workers collectively hold a conversation by: 1. Proposing responses 2. Voting on responses 3. Taking notes • Reward points for each action. Lasecki, W. S.; Wesley, R.; Nichols, J.; Kulkarni, A.; Allen, J. F.; and Bigham, J. P. 2013. Chorus: A crowd-powered conversational assistant. In UIST 2013, UIST ’13, 151–162.

- 5. What kind of conversations can Chorus have?

- 6. Gift Suggestion. User: “Can you suggest some birthday present for one of my friend?” Chorus: “Sure! What types of things does your friend like?” User: “female, computer science PhD student in Texas”, “we're going to visit her this weekend from Pittsburgh”, “She's in Austin” Chorus: “Does she have any favorite TV shows, movies, or video games?”

- 7. Travel Planning. User: “How many suitcases can I take on a flight from the US to Israel?” Chorus: “Let me check”, “Can I ask you from where are you planning to board the flight? and which air services are you using?” User: “Pittsburgh” Chorus: “with which company are you flying?”

- 8. Arbitrary Question. Chorus: “How can I help you?” User: “Who was the prime minister of Australia when JFK was assassinated” Chorus: “okay wait a sec”, “Let me check it”, “Robert Menzies”

- 9. A Top-Down Approach. Spectrum of systems: Chorus, Minor Automated Assistance, Hybrid System, Fully-Automated System. Cost: High to Low. Latency: High to Low.

- 10. Outline 1. Intro 2. Part I: Improving Chorus 3. Part II: Deployment 4. Part III: Automating Over Time 5. Conclusion

- 11. Outline 1. Intro 2. Part I: Improving Chorus – InstructableCrowd: Creating IF-THEN Rules via Conversation with the Crowd (Huang et al., CHI EA 2016) 3. Part II: Deployment 4. Part III: Automating Over Time 5. Conclusion. Ting-Hao K. Huang, Amos Azaria, Jeffrey P Bigham. InstructableCrowd: Creating IF-THEN Rules via Conversations with the Crowd. In CHI LBW 2016. (Best Paper Honorable Mention) Icon made by Pixel Buddha from www.flaticon.com

- 12. A Rule = IF(s) + THEN(s). Image credits: https://commons.wikimedia.org/wiki/File:IFTTT_Logo.svg, https://www.flickr.com/photos/cjmartin/9261707401, https://www.flickr.com/photos/paperon/15641138784, https://www.flickr.com/photos/chriscoyier/16673560329, http://www.publicdomainpictures.net/view-image.php?image=23182

- 13. InstructableCrowd: Creating IF-THEN Rules via Conversation with the Crowd

- 14. InstructableCrowd Overview

- 15. Worker Interface

- 16. User Study • 10 participants, 6 scenarios, 10 workers per trial • Evaluation – App Selection (P/R/F1) – Attribute Filling (Accuracy)

- 17. Evaluation: App Selection (P/R/F1), Attribute Filling (Accuracy)

- 18. What did we learn? • A crowd-powered conversational interface can be used to create IF-THEN rules. User → IF(s) THEN(s)

- 19. Outline 1. Intro 2. Part I: Improving Chorus 3. Part II: Deployment – Chorus Deployment (Huang et al., HCOMP 2016) – Chorus Dataset (Proposed) 4. Part III: Automating Over Time 5. Conclusion. Ting-Hao K. Huang, Walter S. Lasecki, Amos Azaria, Jeffrey P. Bigham. "Is there anything else I can help you with?": Challenges in Deploying an On-Demand Crowd-Powered Conversational Agent. In Proceedings of Conference on Human Computation & Crowdsourcing (HCOMP 2016), 2016, Austin, TX, USA.

- 20. We deployed Chorus • Launched on May 20th, 2016. • 132 users used it during 1,028 conversational sessions • TalkingToTheCrowd.org

- 21. System Overview

- 22. How to recruit workers fast on-demand?

- 23. How to recruit workers fast on-demand? • Two Common Practices – Start recruiting on-demand (Bigham, et al., 2010) – Keep workers on-call (Retainer) (Bernstein, et al., 2011) • Both are designed for short tasks

- 24. Retainer Model (timeline figure): Conversation, Conv. Ends, Wait in Retainer, Conv. Starts, Wait in Retainer. Workers’ waiting time costs money.

- 25. Chorus’ Recruiting Method (timeline figure): Conversation 1, Conversation 2, Post HIT, Fully Occupied, Conv. 1 Ends, Post HIT, Wait in Retainer

- 26. Is this recruiting method fast enough? • Avg first crowd response time = 88.351 sec • 56.08% of first crowd responses arrive within 1 min

- 27. What challenges did we identify?

- 28. Challenges Identified • Malicious workers & users • Identifying the end of a conversation • When workers’ consensus is not enough

- 29. Challenges Identified • Malicious workers & users • Identifying the end of a conversation • When workers’ consensus is not enough

- 30. Malicious Users • Abusive Language – Sexual content – Profanity – Hate speech – Threats of criminal acts • Solutions – Word detection

- 31. What did we learn? • Deploying an on-demand real-time crowd-powered agent is feasible. • Basic Statistics – Avg session duration = 10.493 min (SD = 14.139 min) – Avg #messages per session = 17.877 (SD = 24.158) – Avg cost per conversation = $2.48 (SD = $0.99)

- 32. Outline 1. Intro 2. Part I: Improving Chorus 3. Part II: Deployment – Chorus Deployment (Huang et al., HCOMP 2016) – Chorus Dataset (Proposed) 4. Part III: Automating Over Time 5. Conclusion

- 33. Chorus Dataset (Proposed) • Goal: Future Automation – Automatic response generation & selection – Dialog Learning (state tracking) • Data – Message, Vote (upvote / downvote), Note • Data Pre-processing – Anonymization – Inappropriate Content – Spamming Messages – Conversation Segmentation

- 34. Outline 1. Intro 2. Part I: Improving Chorus 3. Part II: Deployment 4. Part III: Automating Over Time – Guardian: A Crowd-Powered Dialog System for Web APIs (Huang et al., HCOMP 2015; Huang et al., HCOMP WIP 2014) – Automate Chorus over time (Proposed) 5. Conclusion. Ting-Hao K. Huang, Walter S. Lasecki, Jeffrey P. Bigham. Guardian: A Crowd-Powered Spoken Dialog System for Web APIs. In HCOMP 2015. Ting-Hao K. Huang, Walter S. Lasecki, Alan L. Ritter, Jeffrey P. Bigham. Combining Non-Expert and Expert Crowd Work to Convert Web APIs to Dialog Systems. HCOMP WIP 2014.

- 35. Empower Chorus with Multiple Dialog Systems

- 36. How to build a set of dialog systems quickly?

- 37. Use Web APIs to Empower Chorus: 16,583+ APIs

- 38. Guardian: A Crowd-Powered Dialog System for Web APIs. User: “Hi, I’m in San Diego. Any Chinese restaurants here?” (1) Talk and Extract Parameter: term = Chinese, location = San Diego. (2) Call Web API: Yelp Search API 2.0 returns JSON { ... "name": "Mandarin Wok Restaurant", ... "address": ["4227 Balboa Ave", ...], … }. (3) Interpret Result to User: “Mandarin Wok Restaurant is good! It’s on 4227 Balboa Ave.”

- 39. How to convert a Web API to a conversational agent? User: “Hi, I’m in San Diego. Any Chinese restaurants here?” Parameters: term, location. Which parameters to use? How to extract parameters?

- 40. How to convert a Web API to a conversational agent? User: “Hi, I’m in San Diego. Any Chinese restaurants here?” Parameters: term, location. Which parameters to use? How to extract parameters?

- 41. Select Parameter: Step (1): Collect Questions. Pipeline: Yelp API, Question Collection. Example question/answer pairs: “What do you want to eat?” / “I like Chinese food.”; “Which city are you in?” / “I’m in Pittsburgh.”; “Is it dinner or lunch?” / “Dinner.”; ...

- 42. Select Parameter: Step (2): Filter Parameters. Pipeline: Yelp API, Question Collection, Parameter Filtering. Parameters shown: offset, term, location, sw_latitude, sw_longitude, category_filter. Example question/answer pairs as on the previous slide.

- 43. Select Parameter: Step (3): Parameter-Question Matching. Pipeline: Yelp API, Question Collection, Parameter Filtering, Question-Parameter Matching. Parameters shown: offset, term, location, sw_latitude, sw_longitude, category_filter. Collected questions are matched to location, term, and category_filter, which are labelled “Better Parameter”.

- 44. How to convert a Web API to a conversational agent? User: “Hi, I’m in San Diego. Any Chinese restaurants here?” Parameters: term, location. Which parameters to use? How to extract parameters?

- 45. Extract Parameters: Dialog ESP Game. User: “Hi, I’m in San Diego.” Recruited players answer under a time constraint; answers are aggregated: Location = San Diego

- 46. Aggregate Method 1: ESP + 1st. Two cases: ESP answer matches; ESP answers do NOT match

- 47. Aggregate Method 2: 1st Only. Two cases: ESP answer matches; ESP answers do NOT match

- 48. Experiment • Data – Airline Travel Information System (ATIS) • Class A: Context Independent (simple) • Class D: Context Dependent • Class X: Unevaluable • Settings – Number of workers = 10 – Time constraint = 20 seconds – 2 aggregate methods – Using Amazon Mechanical Turk

- 49. How good? How fast? [Figure: two bar charts over Class A, Class D, and Class X. Left: F1-score (0 to 1) for CRF, 1st only, and ESP + 1st. Right: response time in seconds (0 to 9) for 1st only and ESP + 1st.] F1-score = 0.8 (Class D); response time < 9 sec (ESP + 1st)

- 50. Guardian: A Crowd-Powered Dialog System for Web APIs. User: “Hi, I’m in San Diego. Any Chinese restaurants here?” (1) Talk and Extract Parameter: term = Chinese, location = San Diego. (2) Call Web API: Yelp Search API 2.0 returns JSON { ... "name": "Mandarin Wok Restaurant", ... "address": ["4227 Balboa Ave", ...], … }. (3) Interpret Result to User: “Mandarin Wok Restaurant is good! It’s on 4227 Balboa Ave.”

- 51. End-to-end Evaluation (TCR)

  | Web API Task | API Only | API + Crowd Recover | Domain Referenced |
  | --- | --- | --- | --- |
  | Find Chinese restaurants in Pittsburgh. | 9 / 10 | 10 / 10 | 0.96 |
  | Check current weather by using a zip code. | 9 / 10 | 9 / 10 | 0.94 |
  | Find information of “Titanic”. | 6 / 10 | 10 / 10 | 0.88 |

- 52. What did we learn? • Using a non-expert crowd to convert Web APIs to dialog systems is feasible: Define Parameters, Extract Parameters

- 53. Outline 1. Intro 2. Part I: Improving Chorus 3. Part II: Deployment 4. Part III: Automating Over Time – Guardian: A Crowd-Powered Dialog System for Web APIs (Huang et al., HCOMP 2015; Huang et al., HCOMP WIP 2014) – Automate Chorus over time (Proposed) 5. Conclusion

- 54. Empower Chorus with Multiple Dialog Systems

- 55. Initial Chorus

- 56. Automatic Responder Selection • Label: Upvote/Downvote • Feature: • Conversation content • Previously selected bot • Generated text • End-user’s responses • …

- 57. Automatic Response Voting (added on top of Automatic Responder Selection) • Label: Upvote/Downvote • Feature: • Conversation content • Previously selected bot • Metadata of the bot • Other response candidates • …

- 58. Adjusting Worker’s Workload (added on top of Automatic Response Voting and Automatic Responder Selection) • Bootstrapping • Bot vs. workers • Competing for votes

- 59. Preliminary Results • 3 automatic bots • Randomly selected each turn

- 60. Preliminary Results (Cont.) Humans are good. Auto bots receive many more downvotes.

- 61. Conclusion • What did we do? – InstructableCrowd (CHI EA 2016) • Enabling Chorus to create IF-THEN rules – Chorus Deployment (HCOMP 2016) • Chorus is deployable – Guardian (HCOMP 2015; HCOMP WIP 2014) • Converting a Web API to a dialog system • What will we do? – Automate Chorus over time – Release Chorus dataset

- 62. Contributions: Fully-Automated System, Hybrid System, Minor Automated Assistance; Deploying Chorus; Automate Chorus Over Time; Data for Future Automation

- 63. Timeline • January - March 2017: Automating response voting • March - May 2017: Automating responder selection • May - September 2017: Automating dynamic workload assignment • September - December 2017: Chorus Dataset • September - December 2017: Thesis writing • Spring 2018: Thesis Defense

- 64. Q&A TalkingToTheCrowd.org

- 65. Reference • Zhao, T., Lee, K., & Eskenazi, M. (2016). DialPort: Connecting the Spoken Dialog Research Community to Real User Data. arXiv preprint arXiv:1606.02562. • Banchs, R. E., & Li, H. (2012, July). IRIS: a chat-oriented dialogue system based on the vector space model. In Proceedings of the ACL 2012 System Demonstrations (pp. 37-42). Association for Computational Linguistics. • Walker, M. A., Stent, A., Mairesse, F., & Prasad, R. (2007). Individual and domain adaptation in sentence planning for dialogue. Journal of Artificial Intelligence Research, 30, 413-456. • Sun, M. (2016). Adapting Spoken Dialog Systems Towards Domains and Users. Doctoral dissertation, YAHOO! Research. • Bigham, J. P., Jayant, C., Ji, H., Little, G., Miller, A., Miller, R. C., ... & Yeh, T. (2010, October). VizWiz: nearly real-time answers to visual questions. In Proceedings of the 23rd annual ACM symposium on User interface software and technology (pp. 333-342). ACM. • Bernstein, M. S., Brandt, J., Miller, R. C., & Karger, D. R. (2011, October). Crowds in two seconds: Enabling realtime crowd-powered interfaces. In Proceedings of the 24th annual ACM symposium on User interface software and technology (pp. 33-42). ACM.

- 66. Publication List 1. "Is there anything else I can help you with?": Challenges in Deploying an On-Demand Crowd-Powered Conversational Agent. Ting-Hao K. Huang, Walter S. Lasecki, Amos Azaria, Jeffrey P. Bigham. In Proceedings of Conference on Human Computation & Crowdsourcing (HCOMP 2016), 2016, Austin, TX, USA. 2. Guardian: A Crowd-Powered Spoken Dialog System for Web APIs. Ting-Hao K. Huang, Walter S. Lasecki, Jeffrey P. Bigham. In Conference on Human Computation & Crowdsourcing (HCOMP 2015), pages 62–71, November, 2015, San Diego, USA. 3. InstructableCrowd: Creating IF-THEN Rules via Conversations with the Crowd. Ting-Hao K. Huang, Amos Azaria, Jeffrey P Bigham. In CHI '16 Late-Breaking Work on Human Factors in Computing Systems (CHI LBW 2016), May, 2016, San Jose, CA, USA. (Best Paper Honorable Mention Award) 4. Combining Non-Expert and Expert Crowd Work to Convert Web APIs to Dialog Systems. Ting-Hao K. Huang, Walter S. Lasecki, Alan L. Ritter, Jeffrey P. Bigham. Work-in-Progress paper in the Proceedings of Conference on Human Computation & Crowdsourcing (HCOMP WIP 2014), pages 22-23, November 2-4, 2014, Pittsburgh, USA.

- 67. Backup Slides

- 68. 4 Conditions: 1. User Only 2. Crowd Only 3. Crowd + User 4. Crowd Voting

- 69. Worker Interface

- 70. Trade-offs (Class A) [Figure: three plots. (1) Avg. response time (sec) vs. number of players and (2) F1-score vs. number of players, each for ESP + 1st and 1st only under 20 sec and 15 sec time constraints. (3) F1-score vs. avg. response time (sec) for 5 to 10 players, ESP + 1st (20 sec) vs. 1st Only (20 sec).] More workers, faster. More workers, better quality. Faster, worse quality.

- 71. Aggregate Method: ESP Only. Two cases: ESP answer matches; ESP answers do NOT match (empty label)

- 72. Evaluation [Figure: bar chart of MAP and MRR (0 to 1) for Question Matching, Not Unnatural, Ask Siri, and Ask a Friend.] Question Matching outperforms all baselines.

Editor's Notes

- Good morning everyone, I am Kenneth Huang. Thanks for joining my thesis proposal. For the past few years, I have been working on a crowd-powered conversational assistant called Chorus. Because Chorus is powered by humans, it is robust in various ways that current automated dialog systems still cannot match. Over that time, I have (1) deployed Chorus to the public, where it has had more than a thousand conversations with users, (2) connected Chorus to applications on your phone so that it can interact with the real world, and (3) started creating a framework that enables Chorus to automate itself over time. This will allow us to reduce cost, decrease latency, and potentially give us some insight into how to create a fully automated dialog system.

- Conversation is powerful. It enables people to interact efficiently and come to a shared context quickly. A long-standing dream in the AI community is to build a machine that can converse fluidly with a human partner. Today, many conversational assistant products are available, like Amazon Echo, Apple Siri, or Google Now, but none of them allow for fluid conversation. In fact, most of them do not allow for real conversation at all: they support one or two turns at most, and build limited context as they converse. They take speech queries or voice commands to perform pre-defined tasks. Unless one of these assistants has been explicitly trained for a task, its ability to handle it is incredibly limited. In effect, we are training users in what commands they can say rather than supporting robust dialog.

- In the dialog systems research community, many researchers and projects aim to build a system that can hold open conversations with users. However, building such a system is still a very hard problem.

- Chorus allows for much more robust conversation by employing the crowd, people on the web who are paid to operate the system. Chorus was introduced in 2013. In Chorus, workers collectively hold a conversation by performing 3 main actions: First, each worker can propose responses. Second, each worker can vote to choose the best responses submitted by other workers. And finally, they list important facts that should be remembered so that workers can later easily catch up with the conversation and respond appropriately (that is, they build context explicitly). An incentive mechanism helps to ensure that the best action for workers is to propose and vote on high-quality responses. (ADD NUMBERS MAYBE)
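The propose-and-vote step described above can be sketched roughly as follows. This is only an illustration, not the deployed Chorus code: the `Candidate` class, the rule that workers cannot vote for their own proposal, and the threshold of 2 votes are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Candidate:
    """A response proposed by one worker (field names are illustrative)."""
    text: str
    proposer: str
    voters: set = field(default_factory=set)


def vote(candidate, worker):
    """Record an upvote; in this sketch a worker cannot upvote their own proposal."""
    if worker != candidate.proposer:
        candidate.voters.add(worker)


def accepted(candidates, threshold=2):
    """Return the texts of candidates whose vote count reaches the (assumed) threshold."""
    return [c.text for c in candidates if len(c.voters) >= threshold]


a = Candidate("Robert Menzies", proposer="w1")
b = Candidate("I am not sure", proposer="w2")
vote(a, "w2")
vote(a, "w3")
vote(a, "w1")  # ignored: w1 proposed this response
vote(b, "w3")
print(accepted([a, b]))
```

Only the first candidate collects enough votes from other workers to be sent to the user.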

- What kind of conversations can Chorus have? Let us take a look at three examples.

- Gift suggestion.

- So what kind of conversation can Chorus have? Here is an actual example. The user wants some suggestions for a friend's birthday gift. Chorus started the discussion by asking for more details about this friend, such as “What type of things does your friend like?”

- https://en.wikipedia.org/wiki/Robert_Menzies Not the end. That is the beginning.

- In this dissertation, we take a top-down approach. We start with the working system Chorus, which can hold long and sophisticated conversations with humans, and then learn piece by piece how to improve it, automate it, and gradually reduce the cost and latency of the system.

- So, again, today’s talk will be organized under these 3 main parts: 1. Expanding the Capabilities of Chorus 2. Deploying Chorus to Gather Data 3. Automating Chorus. We will walk through these three parts, propose a framework that enables Chorus to automate itself over time, and finally conclude the talk with the contributions we want to make.

- One limitation of Chorus is that it can only “say” something, but not “do” something. One important function of conversational assistants today is to help the users control their devices, such as setting an alarm, or adding an event to their calendars. So in part 1, we develop a system that allows users to create IF-THEN rules via conversation with the crowd. (((( add examples

- What is an IF-THEN rule? The concept is inspired by the well-known IFTTT service, which enables users to combine two apps. If app A does something, it automatically triggers app B to do something. For instance, if the weather app tells me it will rain tomorrow, then send me a notification today. Such rules are also widely used to control smart homes today. For instance, in a rule called “purple rain”, if it will rain tomorrow, the app automatically turns the light in your room purple.

- To create this type of rule, we created InstructableCrowd.
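A minimal sketch of how such a rule could be represented, assuming a rule is simply a list of IF conditions plus a list of THEN actions. The class names, field names, and the `(app, attribute)` state dictionary are hypothetical and are not taken from InstructableCrowd itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Condition:
    app: str          # app that triggers the rule, e.g. "weather"
    attribute: str    # attribute to check, e.g. "tomorrow"
    value: object     # value that must be observed


@dataclass(frozen=True)
class Action:
    app: str          # app that acts, e.g. "light"
    command: str
    argument: object


@dataclass
class Rule:
    ifs: list
    thens: list

    def fire(self, state):
        """Return the THEN actions if every IF condition holds in `state`, else []."""
        ok = all(state.get((c.app, c.attribute)) == c.value for c in self.ifs)
        return list(self.thens) if ok else []


# The "purple rain" example from the talk, encoded with the hypothetical types above.
purple_rain = Rule(
    ifs=[Condition("weather", "tomorrow", "rain")],
    thens=[Action("light", "set_color", "purple")],
)
state = {("weather", "tomorrow"): "rain"}
print(purple_rain.fire(state))
```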

- This is the app we created (explain the user workflow)

- Explain (1) application selection + (2) attribute filling (((((( not important I think…

- Explain app selection + att filling (again)

- We compare our system with the “User Only” setting, in which the user manually creates the rule with the rule editor we made. App selection is good. Attribute filling is slightly worse but still good (>0.9).

- This tells us it is feasible. We enable an alternative way to create a rule, and the quality is as good as that of rules created by users. Now, Chorus can hold sophisticated conversations, and it has the capability to set up applications and smart homes for you. (((((((( maybe not assume this
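For reference, the two metrics named on the evaluation slides can be computed as in the sketch below. It assumes app selection is scored as set overlap between the selected apps and a gold set, and attribute filling as exact-match accuracy; the example apps and attribute values are made up.

```python
def precision_recall_f1(selected, gold):
    """Precision, recall, and F1 of a selected set of apps against a gold set."""
    selected, gold = set(selected), set(gold)
    tp = len(selected & gold)
    p = tp / len(selected) if selected else 0.0
    r = tp / len(gold) if gold else 0.0
    f1 = 2 * p * r / (p + r) if p + r else 0.0
    return p, r, f1


def attribute_accuracy(filled, gold):
    """Fraction of gold attributes whose filled value matches exactly."""
    if not gold:
        return 0.0
    correct = sum(1 for key, value in gold.items() if filled.get(key) == value)
    return correct / len(gold)


# Hypothetical trial: one extra app was selected, one attribute was filled wrongly.
p, r, f1 = precision_recall_f1({"weather", "email", "light"}, {"weather", "light"})
acc = attribute_accuracy({"color": "purple", "time": "8am"},
                         {"color": "purple", "time": "7am"})
print(round(p, 3), round(r, 3), round(f1, 3), acc)
```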

- And next, we are going to talk about the deployment. The goal of the deployment is to collect data to train machine-learning algorithms for the future automation. ((((( transition?...

- As many of you might know, we deployed Chorus as a Google Hangouts chatbot. We deployed the system on May 20th last year. It has had 1000+ conversations with 100+ users to date. It is a public deployment; you can go to TalkingToTheCrowd.org (sign consent form-ish). By taking advantage of the Google Hangouts client, you can use it everywhere: on phones, desktops, and even wearables.

- Interface – (((((( why re-implement it??????

- ((((((((((( transition…?

- If you build a system that can talk about anything, people will say anything to it. You need to take care of this problem when developing this type of system.

- This information is very important for us to understand the influence of automated components on the system.
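The “word detection” solution listed on the Malicious Users slide could look something like the sketch below. The blocklist entries are placeholders and the tokenization is an assumption; the slides do not describe the actual word list or matching rules.

```python
import re

# Placeholder entries; a real deployment would use a curated list.
BLOCKLIST = {"badword", "slur"}


def flag_message(text, blocklist=BLOCKLIST):
    """Return the sorted blocklisted words found in a message (case-insensitive)."""
    tokens = re.findall(r"[a-z']+", text.lower())
    return sorted(set(tokens) & set(blocklist))


print(flag_message("You BADWORD!"))
print(flag_message("How many suitcases can I take?"))
```

Whole-token matching avoids flagging harmless words that merely contain a blocklisted string, at the cost of missing deliberate misspellings.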

- Furthermore, we will release the data we collected during the deployment as the Chorus Dataset. This is the first proposed work in this dissertation.

- The goal of the data release is to help the AI community develop automated dialog systems, for tasks such as response generation, response selection, or more complex dialog learning such as state tracking. This will be a valuable dataset that records conversations between real users and a conversational assistant that has human-level capability. (details)

- Let’s move to Part 3: the automation part.

- Let’s first take a look at the overview of the automation. The way we are going to automate Chorus is to have Chorus incorporate a big set of external dialog systems and gradually learn when to call them to obtain responses. For instance, (Yelp example)

- So the first question is: How to build a big set of external dialog systems quickly?

- We think of Web APIs. This page shows ProgrammableWeb, a website that collects Web APIs. Nowadays, it contains more than 16 thousand Web APIs. There are a lot of them, they are well-defined, and a lot of them are even free.

- Guardian’s framework contains three main steps: First, the workers have a conversation with the user and extract the parameter values with a dialog ESP Game. Second, behind the scenes, the system uses these values to call the Yelp API and run the query. Finally, the Yelp API returns the result as JSON, and we also use the crowd to interpret the response. We visualize the JSON in a user-friendly interface, where the workers can click through the data and explore the information inside the JSON. By using Guardian, we can have a running dialog system without using any training data or even prior knowledge of the task.

- In any dialog system, if you want to add a new service to your system, you need to solve 2 main problems: define the slots, and fill the slots. In other words, in the context of APIs, you need to define the parameters and fill the parameters. For example, say you want to add the Yelp Search API to your system. First, you need to know what information is required by this API: the location and the query term. Then, in your system, you need something that extracts the location and query term for you, so that you can call and use the API. How do modern dialog systems usually do it?
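The three steps can be sketched as a small pipeline. In Guardian, steps 1 and 3 are performed by crowd workers; here they are replaced by plain Python stand-ins so the flow is runnable. `fake_search_api`, `interpret`, and `run_turn` are hypothetical names, and the stubbed JSON only mimics the fields shown on the slide rather than the real Yelp response format.

```python
import json


def fake_search_api(term, location):
    """Stand-in for a real Web API call (step 2); returns a JSON string."""
    return json.dumps({
        "businesses": [
            {"name": "Mandarin Wok Restaurant", "address": ["4227 Balboa Ave"]}
        ]
    })


def interpret(result):
    """Stand-in for the crowd's interpretation of the JSON result (step 3)."""
    top = result["businesses"][0]
    return f'{top["name"]} is at {top["address"][0]}.'


def run_turn(extracted, call_api, interpret_result):
    """Call the API with extracted parameters, then turn the JSON into a reply."""
    raw = call_api(**extracted)
    return interpret_result(json.loads(raw))


# Step 1 (parameter extraction) is assumed to have produced these values.
extracted = {"term": "Chinese", "location": "San Diego"}
reply = run_turn(extracted, fake_search_api, interpret)
print(reply)
```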

- Like this! The idea we propose here is to collect questions related to the task and then ask the workers to use questions to vote for parameters. Take the Yelp API for example. We first collect all possible questions from the crowd, like “What do you want to eat?”, “Where are you?”, “What’s your budget?” and so on. Then we ask workers to associate questions with parameters. So essentially, the workers are using questions to vote for parameters. We assume that the parameters associated with more questions are better for dialog systems. How does this work? (((((((((( parameter names updated
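The voting idea can be sketched as a simple count: each distinct question a worker associates with a parameter counts as one vote for it. Counting distinct questions and breaking ties alphabetically are assumptions of this sketch, and the example matches are made up.

```python
from collections import Counter


def rank_parameters(matches, top_k=None):
    """Rank parameters by the number of distinct questions matched to them.

    `matches` is an iterable of (question, parameter) pairs collected from workers.
    """
    counts = Counter()
    seen = set()
    for question, parameter in matches:
        if (question, parameter) not in seen:  # count each question once per parameter
            seen.add((question, parameter))
            counts[parameter] += 1
    ranked = sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))
    return ranked[:top_k]


matches = [
    ("What do you want to eat?", "term"),
    ("What kind of food?", "term"),
    ("Which city are you in?", "location"),
    ("What do you want to eat?", "category_filter"),
    ("What do you want to eat?", "term"),  # repeated match, counted once
]
print(rank_parameters(matches))
```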

- We propose a multi-player Dialog ESP Game to extract parameter values from a running conversation. The ESP Game was originally proposed for image labeling; here we adapt the idea to dialog. In the interface, we show the dialog and the description of the parameter, and ask each worker to type what the other workers might type. If two answers match each other, we take the matched answer as the extracted parameter value. This method works well. Now we can extract parameters without having any training data. Therefore, based on all the work we’ve done, we propose a system called “Guardian”. There are 2 ways to aggregate the answers.

- Class A: 0.9. Class D: 0.8. Less than 9 sec. Even faster if you take only the first answer.
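The two aggregation methods can be sketched as below. The reading of “ESP + 1st” as “use the first matched answer, and fall back to the earliest answer when nothing matches” is inferred from the slide titles, so treat it as an assumption. The answer format `(seconds, worker, text)` and the normalization are also illustrative; the 20-second limit comes from the experiment settings.

```python
def normalize(answer):
    """Lowercase and collapse whitespace so trivially different answers can match."""
    return " ".join(answer.lower().split())


def esp_match(answers, time_limit=20.0):
    """Return the first answer typed by two different workers within the limit, else None."""
    first_by = {}
    for seconds, worker, text in sorted(answers):
        if seconds > time_limit:
            break
        key = normalize(text)
        if not key:
            continue
        if key in first_by and first_by[key] != worker:
            return key
        first_by.setdefault(key, worker)
    return None


def first_answer(answers, time_limit=20.0):
    """'1st only': return the earliest non-empty answer within the limit, else None."""
    for seconds, worker, text in sorted(answers):
        if seconds <= time_limit and normalize(text):
            return normalize(text)
    return None


def esp_plus_first(answers, time_limit=20.0):
    """'ESP + 1st': prefer a matched answer, otherwise fall back to the first answer."""
    matched = esp_match(answers, time_limit)
    return matched if matched is not None else first_answer(answers, time_limit)


answers = [(3.1, "w1", "San Diego, CA"), (4.0, "w2", "san diego"), (6.5, "w3", "San  Diego")]
no_match = [(2.0, "w1", "Boston"), (5.0, "w2", "Pittsburgh")]
print(esp_plus_first(answers), first_answer(answers), esp_plus_first(no_match))
```

Taking only the first answer is faster because it never waits for a second worker, which is consistent with the speed and quality trade-off reported in the talk.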

- We implemented the system on 3 different Web APIs: the Yelp API for restaurant search, the Weather Underground API for weather queries, and the RottenTomatoes API for movie queries. We designed a small task for each API and ran 10 trials on each system. Here we only talk about the task completion rate. By task completion we mean that the system provides a valid response that contains the information the user requires. You can see the task completion rate is almost perfect. This is because, first, the tasks here are relatively simple, and second, even when the results returned from the API are incorrect, most of the time crowd workers are able to figure it out and recover the correct answers. We also compare our results with the task completion rates reported in the literature. The numbers are not directly comparable, but you can still see that our system reaches the same level of task completion rate as automated systems.

- Now we have a big set of dialog systems

- (((((((( 16k bots to select!

- Chorus is a deployed system. It collects data every day. Therefore, the automated components will get more and more reliable over time. We will update our automated models periodically, for example every day or every week, and they can take over more responsibility from the crowd. Race condition: there is a constraint on how many responses can be sent within a single turn, and there is also a threshold on the number of votes needed to accept a response. These constraints naturally put human workers and machines in a race condition, and the workload of humans will likely be reduced. Also, note that in Chorus all the actions (propose/note/vote) have corresponding reward points. How much work is taken by the machine?

- The other 2 are based on information retrieval techniques. Each takes the user’s input message, searches for the most similar question in a question-answer dataset, and returns the answer to that question. The Q&A bot uses QA pairs extracted from CNN interviews; the Memory bot uses the old conversations that Chorus had with users.
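A retrieval-based bot of this kind can be sketched in a few lines. The notes do not say which similarity measure the bots use, so Jaccard overlap on word sets is swapped in here purely for illustration, and the question-answer pairs are invented.

```python
import re


def tokens(text):
    """Lowercased word set of a message."""
    return set(re.findall(r"[a-z0-9']+", text.lower()))


def jaccard(a, b):
    """Jaccard similarity between two token sets."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def retrieve_answer(message, qa_pairs):
    """Return the answer paired with the stored question most similar to `message`.

    `qa_pairs` must be a non-empty list of (question, answer) tuples.
    """
    msg = tokens(message)
    best = max(qa_pairs, key=lambda qa: jaccard(msg, tokens(qa[0])))
    return best[1]


qa_pairs = [
    ("How many bags can I take on a flight?", "It depends on the airline."),
    ("What is a good birthday present?", "Ask what your friend likes."),
]
print(retrieve_answer("Can you suggest a birthday present for my friend?", qa_pairs))
```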

- ((((((( explain a bit !

- It turned out our workflow outperforms all three baselines. When you take a look at the result, you will know the quality is much better and close to practical use.