Mixed Effects Models - Fixed Effects

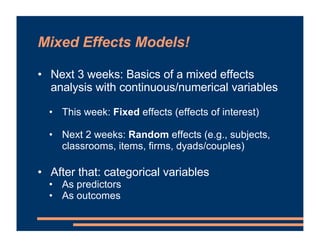

- 1. Mixed Effects Models! • Next 3 weeks: Basics of a mixed effects analysis with continuous/numerical variables • This week: Fixed effects (effects of interest) • Next 2 weeks: Random effects (e.g., subjects, classrooms, items, firms, dyads/couples) • After that: Categorical variables, as predictors and as outcomes

- 2. Week 3.1: Fixed Effects • Sample Dataset & Research Questions • Theoretical Model • lme4 in R • Parameter Interpretation (Slopes, Intercept) • Hypothesis Testing (t-test, Confidence Interval) • More About Model Fitting

- 3. Introduction to Fixed Effects • Canvas: Modules → Week 3.1 • Team problem-solving task in organizations • Assemble a landline phone • 10 teams at each of 11 companies (total 110) • Dependent measure: Minutes taken to complete the task • Predictor variables: Number of newcomers on the team; Average years of experience (Lewis, Belliveau, Herndon, & Keller, 2007)

- 4. Introduction to Fixed Effects • Canvas: Modules → Week 3.1 • Team problem-solving task in organizations • Assemble a landline phone • 10 teams at each of 11 companies (total 110)

- 6. Introduction to Fixed Effects • Minutes taken to assemble phone = Baseline + Newcomers + Years of Experience + Company • Predicting one variable as a function of others

- 7. Introduction to Fixed Effects • Minutes taken to assemble phone = Baseline + Newcomers + Years of Experience + Company (Company: NEXT WEEK!) • Predicting one variable as a function of others

- 8. Introduction to Fixed Effects • Minutes taken to assemble phone = Baseline + Newcomers + Years of Experience • Predicting one variable as a function of others • Fixed effects that we’re trying to model

- 9. Introduction to Fixed Effects • THEORY AND DEFINITION: Minutes taken to assemble phone = Baseline ($\gamma_{00}$) + Newcomers + Years of Experience • Predicting one variable as a function of others (Bryk & Raudenbush, 1992; Quené & van den Bergh, 2004, 2008)

- 10. Introduction to Fixed Effects • THEORY AND DEFINITION: Minutes taken to assemble phone = Baseline ($\gamma_{00}$) + Newcomers ($x_{1i(j)}$) + Years of Experience ($x_{2i(j)}$) • Predicting one variable as a function of others (Bryk & Raudenbush, 1992; Quené & van den Bergh, 2004, 2008)

- 11. Introduction to Fixed Effects • Predicting one variable as a function of others • Relationship of number of newcomers to completion time in minutes is probably not 1:1 (Bryk & Raudenbush, 1992; Quené & van den Bergh, 2004, 2008) • [Figure: Regression line relating number of newcomers to completion time]

- 12. Introduction to Fixed Effects • Minutes taken to assemble phone = $\gamma_{00}$ (Baseline) + $\gamma_{10}x_{1i(j)}$ (Newcomers) + $\gamma_{20}x_{2i(j)}$ (Years of Experience) • Predicting one variable as a function of others • $\gamma_{10}$ = slope of the line relating newcomers to time taken • One of the fixed effects we want to find out • How do newcomers affect completion time in this task? (Bryk & Raudenbush, 1992; Quené & van den Bergh, 2004, 2008)

- 13. Introduction to Fixed Effects • Minutes taken to assemble phone = $\gamma_{00}$ (Baseline) + $\gamma_{10}x_{1i(j)}$ (Newcomers) + $\gamma_{20}x_{2i(j)}$ (Years of Experience) • Can we determine the exact completion time based on team composition? (Bryk & Raudenbush, 1992; Quené & van den Bergh, 2004, 2008)

- 14. Introduction to Fixed Effects • Can we determine the exact completion time based on team composition? • Probably not. (Bryk & Raudenbush, 1992; Quené & van den Bergh, 2004, 2008)

- 15. Introduction to Fixed Effects • $E(Y_{i(j)}) = \gamma_{00} + \gamma_{10}x_{1i(j)} + \gamma_{20}x_{2i(j)}$ (Minutes taken to assemble phone = Baseline + Newcomers + Years of Experience) • Can we determine the exact completion time based on team composition? • Probably not. • These variables just provide our best guess • The expected value, $E(Y_{i(j)})$ (Bryk & Raudenbush, 1992; Quené & van den Bergh, 2004, 2008)

- 16. Introduction to Fixed Effects • $y_{i(j)} = \gamma_{00} + \gamma_{10}x_{1i(j)} + \gamma_{20}x_{2i(j)} + \text{Error}$ (Minutes taken to assemble phone = Baseline + Newcomers + Years of Experience + Error) • To represent the actual observation, we need to add an error term • Discrepancy between expected & actual value

- 17. Introduction to Fixed Effects • $y_{i(j)} = \gamma_{00} + \gamma_{10}x_{1i(j)} + \gamma_{20}x_{2i(j)} + e_{i(j)}$ (Minutes taken to assemble phone = Baseline + Newcomers + Years of Experience + Error) • To represent the actual observation, we need to add an error term, $e_{i(j)}$ • Discrepancy between expected & actual value
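The decomposition on this slide (observed value = expected value + error) can be sketched in a few lines of R. This is a minimal illustration that assumes the estimates reported later in this deck (intercept 38.25, newcomer slope 2.16, experience slope -0.09); the team and its observed time of 45 minutes are hypothetical.

```r
# Fixed-effect estimates (as reported later in the deck's model output)
gamma00 <- 38.25   # intercept (baseline)
gamma10 <- 2.16    # slope for number of newcomers, x1
gamma20 <- -0.09   # slope for years of experience, x2

# Expected value: E(Y) = gamma00 + gamma10 * x1 + gamma20 * x2
expected_minutes <- function(newcomers, years_experience) {
  gamma00 + gamma10 * newcomers + gamma20 * years_experience
}

# Hypothetical team: 3 newcomers, 10 years of average experience, took 45 minutes
expected <- expected_minutes(newcomers = 3, years_experience = 10)  # 38.25 + 6.48 - 0.90
observed <- 45
error <- observed - expected   # e_i(j): discrepancy between actual and expected value

cat("Expected:", expected, " Error:", error, "\n")
```

The error term is whatever the fixed effects leave unexplained; with real data every team gets its own $e_{i(j)}$.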

- 18. Introduction to Fixed Effects • What if we aren’t interested in predicting specific values? • e.g., We want to know whether a variable matters or the size of its effect • But: We learn this by asking whether and how an independent variable predicts the dependent variable • If number of newcomers significantly predicts what the completion time will be, there’s a relation

- 20. Running the Model in R • LMER: Linear Mixed Effects Regression

- 21. Running the Model in R • Time to fit our first model! • `model1 <- lmer(MinutesTaken ~ 1 + Newcomers + YearsExperience + (1|CompanyID), data=problemsolving)` • Parts of the call: `model1`: name of our model, like naming a dataframe; `lmer`: linear mixed effects regression (function name); `MinutesTaken`: dependent measure, comes before the `~`; `1`: intercept (we’ll discuss this more very soon); `Newcomers + YearsExperience`: variables of interest (fixed effects); `(1|CompanyID)`: random effect variable; `data=problemsolving`: name of the dataframe where your data is

- 22. Running the Model in R • Time to fit our first model! • `model1 <- lmer(MinutesTaken ~ 1 + Newcomers + YearsExperience + (1|CompanyID), data=problemsolving)` • Quick note: This version of the model makes assumptions about the random effects that might or might not be true. We’ll deal with this in the next two weeks when we discuss random effects. • Parts of the call: `model1`: name of our model, like naming a dataframe; `lmer`: linear mixed effects regression (function name); `MinutesTaken`: dependent measure, comes before the `~`; `1`: intercept (we’ll discuss this more very soon); `Newcomers + YearsExperience`: variables of interest (fixed effects); `(1|CompanyID)`: random effect variable; `data=problemsolving`: name of the dataframe where your data is
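A self-contained way to try this call is sketched below. Because the course's `problemsolving` dataframe isn't reproduced here, the sketch simulates a hypothetical stand-in with the same column names and the same design (10 teams at each of 11 companies); the generating values (38, 2, -0.1, and the noise SDs) are made up for illustration. It assumes the lmerTest package is installed, which also loads lme4.

```r
set.seed(1)
n_companies <- 11
teams_per_company <- 10
n_teams <- n_companies * teams_per_company

# Hypothetical stand-in for the course's problemsolving dataframe
problemsolving <- data.frame(
  CompanyID       = factor(rep(seq_len(n_companies), each = teams_per_company)),
  Newcomers       = sample(0:4, n_teams, replace = TRUE),
  YearsExperience = round(runif(n_teams, min = 0, max = 20), 1)
)

# Each company gets its own baseline shift (what the random intercept captures)
company_shift <- rnorm(n_companies, mean = 0, sd = 3)
problemsolving$MinutesTaken <- 38 +
  2 * problemsolving$Newcomers -
  0.1 * problemsolving$YearsExperience +
  company_shift[as.integer(problemsolving$CompanyID)] +
  rnorm(n_teams, mean = 0, sd = 2)

if (requireNamespace("lmerTest", quietly = TRUE)) {
  library(lmerTest)  # attaches lme4 too; summary() then reports df and p-values
  model1 <- lmer(MinutesTaken ~ 1 + Newcomers + YearsExperience + (1 | CompanyID),
                 data = problemsolving)
  print(summary(model1))
}
```

With real data you would skip the simulation and call `lmer()` on your own dataframe; the formula stays the same.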

- 23. Running the Model in R • Where are my results? • Just like with a dataframe, we’ve saved them in something we can view later • To view the model results: • `model1 %>% summary()` (the `%>%` pipe comes from magrittr/dplyr; `summary(model1)` does the same thing) • Or whatever your model name is • Saving the model makes it easy to compare models later or to view your results again

- 24. Sample Model Results • Annotations on the `summary()` output: • Formula: Variables you included • Data: Dataframe you ran this model on • Check that these two match what you wanted! • Relevant to model fitting; will discuss soon • Random effects: next week! • Number of observations, # of clustering groups • Fixed effects: Results for the fixed effects of interest • Correlation of fixed effects: Correlations between effects • Probably don’t need to worry about this unless correlations are very high (Friedman & Wall, 2005; Wurm & Fisicaro, 2014)

- 26. Parameter Estimates • Estimates are the γ values from the model notation • In our sample: • Each additional newcomer ≈ another 2.2 minutes of solving time • Each additional year of experience ≈ 0.10-minute decrease in solving time • Each of these effects is while holding the others constant • Core feature of multiple regression! • Don’t need to do residualization for this (Wurm & Fisicaro, 2014)
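The "holding the others constant" point can be checked numerically. The sketch below uses base R's `lm()` on simulated, hypothetical data (no random effects) as a stand-in for the fixed-effects part of the model: the slope for `x1` from the multiple regression matches the slope you get by manually residualizing `x1` on `x2` first (the Frisch-Waugh-Lovell result), so the multiple regression already does that residualizing for you.

```r
set.seed(42)
n  <- 200
x1 <- rpois(n, lambda = 2)                     # hypothetical: number of newcomers
x2 <- 0.5 * x1 + rnorm(n, mean = 10, sd = 3)   # hypothetical: experience, correlated with x1
y  <- 38 + 2 * x1 - 0.1 * x2 + rnorm(n, sd = 2)

# Multiple regression: slope for x1 while holding x2 constant
full_fit <- lm(y ~ x1 + x2)

# Manual route: keep only the part of x1 that x2 does not predict, then regress y on it
x1_resid  <- resid(lm(x1 ~ x2))
resid_fit <- lm(y ~ x1_resid)

coef(full_fit)[["x1"]]
coef(resid_fit)[["x1_resid"]]   # same value as the line above
```

Only the point estimates coincide; the standard errors from the two routes differ, which is another reason to just fit the multiple regression.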

- 28. Parameter Estimates • What about the intercept? • Let’s go back to our theoretical model: $E(Y_{i(j)}) = \gamma_{00} + \gamma_{10}x_{1i(j)} + \gamma_{20}x_{2i(j)}$ (Minutes = Baseline + Newcomers + Years of Experience)

- 29. Parameter Estimates • What about the intercept? • Let’s go back to our theoretical model: $E(Y_{i(j)}) = \gamma_{00} + 2.16x_{1i(j)} - 0.09x_{2i(j)}$ (Minutes = Baseline + Newcomers + Years of Experience)

- 30. Parameter Estimates • What about the intercept? • Let’s go back to our theoretical model • What if a team had 0 newcomers ($x_{1i(j)} = 0$) and 0 years of prior experience ($x_{2i(j)} = 0$)? • $E(Y_{i(j)}) = \gamma_{00} + 2.16 \times 0 - 0.09 \times 0$ (Minutes = Baseline + Newcomers + Years of Experience)

- 31. Parameter Estimates • What about the intercept? • Let’s go back to our theoretical model • What if a team had 0 newcomers ($x_{1i(j)} = 0$) and 0 years of prior experience ($x_{2i(j)} = 0$)? • Even in this case, we wouldn’t expect the team to solve the problem in 0 minutes (that’s ridiculous!) • $E(Y_{i(j)}) = \gamma_{00} + 0 + 0$ (Minutes = Baseline + Newcomers + Years of Experience)

- 32. Parameter Estimates • What about the intercept? • Intercept is what we expect when all other predictor variables are equal to 0 • Here, a team with 0 newcomers and 0 years of prior experience would be expected to solve the problem in 38.25 minutes • $E(Y_{i(j)}) = 38.25 + 0 + 0$ (Minutes = Intercept + Newcomers + Years of Experience)

- 33. Parameter Estimates • Equation for a line (courtesy of middle school) • y = mx + b • m: Slope. How steep is the line? • How strongly does the IV affect the DV? • Here, slope = 2.16: for every additional newcomer, average solving time increases by 2.16 min.

- 34. Parameter Estimates • Equation for a line (courtesy of middle school) • y = mx + b • m: Slope. How steep is the line? • b: Intercept. Where does the line cross the y-axis? • What DV is expected when the IV is 0? Here, 38.25

- 35. Parameter Estimates • Default is to include the intercept in the model, and that’s appropriate (R includes it even if `1 +` is left out of the formula) • `model1 <- lmer(MinutesTaken ~ 1 + Newcomers + YearsExperience + (1|CompanyID), data=problemsolving)` • If we eliminated the intercept, we would be assuming that the DV is exactly 0 when all predictors are 0 • That would be a more constrained model, one that makes more assumptions about the data • We allowed our model to freely estimate the intercept, and we came up with 38.25
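To see what the `1 +` does, you can inspect the design matrix R builds from a formula. A minimal sketch using base R's `model.matrix()` on a hypothetical three-row dataframe (the fixed-effects part of an lmer formula follows the same intercept conventions): the intercept is a column of 1s that R adds by default, and `0 +` removes it.

```r
# Hypothetical predictor values for three teams
d <- data.frame(Newcomers = c(0, 1, 3), YearsExperience = c(0, 5, 10))

X_default  <- model.matrix(~ Newcomers + YearsExperience, data = d)      # intercept added automatically
X_explicit <- model.matrix(~ 1 + Newcomers + YearsExperience, data = d)  # same matrix, intercept written out
X_no_int   <- model.matrix(~ 0 + Newcomers + YearsExperience, data = d)  # intercept suppressed

print(X_default)        # first column, "(Intercept)", is all 1s
print(colnames(X_no_int))
```

Without the column of 1s there is nothing to absorb the baseline, which is exactly the "DV is 0 when all predictors are 0" constraint.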

- 37. Parameter Estimates WHERE THE @#$^@$ ARE MY P-VALUES!?

- 38. Hypothesis Testing—t test • Reminder of why we do inferential statistics • We know there’s some relationship between newcomers & problem-solving time in our sample

- 39. Hypothesis Testing—t test • Reminder of why we do inferential statistics • We know there’s some relationship between newcomers & problem-solving time in our sample • But: • Would this hold true for all people (the population) doing group problem-solving? • Or is this sampling error? (i.e., random chance)

- 40. Hypothesis Testing—t test • Newcomer effect in our sample estimated to be 2.16 min. … is this good evidence of an effect in the population? • Would want to compare it to a measure of sampling error • $t = \frac{\text{Estimate}}{\text{Std. error}} = \frac{2.1642}{0.2690} \approx 8.05$
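The same arithmetic in base R, using the newcomer estimate and standard error from the model output:

```r
estimate  <- 2.1642   # newcomer slope (gamma10)
std_error <- 0.2690   # standard error of that slope

# t = estimate / SE: how many standard errors the estimate lies from 0
t_value <- estimate / std_error
round(t_value, 2)   # 8.05
```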

- 41. Hypothesis Testing—t test • We don’t have p-values (yet), but we do have a t statistic • Effect divided by its standard error (as with any t statistic) • A t test comparing this γ estimate to 0 • 0 is the γ expected under the null hypothesis that this variable has no effect

- 42. Point—Counterpoint Great! A t value. This will be really helpful for my inferential statistics. But you also need the degrees of freedom! And degrees of freedom are not exactly defined for mixed effects models. GOT YOU! But, we can estimate the degrees of freedom. Curses! Foiled again!

- 43. Hypothesis Testing—lmerTest • Another add-on package, lmerTest, estimates the d.f. for the t-test (Kuznetsova, Brockhoff, & Christensen, 2017) • Similar to the correction for unequal variances in Welch’s t-test (lmerTest’s default is the Satterthwaite approximation)
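Once a degrees-of-freedom value is available, the p-value is the usual two-sided t-test calculation. A minimal base R sketch; the df values of 100 and 97.4 below are hypothetical placeholders, not the values lmerTest reports for this model. R's `pt()` accepts non-integer df, which matters because estimated df often aren't whole numbers.

```r
# Two-sided p-value for a t statistic with (possibly non-integer) degrees of freedom
p_from_t <- function(t_value, df) {
  2 * pt(-abs(t_value), df = df)
}

t_value <- 2.1642 / 0.2690     # newcomer estimate / standard error
p_from_t(t_value, df = 100)    # hypothetical placeholder df
p_from_t(t_value, df = 97.4)   # hypothetical non-integer df works the same way
```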

- 44. Hypothesis Testing—lmerTest • Once we have lmerTest installed, need to load it … remember how? • library(lmerTest) • With lmerTest loaded, re-run the lmer() model, then get its summary • Will have p-values • In the future, no need to run model twice. Can load lmerTest from the beginning • This was just for demonstration purposes

- 45. Hypothesis Testing—lmerTest • Output annotations: the `df` column shows ESTIMATED degrees of freedom (non-integer values are possible because it’s an estimate); the `Pr(>|t|)` column is the p-value • What does 1.84e-12 mean? • Scientific notation shows up if a number is very large or very small • 1.84e-12 = $1.84 \times 10^{-12}$ = 0.00000000000184 • This p-value is very small! Significant effect of number of newcomers on problem-solving time

- 46. Hypothesis Testing—lmerTest • Output annotations: the `df` column shows ESTIMATED degrees of freedom (non-integer values are possible because it’s an estimate); the `Pr(>|t|)` column is the p-value • What does 1.84e-12 mean? • Scientific notation shows up if a number is very large or very small • 1.84e-12 = $1.84 \times 10^{-12}$ = 0.00000000000184 • e-12: Move the decimal 12 places to the left • To see the regular number in R, use `format(1.84e-12, scientific = FALSE)` (just pasting 1.84e-12 at the command line prints it back in scientific notation)
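A quick base R check of the conversion; `format()` with `scientific = FALSE` is one way to print the fixed-point version:

```r
p <- 1.84e-12                         # scientific notation: 1.84 x 10^-12
format(p, scientific = FALSE)         # the same number with all its leading zeros
isTRUE(all.equal(p, 1.84 * 10^-12))   # two ways of writing the same value
```

Setting `options(scipen = ...)` to a large value changes the default printing for the whole session instead of one number at a time.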

- 47. Hypothesis Testing—lmerTest • Output annotations: the `df` column shows ESTIMATED degrees of freedom (non-integer values are possible because it’s an estimate); the `Pr(>|t|)` column is the p-value • What about the other variables? • Years of experience: Not significant • A numerical relation in our sample, but no significant evidence that it generalizes to the broader population • Intercept significantly differs from 0 • Even with 0 newcomers and 0 years of experience, it still takes time to solve the problem (duh!)

- 49. Confidence Intervals • 95% confidence intervals are: • Estimate ± (1.96 × std. error) • Try calculating the confidence interval for the newcomer effect • This is slightly anticonservative • In other words, with small samples, the CI will be too narrow (elevated risk of Type I error) • But OK with even moderately large samples
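Working the suggested exercise in base R for the newcomer effect (estimate 2.1642, standard error 0.2690 from the model output):

```r
estimate  <- 2.1642   # newcomer slope
std_error <- 0.2690   # its standard error

# Normal-approximation 95% CI: estimate +/- 1.96 * SE
ci <- estimate + c(-1, 1) * 1.96 * std_error
round(ci, 2)   # 1.64 2.69
```

For a fitted lmer model, lme4's `confint(model1, method = "Wald")` computes this same normal-approximation interval for the fixed effects; its default method is profile-based, which is slower but does not rely on the normal approximation.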

- 50. Confidence Intervals • http://www.scottfraundorf.com/statistics.html • Another add-on package: psycholing • Includes summaryCI() function that does this for all fixed effects

- 52. More on Model Fitting • We specified the formula • How does R know what the right γ values are for this model?

- 53. More on Model Fitting • Solve for x: • 2(x + 7) = 18

- 54. 2(x + 7) = 18 • Two ways you might solve this: • Use algebra (ANALYTIC SOLUTION) • 2(x + 7) = 18 • x + 7 = 9 • x = 2 • Guaranteed to give you the right answer • Guess and check (NON-ANALYTIC SOLUTION) • x = 10? -> 34 = 18. Way off! • x = 1? -> 16 = 18. Closer! • x = 2? -> 18 = 18. Got it! • Might have to check a few numbers
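The two strategies can be contrasted in a few lines of base R: the analytic route rearranges the equation once, while the guess-and-check route evaluates candidates until one works (the candidates are the same ones tried on the slide).

```r
f <- function(x) 2 * (x + 7)   # left-hand side of 2(x + 7) = 18
target <- 18

# Analytic solution: undo the operations algebraically
x_analytic <- target / 2 - 7   # 9 - 7 = 2

# Non-analytic solution: check candidate values until the equation holds
for (x in c(10, 1, 2)) {
  cat("x =", x, "->", f(x), "\n")
  if (f(x) == target) break
}
x_guess <- x   # the loop variable keeps the value that worked
```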

- 55. More on Model Fitting • Two ways you might solve this: • t-test: Simple formula you can solve with algebra (ANALYTIC SOLUTION) • Mixed effects models: Need to search for the best estimates (NON-ANALYTIC SOLUTION)

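- 56. More on Model Fitting • What, specifically, are we searching for? • Probability: Chance of an outcome given a model • Given a fair coin, probability of heads is 50% • Likelihood: How plausible a model is given the data (the probability of the observed data under that model) • Given 52 heads out of 100, is this likely to be a fair coin? • Looking for the parameter estimates that result in a model with the highest likelihood • Maximum likelihood estimation (by default, `lmer()` uses a variant called restricted maximum likelihood, REML; set `REML = FALSE` for ordinary maximum likelihood)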
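To make the probability/likelihood distinction concrete: holding the data fixed at 52 heads in 100 flips, base R's `dbinom()` gives the likelihood of several candidate values for the coin's probability of heads. The candidate values are arbitrary picks for illustration.

```r
heads <- 52
flips <- 100

# Data fixed, model (probability of heads) varies
candidate_p <- c(0.3, 0.5, 0.52, 0.7)
likelihood  <- dbinom(heads, size = flips, prob = candidate_p)
names(likelihood) <- candidate_p
print(likelihood)

# The candidate with the highest likelihood; for a binomial, the maximum
# likelihood estimate is the observed proportion, 52/100
candidate_p[which.max(likelihood)]
```

The fair coin (0.5) comes out only slightly below the maximum (a likelihood ratio of about 0.92), so 52 heads is still quite consistent with a fair coin.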

- 57. More on Model Fitting • Not guessing randomly. Looks for better & better parameters until it converges on the solution • Like playing “warmer”/“colder”
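The "warmer"/"colder" idea can be sketched as a tiny hill-climbing search over the coin's probability of heads, using the same 52-heads-in-100 data. This is only an analogy: `lmer()` relies on its own, more sophisticated optimizers, and the starting value and step sizes here are arbitrary choices.

```r
heads <- 52
flips <- 100
log_lik <- function(p) dbinom(heads, size = flips, prob = p, log = TRUE)

# Step toward whichever neighbor has a higher log-likelihood;
# when neither neighbor is better, take smaller steps
p    <- 0.1
step <- 0.1
while (step > 1e-6) {
  if (log_lik(p + step) > log_lik(p)) {
    p <- p + step        # warmer in the upward direction
  } else if (log_lik(p - step) > log_lik(p)) {
    p <- p - step        # warmer in the downward direction
  } else {
    step <- step / 2     # both neighbors colder: refine the search
  }
}
round(p, 3)
```

Base R's `optimize(log_lik, c(0.001, 0.999), maximum = TRUE)` does the same one-dimensional job more efficiently.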

- 58. Model Fitting—Implications • More complex models take more time to fit • model1 <- lmer(MinutesTaken ~ 1 + Newcomers + YearsExperience + (1|CompanyID), data=problemsolving, verbose=2) • verbose=2 shows R’s steps in the search • Probably don’t need this; just shows you how it works • Possible for model to fail to converge on a set of parameters • Issue comes up more when you have more complex models (namely, lots of random effects) • We’ll talk more in a few weeks about when this might happen & what to do about it