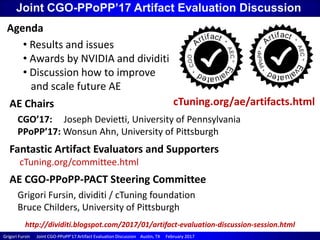

Joint CGO-PPoPP'17 Artifact Evaluation Discussion

- 1. Joint CGO-PPoPP’17 Artifact Evaluation Discussion (Grigori Fursin; Austin, TX; February 2017)
  - AE Chairs: CGO’17: Joseph Devietti, University of Pennsylvania; PPoPP’17: Wonsun Ahn, University of Pittsburgh
  - AE CGO-PPoPP-PACT Steering Committee: Grigori Fursin, dividiti / cTuning foundation; Bruce Childers, University of Pittsburgh
  - Agenda
    - Results and issues
    - Awards by NVIDIA and dividiti
    - Discussion of how to improve and scale future AE
  - Fantastic Artifact Evaluators and Supporters: cTuning.org/committee.html, cTuning.org/ae/artifacts.html
  - http://dividiti.blogspot.com/2017/01/artifact-evaluation-discussion-session.html

- 2. How CGO-PPoPP-PACT AE works
  - Timeline: paper accepted → artifacts submitted → evaluator bidding → artifacts assigned → evaluations available → evaluations finalized → artifact decision
    - 7..12 days to prepare artifacts according to guidelines: cTuning.org/submission.html
    - 2..4 days for evaluators to bid on artifacts (according to their knowledge and access to required SW/HW)
    - 2 days to assign artifacts: ensure at least 3 reviews per artifact, reduce risks, avoid mix-ups, minimize conflicts of interest
    - 2 weeks to review artifacts according to guidelines: cTuning.org/reviewing.html
    - 3..4 days for authors to respond to reviews and fix problems
    - 2..3 days to finalize reviews
    - 2..3 days to add AE stamp and AE appendix to a camera-ready paper
  - NOTE: we consider AE a cooperative process and try to help authors fix artifacts and pass evaluation (particularly if artifacts will be open-sourced)
  - Light communication between authors and reviewers is allowed via AE chairs (to preserve anonymity of the reviewers)

  | Year | PPoPP | CGO | Total | Problems | Rejected |
  |------|-------|-----|-------|----------|----------|
  | 2015 | 10    | 8   | 18    | 7        | 2        |
  | 2016 | 12    | 11  | 23    | 4        | 0        |
  | 2017 | 14    | 13  | 27    | 7        | 0        |
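The per-stage durations on this slide can be added up to see how long one AE cycle takes end to end. A minimal Python sketch, assuming the stages run strictly one after another with no overlap; the stage labels are shorthand introduced here, not names used by the AE chairs:

```python
# Durations in days as (label, shortest, longest), read off the slide's timeline.
# Assumption: stages are strictly sequential; "2 weeks" is taken as 14 days.
STAGES = [
    ("prepare artifacts", 7, 12),
    ("evaluator bidding", 2, 4),
    ("assign artifacts", 2, 2),
    ("review artifacts", 14, 14),
    ("author response", 3, 4),
    ("finalize reviews", 2, 3),
    ("add AE stamp and appendix", 2, 3),
]


def total_days(stages):
    """Return (shortest, longest) total duration in days for the given stages."""
    shortest = sum(lo for _, lo, _ in stages)
    longest = sum(hi for _, _, hi in stages)
    return shortest, longest


if __name__ == "__main__":
    lo, hi = total_days(STAGES)
    print(f"Whole AE cycle: {lo}..{hi} days")
```

Under that assumption the cycle takes 32 to 42 days from paper acceptance to the stamped camera-ready paper.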

- 3. AE: good, bad and ugly …
  - Good: many interesting and open-source artifacts; authors and evaluators take AE seriously!
  - Bad:
    - too many artifacts: need to somehow scale AE while keeping the quality (41 evaluators, ~120 reviews to handle during 2.5 weeks)
    - sometimes difficult to find evaluators with appropriate skills and access to proprietary SW and rare HW
    - very intense schedule and not enough time for rebuttals
    - communication between authors and reviewers via AE chairs is a bottleneck
  - Ugly:
    - too many ad-hoc scripts to prepare and run experiments
    - no common workflow frameworks (in contrast with some other sciences)
    - no common formats and APIs (benchmarks, data sets, tools)
    - difficult to reproduce empirical results across diverse SW/HW and inputs

- 4. Joint CGO-PPoPP’17 awards
  - a) Promote “good” (well-documented, consistent and easy-to-use) artifacts: NVIDIA donated a “Pascal Titan X GPGPU card” for the highest-ranked artifact
  - b) Promote using workflow frameworks to share artifacts and experiments as customizable and reusable components with a common meta-description and API: dividiti donated $500 for the highest-ranked artifact shared using the Collective Knowledge workflow framework (dividiti.com, cKnowledge.org)
  - Collective Knowledge is being developed by the community to simplify the AE process and improve sharing of artifacts as customizable and reusable Python components with an extensible JSON meta-description and JSON API, assemble cross-platform workflows, automate and crowdsource empirical experiments, and enable interactive reports.

- 5. The cTuning foundation and dividiti are pleased to grant the Joint CGO/PPoPP Artifact Evaluation Award for the distinguished open-source artifact shared in the Collective Knowledge format to “Software Prefetching for Indirect Memory Accesses” by Sam Ainsworth and Timothy M. Jones (University of Cambridge). February 2017

- 6. The cTuning foundation and NVIDIA are pleased to present the Joint CGO/PPoPP Distinguished Artifact Award for “Demystifying GPU Microarchitecture to Tune SGEMM Performance” to Xiuxia Zhang (1), Guangming Tan (1), Shuangbai Xue (1), Jiajia Li (2), Mingyu Chen (1); (1) Chinese Academy of Sciences, (2) Georgia Institute of Technology. February 2017

- 7. Discussion: how to improve/scale future AE
  - 1) Introduce two evaluation options: private and public
    - a) Traditional evaluation for private artifacts (for example, from industry, though less and less common)
      - Timeline: paper accepted → artifacts submitted → evaluator bidding → artifacts assigned → evaluations available → evaluations finalized → artifact decision
      - Durations, in order: 7..12 days, 2..4 days, 2 days, 2 weeks, 3..4 days, 2..3 days, 2..3 days
    - b) Open evaluation of public and open-source artifacts (if already available at GitHub, BitBucket, GitLab with “discussion mechanisms” during submission…)
      - Timeline: paper accepted → artifacts submitted → AE chairs announce public artifacts at XSEDE/GRID5000/etc → AE chairs monitor open discussions until artifacts are evaluated → artifact decision
      - Durations, in order: any time, 1..2 days, from a few days to 2 weeks, 3..4 days, 2..3 days
  - At CGO/PPoPP’17, we sent requests to validate several open-source artifacts to the public mailing lists of the conferences, networks of excellence, supercomputer centers, etc. We found evaluators who were willing to help and who had access to rare hardware or supercomputers as well as the required software and proprietary benchmarks.
  - Authors quickly fixed issues and answered research questions while AE chairs steered the discussion!
  - See public reviewing examples at cTuning.org/ae/artifacts.html and adapt-workshop.org

- 8. Discussion: how to improve/scale future AE
  - 2) Enable public or private discussion channels between authors and reviewers for each artifact (rather than communicating via AE chairs)
    - Useful technology: slack.com, reddit.com
    - Evaluators can still be anonymous if they so wish …
  - 3) Help authors prepare artifacts and workflows for unified evaluation (community service by volunteers?)
    - This year we processed more than 120 evaluation reports. Nearly all artifacts had their own ad-hoc scripts to build and run workflows, process outputs, validate results, etc.
    - Since this is a huge burden for evaluators, they ask us to gradually introduce common workflows and data formats to unify evaluation.
    - A possible solution is to introduce an optional service (based on distinguished artifacts) to help authors convert their ad-hoc scripts to some common format and thus scale AE! Furthermore, it may help researchers easily reuse and customize past artifacts, and build upon them!
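To make the idea of a "common format" concrete, here is a minimal Python sketch of a machine-readable description of an artifact workflow. The schema (`name`, `build`, `run`, `validate`), the function names and the example commands are all hypothetical; this is not the Collective Knowledge format or API, only an illustration of replacing per-artifact conventions with one small JSON description that every evaluator reads the same way.

```python
import json

# Hypothetical schema: every artifact states how to build, run and validate itself.
REQUIRED_KEYS = ("name", "build", "run", "validate")


def load_meta(text):
    """Parse a JSON meta-description and check that all required keys are present."""
    meta = json.loads(text)
    missing = [key for key in REQUIRED_KEYS if key not in meta]
    if missing:
        raise ValueError(f"meta-description lacks: {', '.join(missing)}")
    return meta


def plan(meta):
    """Return the commands an evaluator would run, in a fixed order."""
    return [meta[step] for step in ("build", "run", "validate")]


# Illustrative description; the commands stand in for an author's existing scripts.
EXAMPLE = json.dumps({
    "name": "example-artifact",
    "build": "make",
    "run": "./run_experiments.sh",
    "validate": "python check_results.py",
})

if __name__ == "__main__":
    for command in plan(load_meta(EXAMPLE)):
        print(command)
```

The point of such a sketch is that the ad-hoc scripts can stay as they are; only their entry points are declared in one place, so an evaluator no longer has to discover them from a README.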

- 9. Discussion: how to improve/scale future AE
  - 4) Should we update Artifact Appendices?
    - Two years ago we introduced Artifact Appendix templates to unify artifact submissions and let authors add up to two pages of such appendices to their camera-ready paper: http://cTuning.org/ae/submission.html, http://cTuning.org/ae/submission_extra.html
    - The idea is to help readers better understand what was evaluated and let them reproduce published research and build upon it.
    - We did not receive complaints about our appendices, and many researchers decided to add them to their camera-ready papers (see http://cTuning.org/ae/artifacts.html).
    - Similar AE appendices are now used by other conferences (SC, RTSS): http://sc17.supercomputing.org/submitters/technical-papers/reproducibility-initiatives-for-technical-papers/artifact-description-paper-title
    - We suggest getting in touch with AE chairs from all related conferences to sync on future AE submission and reviewing procedures and avoid fragmentation!

- 10. Discussion: how to improve/scale future AE
  - 5) Decide whether to evaluate all experiments or still allow partial validation or even only artifact sharing
    - We do not yet have a common methodology to fully validate experimental results from the research papers in our domain; we also know that full validation of empirical experiments is very challenging and time-consuming.
    - At the same time, making artifacts available is also extremely valuable to the community (data sets, predictive models, architecture simulators and their models, benchmarks, tools, experimental workflows).
    - Last year we participated in an ACM workshop on reproducible research and co-authored the following ACM Result and Artifact Review and Badging policy (based on our AE experience): http://www.acm.org/publications/policies/artifact-review-badging
    - It suggests using several separate badges:
      - Artifacts publicly available
      - Artifacts evaluated (functional, reusable)
      - Results validated (replicated, reproduced)
    - We are considering using the above policy and badges for the next AE; feedback is welcome!
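Separate badges mean that an artifact can be recognized for being shared even when its results were not fully validated. A minimal Python sketch of that idea, using the badge names from the slide; the `Badge` type and the `award` function are hypothetical illustrations, and treating the five labels as fully independent flags is a simplifying assumption, not a statement of the ACM policy's rules:

```python
from enum import Flag, auto


class Badge(Flag):
    """Badge labels taken from the slide; combinable because they are separate."""
    NONE = 0
    AVAILABLE = auto()    # artifacts publicly available
    FUNCTIONAL = auto()   # artifacts evaluated: functional
    REUSABLE = auto()     # artifacts evaluated: reusable
    REPLICATED = auto()   # results validated: replicated
    REPRODUCED = auto()   # results validated: reproduced


def award(public=False, functional=False, reusable=False,
          replicated=False, reproduced=False):
    """Combine the outcomes of an evaluation into one set of badges."""
    badges = Badge.NONE
    if public:
        badges |= Badge.AVAILABLE
    if functional:
        badges |= Badge.FUNCTIONAL
    if reusable:
        badges |= Badge.REUSABLE
    if replicated:
        badges |= Badge.REPLICATED
    if reproduced:
        badges |= Badge.REPRODUCED
    return badges


if __name__ == "__main__":
    # Artifact sharing plus partial validation: shared and functional only.
    shared_only = award(public=True, functional=True)
    print(Badge.AVAILABLE in shared_only, Badge.REPLICATED in shared_only)
```

With this representation, "only artifact sharing", "partial validation" and "all experiments validated" are simply different combinations of the same flags.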

- 11. Discussion: how to improve/scale future AE
  - 6) Evaluate artifacts for “tool” papers during main reviewing
    - We are now discussing the possibility of validating artifacts for so-called tool papers during main reviewing. Such evaluation would influence the acceptance decision. A similar approach seems to be used at SuperComputing’17 (it will be useful to discuss that with SC’17 AE organizers).
    - Current problems are:
      - The Artifact Evaluation Committee may not be prepared yet (though we have a joint AEC from previous years)
      - If we ask PC members to evaluate papers and artifacts at the same time, it’s an extra burden. Furthermore, PC members may not have the required technical skills (that’s why the AEC is usually assembled from postdocs and research engineers)
      - CGO and PPoPP use double-blind reviewing. However, reviewing artifacts without revealing the authors’ identity is highly non-trivial and places an extra, unnecessary burden on the authors and evaluators (and may kill AE). We should check how/if SC’16/SC’17 solve this problem since they also use double-blind reviewing.

- 12. We need your feedback! Thank you!!!
  - We need your feedback: remember that new AE procedures may affect you at future conferences
    - Contact AE steering committee: http://cTuning.org/committee.html
    - Mailing list: https://groups.google.com/forum/#!forum/collective-knowledge
  - Extra resources
    - Artifact Evaluation Website: http://cTuning.org/ae
    - ACM Result and Artifact Review and Badging policy: http://www.acm.org/publications/policies/artifact-review-badging
    - CK workflow framework: http://cKnowledge.org
    - Community-driven artifact/paper evaluation: http://dl.acm.org/citation.cfm?doid=2618137.2618142