More Related Content

Similar to P01 introduction cvpr2012 deep learning methods for vision

Similar to P01 introduction cvpr2012 deep learning methods for vision (15)

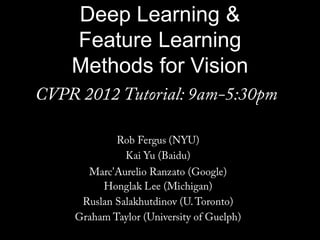

P01 introduction cvpr2012 deep learning methods for vision

- 46. Multi-scale vs Hierarchical

Feature Pyramid; Input Image / Features

Editor's Notes

- All I am going to say about Neuroscience, although techniques do have strong connections.

- Make clear that classic methods, e.g. convnets, are purely supervised.

- Need to bring out differences wrt existing ML stuff, mainly the unsupervised learning part. Make use of unlabeled data (lots of it).

- Restructure to put bigger emphasis on unsupervised. Make clear that classic methods, e.g. convnets, are purely supervised.

- Winder and Brown paper. Slightly smoothed view of things.

- Selection instead of normalization?

- Note pooling is across space, not across Gabor channel. Normalization is really nonlinear (small elements not rescaled).

- Non-maximal suppression across VW. Like an L-Inf normalization. Max = k-means.

- Graph not clear. Explain better. Y-axis is change in value

- Mention Leonardis & Fidler paper

- Too far for labels to trickle down (vanishing gradients). Only information from layer below. Input is supervision.

- Add overall energy

- Not separate operations. Do it at the same time.

- Chris Williams oral link

- Occlusion mask: bottom right quad for sofa interpretation. Can’t decide locally. If you knew the solution, you would know what features to extract.

- DPM is shape hierarchical HOG templates

- Song Chun's clock
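
A rough sketch of two of the notes above: pooling across space only (never across channels), and non-maximal suppression across visual words read as a k-means-style hard assignment. The function names `spatial_max_pool` and `hard_assign`, the non-overlapping window, and the toy inputs are assumptions made for illustration, not taken from the slides.

```python
def spatial_max_pool(feature_maps, size):
    """Max-pool each channel over non-overlapping size x size windows.

    feature_maps: list of channels, each a 2D list (rows x cols).
    Pooling runs across space only; channels are never mixed.
    """
    pooled = []
    for channel in feature_maps:
        rows, cols = len(channel), len(channel[0])
        out = []
        for r in range(0, rows - size + 1, size):
            out_row = []
            for c in range(0, cols - size + 1, size):
                window = [channel[r + i][c + j]
                          for i in range(size) for j in range(size)]
                out_row.append(max(window))
            out.append(out_row)
        pooled.append(out)
    return pooled


def hard_assign(responses):
    """Keep only the strongest visual-word response and zero the rest.

    This is non-maximal suppression across visual words. If each response
    is a similarity to a centroid (e.g. negative distance), picking the
    max is the same choice k-means makes when assigning a point.
    """
    winner = max(range(len(responses)), key=lambda k: responses[k])
    return [1.0 if k == winner else 0.0 for k in range(len(responses))]


# Hypothetical toy inputs: one 4x4 channel and three visual-word responses.
one_channel = [[[1, 2, 0, 0],
                [3, 4, 0, 5],
                [0, 0, 1, 1],
                [0, 7, 1, 1]]]
pooled = spatial_max_pool(one_channel, 2)
assignment = hard_assign([0.2, 0.9, 0.5])
```

With these inputs, `pooled` holds one 2x2 map per input channel and `assignment` has a single nonzero entry at the strongest visual word.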