Extractors in SAP R/3

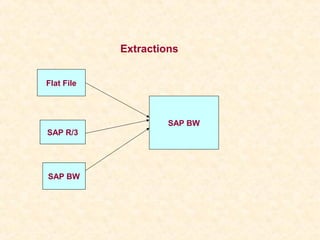

- 1. Extractions [Diagram labels: Flat File, SAP BW, SAP R/3, SAP BW]

- 2. Extractors in SAP R/3

- 3. LIS Extraction •Introduction •Steps Involved in LIS Extraction •Internal Architecture for LIS Extraction •Conclusion •Question and Answers

- 4. LIS Extraction: Introduction 1. LIS extraction is based on information structures. [Diagram: the database tables VBAK, VBAP, VBEP, VBUK, and VBUP feed information structure S260 through update rules.]

- 5. LIS Extraction: Types of Information Structure • SAP-defined information structures: number range S001 – S499 • User-defined information structures: number range S500 – S999
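
The number ranges above can be checked mechanically. The following is a minimal Python sketch, not SAP code: the function name `classify_info_structure` is hypothetical, and it only assumes that a structure name is the letter S followed by three digits, as in S260.

```python
import re


def classify_info_structure(name: str) -> str:
    """Classify an information structure by its number range.

    S001-S499 -> "SAP-defined", S500-S999 -> "user-defined".
    """
    match = re.fullmatch(r"S(\d{3})", name.strip().upper())
    if match is None:
        raise ValueError(f"not an information structure name: {name!r}")
    number = int(match.group(1))
    if 1 <= number <= 499:
        return "SAP-defined"
    if 500 <= number <= 999:
        return "user-defined"
    # S000 falls outside both ranges named on the slide.
    raise ValueError(f"{name!r} is outside the ranges S001-S999")


print(classify_info_structure("S260"))  # SAP-defined
print(classify_info_structure("S500"))  # user-defined
```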

- 6. LIS Extraction: Steps to Create an Information Structure 1. Create field catalogs (MC18), similar to an InfoObject catalog. 2. Create the information structure (MC21), similar to an InfoCube. 3. Set up the update rules (MC24), similar to update rules in BW.

- 7. LIS Extraction (SAP-Defined Information Structure) Step :- 1 Go to transaction code “LBW0”.

- 8. LIS Extraction Steps :- 2 Give the Information Structure Name

- 9. LIS Extraction Steps :- 3 See the Present Setting for the Information Structure

- 10. LIS Extraction Steps :- 4 ( IF Datasource is not generated ) Generating the Data Source

- 11. LIS Extraction Steps :- 5 Deactivating the Delta Update – “LBW1”

- 12. LIS Extraction Do the changes to the updating and Save it

- 13. LIS Extraction Steps :- 6 Running the Statistical setup to fill the data into Information Structure Goto “SBIW” and follow the path

- 14. LIS Extraction

- 15. Version Maintenance: The version is normally “000”. Load the data into version “&(A” first, then copy the data from that version to “000”. Date of Termination: set a date of termination in the past. Click Execute.

- 16. LIS Extraction We can cross check using “RSA3”

- 17. LIS Extraction Copy the data from the version “&(A” to “000” using “LBW2”

- 18. LIS Extraction Steps :- 7 Using “RSA6” transport the Data Source Steps :- 8 Replicate the Data Source in SAP BW and Assign it to Info source and Activate

- 19. LIS Extraction Steps :- 9 In SAP R/3, go to “LBW1”, set the updating to “No Updating”, and save.

- 20. LIS Extraction Steps :- 10 Go back to SAP BW, create the InfoPackage, and run the initial load. Steps :- 11 Once the “Initial delta” is successful, and before running the “delta” load, we need to enable delta updating in SAP R/3.

- 21. LIS Extraction

- 22. LIS Extraction Steps :- 12 Once the Delta is activated in SAP R/3 we can start running “Delta” loads in SAP BW.

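- 23. Internal Architecture for LIS Extraction [Diagram: in SAP R/3, the sales order applications (VA01, VA02, VA03) update the tables VBAK, VBAP, VBEP, VBUK, and VBUP through the V1 and V2 updates; the communication structures MCVBAK and MCVBAP lead to information structure S260. The statistical setup of S260 serves the full update / initial delta load into SAP BW, and the tables S260BIW1 and S260BIW2 serve the delta load.]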

- 24. LIS Extraction Conclusion • V1, V2 • Information Structure • Logistics Information • Disadvantages of LIS

- 26. LO Extraction •Introduction •Steps Involved in LO Extraction •Internal Architecture for LO Extraction •Conclusion •Question and Answers

- 27. LO Extraction Steps :- 1 First Activate the Data Source from the Business Content using “LBWE”

- 28. LO Extraction Steps :- 2 For Customizing the Extract Structure – “LBWE”

- 29. Steps :- 3 Maintaining the Extract Structure

- 30. Steps :- 4 Generating the Data Source. Once the Data Source is generated, make the necessary settings for • Selection • Hide • Inversion • Field Only Known in Exit, and then save the Data Source.

- 31. Steps :- 5 Activate the Data Source Steps :-6 Using “RSA6” transport the Data Source

- 32. Steps :- 7 Replicate the Data Source in SAP BW and Assign it to Info source and Activate Steps :- 8 Running the Statistical setup to fill the data into Set Up Tables Goto “SBIW” and follow the path

- 34. We can cross-check using “RSA3”. Steps :- 9 Go back to SAP BW, create the InfoPackage, and run the initial load. Steps :- 10 Once the “Initial delta” is successful, and before running the “delta” load, we need to set up the “V3 Job” in SAP R/3 using “LBWE”.

- 36. Steps :- 11 Once the Delta is activated in SAP R/3 we can start running “Delta” loads in SAP BW.

- 37. Internal Architecture for LO Extraction

- 38. LO Extraction Conclusion • V3 • Delta Update Modes • Advantages of LO • Comparison of LIS to LO

- 39. Delta Extraction with the V3 Update (II) [Figure: data flow schematic for logistics extraction with the V3 update, plotted over time. The V1 updates of documents 1 to n write the document tables and place V3 module calls in the update tables. Step A: the V3 collective run (for example, daily or hourly) reads and processes all existing V3 module calls as one LUW with one commit and writes to the delta queue (stopped qRFC). Step B: a delta request reads and processes all existing LUWs for the DataSource and transfers them to BW (PSA, ODS, Cube).]

- 40. Update Methods 1) Serialized V3 Update 2) Direct Update 3) Queued Delta 4) Non Serialized V3 Update

- 41. 1) Serialized V3 Update: Since the V3 update does not itself recognize the serialized processing of update data, the “Serialized V3 Update” function was created through several corrections in SAP Basis so that step A can also be serialized. [Figure: data flow schematic for logistics extraction with the V3 update, repeated from the previous slide, with step A (V3 collective run from the update tables to the delta queue) and step B (delta request from the delta queue to BW).]

- 42. • The following problems continue to occur in conjunction with the V3 update in the logistics extraction of transaction data: – The serialized V3 update can only ensure the correct sequence of extraction data for a document if the document is not repeatedly changed within the span of a second. – Furthermore, the serialized V3 update can only ensure the correct sequence of extraction data for a document if the times are permanently and exactly synchronized for all instances in a system. This is because the creation time of the update record, which is determined by the local time for the application server, is used for sorting the update data. – In addition, the serialized V3 update can only ensure the correct sequence of extraction data for a document if it previously had no errors in the V2 update. This is because the V3 update only processes the update data that is successfully processed with the V2 update. – Independently of the serialization, update errors that occur in the V2 update of a transaction and which cannot be reposted have the consequence that the V3 updates for the transaction that are still open can never be processed. This can thus lead to inconsistencies in the data in the BW system.
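
The first limitation above (a document changed more than once within one second) can be illustrated with a small Python sketch. It is an illustration only, not the SAP implementation: the record layout, the document numbers, and the function name are hypothetical, and the only assumption taken from the slide is that update records are sorted by a creation time with one-second resolution.

```python
from collections import defaultdict
from datetime import datetime
from typing import NamedTuple


class UpdateRecord(NamedTuple):
    document: str         # hypothetical document number
    created_at: datetime  # local time of the application server
    action: str


def documents_with_ambiguous_order(records):
    """Return the documents whose update records cannot be ordered reliably.

    Two records of the same document that share the same second have the
    same sort key, so sorting by creation time cannot tell which came first.
    """
    seconds_seen = defaultdict(set)
    ambiguous = set()
    for record in records:
        second = record.created_at.replace(microsecond=0)
        if second in seconds_seen[record.document]:
            ambiguous.add(record.document)
        seconds_seen[record.document].add(second)
    return sorted(ambiguous)


records = [
    UpdateRecord("4711", datetime(2002, 5, 1, 10, 0, 0, 100000), "create"),
    UpdateRecord("4711", datetime(2002, 5, 1, 10, 0, 0, 900000), "change"),
    UpdateRecord("4712", datetime(2002, 5, 1, 10, 0, 1), "create"),
    UpdateRecord("4712", datetime(2002, 5, 1, 10, 0, 3), "change"),
]
print(documents_with_ambiguous_order(records))  # ['4711']
```

The same sort key also explains the second limitation: if two application servers disagree about the local time, the creation times of their records are not comparable, even when the changes are seconds apart.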

- 43. • Multiple Language Problems in a Serialized V3 Update: – If there are application documents within an R/3 system from several different users that are logged on to the system in different languages, the V3 collective run always processes the update entries for just one language within a process call. – Subsequently, a process call for the update entries for the documents created in the next language is started automatically. Thus, with the serialized V3 update, only the update entries can be processed that were created in a direct, timely sequence and with the same logon language. If the language is changed within the update entry sequence, the V3 collective updating process ends and is reset with the new language. With each reset, the update table VBHDR is read from the database. If a high number of entries exists in the update tables, this can lead to the processing of update data requiring so much time that, concurrently, more new update records are created than are processed.

- 44. Multiple Language Problems in a Serialized V3 Update [Figure: comparison of the normal and the serialized V3 collective run over update table VBHDR, where each step is a full table scan. The normal run needs one step per logon language (step 1: the :EN: entries, step 2: the :DE: entries). The serialized run starts a new step at every language change in the entry sequence (steps 1 to 5 alternate between :EN: and :DE:).]
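
The effect of language changes on the number of process calls can be sketched in a few lines of Python. This is a simplified model under stated assumptions: the entry sequence is hypothetical, the serialized run is modeled as one process call per run of consecutive entries with the same logon language, and the normal run as one process call per language.

```python
from itertools import groupby


def serialized_process_calls(languages):
    """One call per run of consecutive entries with the same language."""
    return sum(1 for _ in groupby(languages))


def normal_process_calls(languages):
    """One call per distinct logon language."""
    return len(set(languages))


# Hypothetical update entries in creation order, by logon language.
entries = ["EN", "EN", "DE", "EN", "DE", "DE", "EN"]

print(serialized_process_calls(entries))  # 5
print(normal_process_calls(entries))      # 2
```

Since each process call re-reads the update table, the serialized count is the one that grows with every language switch in the sequence.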

- 45. Data Flow Schematic for Logistics Extraction with Direct Delta [Figure: plotted over time. For documents 1 to n, the V1 update writes the document tables and, through the extraction module, writes the extraction data to the delta queue (stopped qRFC) within the V1 update. Step B: a delta request reads and processes all existing LUWs for the DataSource and transfers them to BW (PSA, ODS, Cube).]

- 46. Data Flow Schematic for Logistics Extraction with Queued Delta [Figure: plotted over time. For documents 1 to n, the V1 update writes the document tables and fills the extraction queue. Step A: the extraction collective run (recommended hourly) reads and processes all existing entries of the extraction queue as one LUW with one commit and writes to the delta queue (stopped qRFC). Step B: a delta request reads and processes all existing LUWs for the DataSource and transfers them to BW (PSA, ODS, Cube).]

- 48. CO-PA Extraction

- 49. Position of the CO-PA Application • CO-PA collects all the OLTP data needed to calculate contribution margins (sales, cost of sales, overhead costs). • CO-PA also has powerful reporting tools and planning functions; however, CO-PA reporting is limited in two ways: • The integrated cross-application reporting concept is not as differentiated as it is in BW. • The OLTP system is optimized for transaction processing, and a high reporting workload has a negative impact on the overall performance of the system.

- 50. Flow of Actual Values

- 51. Flow of Actual Values • During billing in SD, revenues and payments are transferred to profitability segments in Profitability Analysis. At the same time, sales quantities are valuated using the standard cost of goods manufactured. • In Overhead Cost Controlling, primary postings are made to objects in Overhead Cost Controlling and assigned to the relevant cost object. The actual cost of goods manufactured is also assigned to cost objects, and at the same time the performing cost centers are credited.

- 52. Flow of Actual Values • The production variances calculated for the cost objects (for example, production orders), i.e. the difference between the actual cost of goods manufactured and the standard costs, are divided into variance categories and settled to profitability segments. • “What are the top products and customers in our different divisions?” is one of the typical questions that can be answered with the CO-PA module.

- 53. Basic Concepts • Characteristics are the fields in an operating concern according to which data can be differentiated in Profitability Analysis. • Each characteristic in an operating concern has a series of valid characteristic values. • A profitability segment is a fixed combination of valid characteristic values.

- 54. Characteristics • Some characteristics are predefined in the operating concern, such as Material, Customer, and Company Code. In addition to these fixed characteristics, we can define up to 50 characteristics of our own. In most cases, 10 to 20 characteristics are enough to satisfy our profitability analysis requirements.

- 55. Value Fields • Key Figures like revenue, cost of goods sold, overhead costs are stored in value fields.

- 57. Organizational Structure • The value fields and characteristics required to conduct detailed analysis vary from industry to industry and between individual customers. • In CO-PA we can configure the structure of one or more operating concerns in each individual installation. • An operating concern is an organizational structure that groups controlling areas together in the same way that controlling areas group company codes together.

- 58. Data Base Structures in CO-PA

- 59. Data Base Structures in CO-PA • Actual line item table: CE1xxxx • Plan line item table: CE2xxxx • Line items contain some information at document level that in most cases is too detailed for analysis, for example the CO-PA document number, the sales document number, and the posting date. • CO-PA maintains a summarization of the data that is used by all CO-PA functions, such as reporting, planning, assessments, and settlements.

- 61. Data Base Structures in CO-PA • Segment table: CE4xxxx • The characteristics that describe the market are first separated from the rest of the line items. • Each combination of characteristic values is stored under a profitability segment number. The link between the profitability segment number and the characteristic values is maintained in the segment table.

- 62. Data Base Structures in CO-PA • Segment Level: CE3xxxx • The Value fields are summarized at profitability segment and period levels and stored together with these fields in Table CE3xxxx. • This table contains total values of the period for each Profitability segment number.
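
The split between segment table and segment level can be sketched in Python. This is a toy model under stated assumptions, not the real CE1/CE3/CE4 table layout: the field names, the sample line items, and the function `build_segments` are hypothetical. It shows each characteristic combination receiving a segment number, and the value fields being totalled per segment number and period.

```python
from collections import defaultdict

CHARACTERISTICS = ("customer", "material")  # hypothetical characteristics
VALUE_FIELDS = ("revenue",)                 # hypothetical value field

# Hypothetical actual line items (the role played by CE1xxxx).
line_items = [
    {"customer": "C1", "material": "M1", "period": "001.2002", "revenue": 100.0},
    {"customer": "C1", "material": "M1", "period": "001.2002", "revenue": 50.0},
    {"customer": "C2", "material": "M1", "period": "001.2002", "revenue": 70.0},
    {"customer": "C1", "material": "M1", "period": "002.2002", "revenue": 30.0},
]


def build_segments(items):
    """Return (segment_table, segment_level) built from line items.

    segment_table: characteristic combination -> segment number (CE4 role)
    segment_level: (segment number, period) -> value field totals (CE3 role)
    """
    segment_table = {}
    segment_level = defaultdict(lambda: defaultdict(float))
    for item in items:
        combination = tuple(item[name] for name in CHARACTERISTICS)
        # A new combination gets the next free segment number.
        segment = segment_table.setdefault(combination, len(segment_table) + 1)
        for field in VALUE_FIELDS:
            segment_level[(segment, item["period"])][field] += item[field]
    return segment_table, {key: dict(totals) for key, totals in segment_level.items()}


segment_table, segment_level = build_segments(line_items)
print(segment_table)
print(segment_level)
```

Four line items collapse into two segment numbers and three totals records, which is the summarization the slides describe.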

- 64. Storage Procedure • We can compare an operating concern, represented by its segment table and segment level, to an InfoCube. An InfoCube comprises dimension tables (segment table) and the fact table (segment level). • Unlike the fact table, the segment level key contains other keys, such as the processing type, in addition to the key field from the segment table. • Characteristics in the operating concern correspond to characteristics (or attributes) in the InfoCube. • Value fields can be regarded as key figures.

- 65. Storage Procedure • Summarization levels in an operating concern have the same function as aggregates for an InfoCube. The difference is that aggregates for an InfoCube are managed together with the InfoCube itself, while summarization levels are updated at regular intervals, usually daily. • Line items in CO-PA are comparable to an Operational Data Store.

- 67. Data Staging Overview • To provide DataSources for BW, all CO-PA DataSources must be generated in the source system. • DataSources can be defined at operating concern and client level. • A DataSource contains the following information: • Name of the operating concern • Client • Subset of the characteristics • Subset of the value fields • Time stamp indicating which data has already been loaded into BW.

- 68. Display IMG

- 69. Creating Data Source • Since a DataSource is always defined at operating concern and client level, a standard name is always generated, which starts with 1_CO_PA_<%CL>_<%ERK>. We can change this name if necessary; however, the prefix 1_CO_PA is mandatory.

- 71. Data Source-Segment Table • Characteristics from the segment table are the characteristics that are maintained in transaction KEQ3. • By Default all characteristics are selected.

- 72. Data Source: Account-Based • When we generate the CO-PA DataSource, select the account-based option. • The fields KOKRS, BUKRS, and KSTAR are compulsory. • There are no characteristics available for line items, because account-based DataSources do not contain characteristics. • There are no value fields or calculated key figures available. • KOKRS and PERIO must be selected as selection fields.

- 75. Setting up the Delta BW: Setting up the Delta Upload Selection options for the update procedure Selecting the type of loading process © SAP AG 2002

- 76. FI-SL Extraction

- 77. Contents • FI-SL: Positioning and Overview • Data Structures in FI-SL • Generating Data Source • Extractor • Extracting Application Data

- 78. Extracting Data from FI-SL

- 79. FI-SL: Positioning • FI-SL is a system in which data (planned and actual) from different levels of OLTP applications, for example FI-GL and CO-CCA, can be combined. • In R/3, FI-SL enhances the functions and usability of these applications. • FI-SL includes planning functions and reporting tools. FI-SL reporting is, however, restricted: • Cross-application reporting is not as diverse as SAP BW reporting. Also, the OLTP system is optimized for transaction processing, and a high reporting workload would have a negative impact on overall performance.

- 80. FI-SL Overview

- 81. FI-SL Overview • In FI-SL application we can define our own ledgers for reporting purpose, we can run these ledgers with any account assignment objects from various applications ( account, cost center, business area, profit center). • Modules available in SL give us several options for manipulating data that has been transferred from other applications of SAP and external systems into the SL. • Ex: Collecting Information, combining information, Forming totals, modifying totals and so on.

- 82. FI-SL Overview • SL is a receiver system in which data from various other applications can be stored. • Flexible data structures (FDS): the addition of an extra field to a financial accounting document is an example of FDS. This field can be filled either in the application when the document is posted or through a data upload. • A ledger is updated either at company code level or at company level.

- 83. FI-SL Overview • Adjustment postings (direct data entry) can be made in the FI-SL system. They allow us to: • post different versions of documents • post documents with a balance not equal to zero • enter additional currency amounts manually. • Fiscal year variant: determines the number of periods in a fiscal year and enables us to create weekly or monthly reports. • Validations: allow us to check the validity of data when it enters the FI-SL system.

- 84. Data Flow

- 85. Data Flow • In addition to data from FI, CO, MM, and SD, external data and data that is entered directly can also be posted into FI-SL. • The update takes place either online or as subsequent processing. • With subsequent processing, a predefined number of data records is transferred to the FI-SL database tables at a certain point in time. • We can use various functions to update data in FI-SL. • Validation: checks the data that is going to be updated against freely definable rules. • Substitution: replaces the data with modified data before the update.

- 86. Data Flow • Field transfer: the fields that are transferred determine which characteristics are used in the FI-SL system. • Operations available: • The currency translation function translates amounts that have been posted to the ledger in the FI-SL system. • Balance carry forward: at the end of the fiscal year, we use this function to transfer actual values and planned values from the previous fiscal year into the new fiscal year. • We can create rollup ledgers containing cumulated and summarized data from one or more of the other ledgers to speed up report processing time.
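
The three update functions named in the data flow (validation, substitution, field transfer) can be read as a small pipeline. The Python sketch below is a conceptual illustration only, not how FI-SL is implemented or configured: the function `post_to_ledger`, the field names, the rule, and the default business area "9900" are all hypothetical.

```python
def post_to_ledger(record, validations, substitutions, transfer_fields):
    """Apply validation, substitution, and field transfer to one record.

    validations:     list of (error message, rule) pairs; a rule returns
                     True when the record is acceptable
    substitutions:   field name -> function computing the replacement value
    transfer_fields: names of the fields that are kept in the ledger
    """
    # Validation: reject the record if any rule fails.
    for message, rule in validations:
        if not rule(record):
            raise ValueError(message)
    # Substitution: replace field values before the update.
    updated = dict(record)
    for field, substitute in substitutions.items():
        updated[field] = substitute(updated)
    # Field transfer: only the transferred fields reach the ledger.
    return {field: updated[field] for field in transfer_fields}


validations = [("amount must not be zero", lambda r: r["amount"] != 0)]
substitutions = {"business_area": lambda r: r["business_area"] or "9900"}
transfer_fields = ("account", "cost_center", "business_area", "amount")

document = {
    "account": "400000",
    "cost_center": "1000",
    "business_area": "",
    "amount": 250.0,
    "text": "manual posting",
}
ledger_record = post_to_ledger(document, validations, substitutions, transfer_fields)
print(ledger_record)
```

In this sketch the empty business area is substituted, and the free-text field is dropped because it is not among the transferred fields.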

- 87. Data Structures in FI-SL: Table Group ZZ [Diagram: object table_1 (ZZO) with the key fields Client and Obj.No., followed by the sender/receiver characteristics; summary table (ZZT) with the key fields Client, Ledger, Record Type, Version, Fiscal Year, Obj.No. Sender, and Obj.No. Receiver, followed by the key figures.]

- 88. Data Structures in FI-SL: Table Group ZZ • When we create an FI-SL table group, up to 5 tables with fixed naming conventions are created: • Summary table (…T) • Actual line item table (…A) • Plan line item table (…P) • Object table_1 (object/partner) (…O) • Optional object table_2 (movement attribute) (…C)

- 89. Data Structures in FI-SL: Table Group ZZ • In the Object Table an object number is assigned to a combination of characteristics.( Similar to Dimension Table in BW) • The Key figures values are stored in a Summary Table along with the resulting Object Numbers. Similar to the Fact Table in BW

- 90. Summary Table: Summary Table ZZT in FI-SL
  - Client
  - Ledger
  - Record Type (to distinguish between plan/actual)
  - Version
  - Fiscal Year
  - Obj.No. Sender (display on object table_1)
  - Obj.No. Receiver
  - ...
  - Currency Key
  - Base Unit of Measure
  - RPMAX: Period (period block: RPMAX contains the period value for the key figures TSL16, HSL16, and KSL16)
  - TSLVT: balance carried forward in transaction currency
  - TSL01 ... TSL16: transaction totals for periods in transaction currency
  - HSLVT: balance carried forward in 2nd currency
  - HSL01 ... HSL16: transaction totals for periods in 2nd currency (e.g. local currency)
  - KSLVT: balance carried forward in 3rd currency
  - KSL01 ... KSL16: transaction totals for periods in 3rd currency (e.g. group currency)
  - MSLVT: quantity balance carried forward
  - MSL01 ... MSL16: transaction totals for periods in base unit of measure

- 91. Summary Table • The period block is a particular feature of the summary table data model: the key field RPMAX (period) specifies the meaning of the key figures TSL16, HSL16, and KSL16. If the RPMAX value is 016, the values of these key figures refer to period 016 of the fiscal year variant that is set in Customizing. • Handling currencies: the key figures TSL01 to TSL16 are always assigned to the transaction currency that is specified in the RTCUR field (currency key).

- 92. Handling Currencies • The Key Figures HSL01 to HSL16 are assigned to the secondary currency that is specified in Customizing: • EX: Local Currency. • The Key Figures KSL01 to KSL16 are assigned to the third currency that is specified in Customizing. Ex: Company Code Currency. • The Key Figures MSL01 to MSL16 are assigned to quantity Value.
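
To report on a summary record, the 16 period columns usually have to be turned into one row per period. The Python sketch below shows the idea under an explicit assumption that goes beyond the slides: slot 16 refers to period RPMAX (as stated for TSL16, HSL16, and KSL16), and the other slots are taken to be the 15 consecutive periods before it. The function name, the sample amounts, and the second example with RPMAX 032 are hypothetical.

```python
PERIODS_PER_BLOCK = 16
KEY_FIGURE_PREFIXES = ("TSL", "HSL", "KSL", "MSL")


def unpivot_period_block(record):
    """Turn the TSLnn/HSLnn/KSLnn/MSLnn columns into one row per period."""
    rpmax = int(record["RPMAX"])
    # Assumption: the block covers the 16 periods that end at RPMAX.
    first_period = rpmax - PERIODS_PER_BLOCK + 1
    rows = []
    for slot in range(1, PERIODS_PER_BLOCK + 1):
        row = {"period": first_period + slot - 1}
        for prefix in KEY_FIGURE_PREFIXES:
            # Column names such as TSL01 ... TSL16; missing columns count as 0.
            row[prefix] = record.get(f"{prefix}{slot:02d}", 0.0)
        rows.append(row)
    return rows


summary_record = {"RPMAX": "016", "TSL01": 100.0, "HSL01": 90.0, "TSL16": 5.0}
rows = unpivot_period_block(summary_record)
print(rows[0])   # period 1
print(rows[15])  # period 16
```

Under the same assumption, a record with RPMAX 032 would map its first slot to period 17.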

- 93. Ledgers in FI-SL • A ledger is a closed reporting area that always refers to exactly one summary table. • A ledger is a logical part of the summary table. • We can define several ledgers for one summary table. • A ledger is created for a summary table in Customizing. • The physical storage of transaction data always remains in the tables of the table group.

- 94. Updating FI-SL in BW • The BW DataSource prepares the data that is going to be updated in BW. • SAP is not able to ship FI-SL DataSources with BW Business Content for non-standard ledgers. • To provide an FI-SL DataSource for BW, an extract structure must be generated for the FI-SL summary table, and finally we have to define the DataSource for the ledger of that summary table.

- 95. Setting Up FI-SL Data Source Step 1 • Step 1: Generate the FI-SL transfer structure. T-code: SBIW • Path: Business Information Warehouse → Settings for Application-Specific Data Sources → Financial Accounting - Special Purpose Ledger. • This structure is used to transfer transaction data from ledgers to BW. • The structure contains fields from the summary tables and object tables.

- 96. Setting Up FI-SL Data Source Step 2 • Step 2: Create the InfoSource (= DataSource) for the ledger. In step 2, define a DataSource for the ledger and assign this DataSource to the ledger. • Naming convention: the name must start with the number 3. The system proposes a name with the prefix 3FI_SL and the name of the ledger. • Note: the DataSource delivers transaction data from the FI-SL ledger at totals record level.
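
The naming convention can be expressed as two small helper functions. This Python sketch is illustrative only: both function names are hypothetical, and the underscore between the prefix 3FI_SL and the ledger name is an assumption, since the slide only states that the proposed name consists of the prefix and the ledger name.

```python
def proposed_datasource_name(ledger: str) -> str:
    """Build a proposed name from the prefix 3FI_SL and the ledger name.

    The "_" separator is an assumption made for this sketch.
    """
    return f"3FI_SL_{ledger.strip().upper()}"


def is_valid_datasource_name(name: str) -> bool:
    """Check the one rule stated on the slide: the name starts with 3."""
    return name.startswith("3")


name = proposed_datasource_name("zl")  # "zl" is a hypothetical ledger
print(name)
print(is_valid_datasource_name(name))
print(is_valid_datasource_name("FI_SL_ZL"))
```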

- 97. Define Data Source for Ledger