Preliminary Examination Proposal Slides

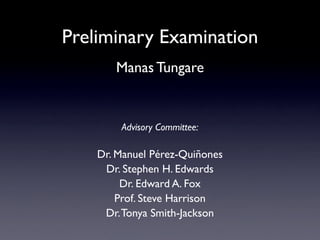

- 1. Preliminary Examination: Manas Tungare • Advisory Committee: Dr. Manuel Pérez-Quiñones, Dr. Stephen H. Edwards, Dr. Edward A. Fox, Prof. Steve Harrison, Dr. Tonya Smith-Jackson

- 2. Talk outline • 0 to ~45 min: presentation and questions • Afterwards: additional comments, suggestions • OK to record audio? • Your questions/comments are welcome at any time. • Slides contain only major citations; the document contains full citations.

- 3. Talk outline • Introduction • Problem statement • A review of my work so far • Research questions • How my research plan will address these • Planned schedule

- 4. Introduction

- 5. Human-Computer Interaction • Multi-Platform User Interfaces • Personal Information Management

- 8. Problems and workarounds • Constant need for manual synchronization • Give up using multiple computers • Copy addresses and phone numbers on sticky notes • Use USB flash drives to cart data around • Email files to themselves

- 9. Evaluation issues in PIM (paraphrased from discussions at the CHI 2008 Workshop on Personal Information Management, April 2008) • Evaluating new PIM tools • Comparing PIM tools developed by diverse research groups • Choosing suitable reference tasks for PIM • Measures that are valid across tasks

- 11. Understanding PIM • Understanding users and how they use multiple devices to accomplish PIM • Identify common device configurations in information ecosystems • Identify tasks performed on each device • Identify problems and frustrations

- 12. Mental workload in Information Ecosystems • What is the mental workload incurred by users when they are trying to use multiple devices for personal information management? • For those tasks that users have indicated are frustrating for them, do the alternative strategies result in lower mental workload? • Are multi-dimensional subjective workload assessment techniques (such as NASA TLX) accurate indicators of operator performance in information ecosystems?

- 13. Research: Phase I Understanding users’ PIM practices across devices

- 14. Research Questions • Devices and activities • What is the distribution of users who use multiple devices? Most common devices? Common PIM tasks? Tasks bound to a device? • The use of multiple devices together • Factors in choice of new devices • Device failures

- 15. Research Questions • Devices and activities • The use of multiple devices together • Which devices were commonly used in groups? Methods employed to share data among these devices? Problems and frustrations? • Factors in choice of new devices • Device failures

- 16. Research Questions • Devices and activities • The use of multiple devices together • Factors in choice of new devices • What are some of the factors that influence users’ buying decisions for new devices? Integrating a device into the current set of devices? • Device failures

- 17. Research Questions • Devices and activities • The use of multiple devices together • Factors in choice of new devices • Device failures • How often do users encounter failures in their information ecosystems? Common types of failures? Coping with failure?

- 18. Survey: August 2007 • Knowledge workers (N=220) • Highlights from preliminary results: • 96% use at least one laptop • 71% use at least one desktop • Lots of frustrated users (as expected) • Longer discussion in [Tungare and Pérez-Quiñones 2008]

- 19. Survey analysis • Content analysis to uncover common tasks • Quantitative analysis to determine the typical set of devices for the experiment • Recruit two students to code a random subset of the survey responses; ensure high inter-rater reliability • Design the Phase II experiment based on these findings
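Selecting the responses to be double-coded can be made reproducible with a seeded random sample. A minimal Python sketch; the 20% fraction, the seed, and the helper name `select_double_coding_subset` are illustrative assumptions, not the study's actual parameters.

```python
import random


def select_double_coding_subset(response_ids, fraction=0.2, seed=2008):
    """Return a sorted, reproducible random subset of survey response IDs.

    Hypothetical helper: the same subset would be handed to each coder so
    that their codes can later be compared for inter-rater reliability.
    """
    ids = list(response_ids)
    if not ids:
        raise ValueError("no responses to sample from")
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be in (0, 1]")
    k = max(1, round(len(ids) * fraction))
    rng = random.Random(seed)  # fixed seed, so the subset can be regenerated
    return sorted(rng.sample(ids, k))


# Example with 220 response IDs (1..220); fraction and seed are assumptions.
subset = select_double_coding_subset(range(1, 221), fraction=0.2)
print(len(subset), subset[:5])
```

Fixing the seed means the subset can be regenerated exactly if a coder drops out and a replacement is recruited.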

- 20. Content analysis: example “The last device I acquired was a cell phone from Verizon. I would have liked to synchronize data from my laptop or my PDA with it but there seems to be no reasonable way to do so. I found a program that claimed to be able to break in over bluetooth but it required a fair amount of guess work as to data rates etc and I was never able to actually get it to do anything. In the end I gave up. Fortunately I dont know that many people and I usually have my PDA with me so it isnt a big deal but frankly I dont know how Verizon continues to survive with the business set...”

- 21. Content analysis: example “The last device I acquired was a cell phone [Device 1] from Verizon. I would have liked to synchronize data [Task] from my laptop [Device 2] or my PDA [Device 2] with it but there seems to be no reasonable way to do so [Problem 1]. I found a program that claimed to be able to break in over bluetooth but it required a fair amount of guess work as to data rates etc and I was never able to actually get it to do anything [Problem 2]. In the end I gave up. Fortunately I dont know that many people and I usually have my PDA with me so it isnt a big deal [Conclusion] but frankly I dont know how Verizon continues to survive with the business set...”

- 22. Research: Phase II Measurement of mental workload and task performance of users while they perform representative PIM tasks

- 23. Mental workload • [...] “That portion of an operator’s limited capacity actually required to perform a particular task.” [O’Donnell and Eggemeier, 1986] • Low to moderate levels of workload are associated with acceptable levels of operator performance [Wilson and Eggemeier, 2006] • Often used as a measure of operator performance

- 24. Mental workload as a measure of operator performance • Alternative: direct measurement of task performance: • Time taken to perform task, • Number of errors, etc. • Task metrics are more difficult to measure • Need instrumentation of equipment • Scores cannot be compared across tasks

- 25. Measuring mental workload • NASA TLX: Task Load Index • SWAT: Subjective Workload Assessment Technique • WP: Workload Profile [Figure 8.6: NASA Task Load Index rating sheet (Hart and Staveland): Mental Demand, Physical Demand, Temporal Demand, Performance, Effort, and Frustration, each rated on a scale of 21 gradations from Very Low to Very High (Performance: Perfect to Failure)]

- 26. Validity of workload measures • Mental workload consistently shown to be negatively correlated with performance metrics [Bertram et al. 1992] • Airline cockpits [Ballas et al. 1992] • Navigation [Schryver 1994] • Multi-device computing environments: information ecosystems [None yet!]

- 27. Research Question 1 • RQ: What is the mental workload incurred by users in certain common tasks that were considered difficult in Phase I? • Hypothesis: Subjective assessment of mental workload will be high in these tasks • Experiment: Measure mental workload for several representative tasks performed in information ecosystems

- 28. Research Question 2 • RQ: Is a decrease in mental workload a factor that motivates changes in users’ information management strategies? • Hypothesis: Users adopt strategies that will eventually lead to lowered mental workload • Experiment: Compare mental workload for tasks identified as difficult, and for their respective workarounds
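If each participant performs both the difficult task and its workaround (a within-subjects design, which is an assumption here), the comparison reduces to a paired test on per-participant differences in overall TLX score. A minimal sketch of the paired t statistic; the scores are invented for illustration and `paired_t` is a hypothetical helper.

```python
import math
import statistics


def paired_t(difficult, workaround):
    """Paired t statistic for per-participant workload differences.

    difficult[i] and workaround[i] are participant i's overall TLX scores
    (0-100). A positive t means the workaround was rated lower on average.
    Returns (mean difference, t, degrees of freedom).
    """
    if len(difficult) != len(workaround) or len(difficult) < 2:
        raise ValueError("need two equal-length samples with at least 2 pairs")
    diffs = [d - w for d, w in zip(difficult, workaround)]
    n = len(diffs)
    mean_diff = statistics.mean(diffs)
    sd_diff = statistics.stdev(diffs)  # sample SD, n - 1 in the denominator
    if sd_diff == 0:
        raise ValueError("all differences are equal; t is undefined")
    t = mean_diff / (sd_diff / math.sqrt(n))
    return mean_diff, t, n - 1


# Invented scores for five hypothetical participants.
difficult = [70, 65, 80, 55, 60]
workaround = [50, 55, 60, 50, 45]
mean_diff, t, df = paired_t(difficult, workaround)
print(mean_diff, round(t, 2), df)
```

The p-value comes from a t distribution with n - 1 degrees of freedom; SciPy's `scipy.stats.ttest_rel` runs the same test, and `scipy.stats.wilcoxon` is a non-parametric alternative if the differences are far from normal.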

- 29. Research Question 3 • RQ: Are subjective assessments of mental workload accurate indicators of operator performance in this domain? • Hypothesis: Mental workload measured by NASA TLX (including existing dimensions, and possibly new dimensions) can be used to predict operator performance • Experiment: (Attempt to) correlate workload assessments with operator performance
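One way to (attempt to) correlate workload with performance is a Pearson correlation between each trial's overall TLX score and a task metric such as error count. A minimal sketch; the data are invented, error count is an assumed choice of performance metric, and `pearson_r` is a hypothetical helper.

```python
import math


def pearson_r(xs, ys):
    """Pearson product-moment correlation between two equal-length samples."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two equal-length samples with n >= 2")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant sample")
    return sxy / math.sqrt(sxx * syy)


# Invented data: overall TLX score and number of errors for five trials.
tlx_scores = [30, 45, 50, 65, 80]
error_counts = [1, 2, 2, 4, 5]
r = pearson_r(tlx_scores, error_counts)
print(round(r, 3))
```

Because more errors means worse performance, a positive r here corresponds to workload being negatively correlated with performance. With few participants or strongly ordinal data, a rank correlation (Spearman) may be the safer choice.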

- 30. Experiment design • Representative tasks from the content analysis of Phase I • Identify devices, tasks, strategies, etc. and use these to construct benchmark tasks for participants • Measure mental workload • Other benchmark tasks too, to provide a baseline

- 31. Expected contributions • Understanding users and how they use multiple devices to accomplish PIM • Comparing workloads in different information ecosystems • Formative feedback for designers • Validating NASA TLX as an accurate predictor of task performance in information ecosystems

- 32. Schedule (May 08 to Sep 08) • Perform content analysis for Phase I • Determine tasks • Recruitment, IRB, scheduling study • Conduct experiments • Perform analysis • Write dissertation • Prepare publications

- 33. Questions & comments • Thank you! • Note to self: Turn off audio recording before committee deliberation.

- 35. Mental workload and task performance [O’Donnell and Eggemeier 1986] [Figure: performance plotted against mental workload]

- 36. Why NASA TLX • Higher correlation with performance as compared to SWAT and WP [Rubio & Díaz, 2004] • Validated in several environments since 1988 [several, 1988-present]

- 37. NASA TLX procedure • Rate the task on each scale, from Very Low to Very High in 20 steps, e.g. Mental Demand (“How mentally demanding was the task?”), Physical Demand (“How physically demanding was the task?”), Temporal Demand (“How hurried or rushed was the pace of the task?”) [Figure: NASA Task Load Index rating sheet]
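Scoring the ratings is simple arithmetic: a mark at one of the 21 positions of a 20-step scale maps to 0 to 100 in increments of 5, and the unweighted "raw TLX" variant, which skips the pairwise comparisons, averages the six scale scores. A minimal sketch; the scale keys, helper names, and example marks are illustrative assumptions.

```python
# Keys for the six NASA TLX scales (names chosen for this sketch).
TLX_SCALES = ("mental", "physical", "temporal", "performance", "effort", "frustration")


def mark_to_score(step):
    """Convert a mark at position 0..20 on a 20-step scale to a 0-100 score."""
    if not isinstance(step, int) or not 0 <= step <= 20:
        raise ValueError("step must be an integer from 0 to 20")
    return step * 5


def raw_tlx(marks):
    """Unweighted ('raw') TLX: the mean of the six 0-100 scale scores.

    Performance is anchored Perfect (0) to Failure (100), so on every scale
    a higher score means higher workload.
    """
    missing = set(TLX_SCALES) - set(marks)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    return sum(mark_to_score(marks[s]) for s in TLX_SCALES) / len(TLX_SCALES)


# Hypothetical marks for one participant and one task.
marks = {"mental": 14, "physical": 2, "temporal": 10,
         "performance": 6, "effort": 12, "frustration": 16}
print(raw_tlx(marks))
```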

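- 38. NASA TLX procedure • Pairwise comparisons: for each pair of scales (e.g. Mental Demand vs. Frustration Level), the participant selects the one that contributed more to the workload of the task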
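In the weighted version, each scale's weight is the number of the 15 pairwise comparisons it won (0 to 5, summing to 15), and the overall score is the weighted sum of the 0-100 scale scores divided by 15. A minimal sketch; the scale keys, helper names, and the example ranking and scores are hypothetical.

```python
from itertools import combinations

TLX_SCALES = ("mental", "physical", "temporal", "performance", "effort", "frustration")
ALL_PAIRS = [frozenset(p) for p in combinations(TLX_SCALES, 2)]  # 15 pairs


def tlx_weights(choices):
    """Tally pairwise choices into weights.

    choices maps each pair (a frozenset of two scale keys) to the scale the
    participant judged the more important source of workload.
    """
    if set(choices) != set(ALL_PAIRS):
        raise ValueError("need exactly one choice for each of the 15 pairs")
    weights = dict.fromkeys(TLX_SCALES, 0)
    for pair, winner in choices.items():
        if winner not in pair:
            raise ValueError(f"{winner!r} is not in pair {sorted(pair)}")
        weights[winner] += 1
    return weights  # each weight is 0..5; together they sum to 15


def weighted_tlx(scores, weights):
    """Overall workload: sum of weight * score (0-100), divided by the weight total."""
    total = sum(weights.values())  # 15 when every pair was judged
    return sum(weights[s] * scores[s] for s in TLX_SCALES) / total


# Hypothetical participant whose choices follow this ranking.
ranking = ["mental", "frustration", "effort", "temporal", "performance", "physical"]
choices = {pair: min(pair, key=ranking.index) for pair in ALL_PAIRS}
weights = tlx_weights(choices)
scores = {"mental": 70, "physical": 10, "temporal": 50,
          "performance": 30, "effort": 60, "frustration": 80}
print(weights, round(weighted_tlx(scores, weights), 2))
```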

- 39. Quantitative analysis [Figure: number of participants using these devices as a group; device types: Work Desktop, Home Desktop, Laptop, Media player, Cell phone, PDA cell phone; participant counts per device combination: 52, 32, 29, 25, 24, 22, 20, 19, 18]
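Counting how many participants use each combination of devices amounts to tallying each participant's device set. A minimal sketch, assuming each survey response can be reduced to the set of device types the participant reported; the responses are invented, and counting exact combinations (rather than every co-occurring subset) is an assumption about how the groups were defined.

```python
from collections import Counter


def count_device_groups(responses):
    """Count participants per exact combination of device types.

    responses: one iterable of device names per participant. Each combination
    is stored as a frozenset so that the order of devices does not matter.
    """
    return Counter(frozenset(devices) for devices in responses)


# Invented responses (not the survey data).
responses = [
    ["laptop", "cell phone"],
    ["laptop", "cell phone", "work desktop"],
    ["cell phone", "laptop"],
    ["work desktop", "laptop", "cell phone"],
    ["laptop", "media player"],
]
for group, n in count_device_groups(responses).most_common():
    print(n, sorted(group))
```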

- 40. Content analysis • Techniques from [Neuendorf 2004, Krippendorff 2004] • Inter-rater reliability with 2 additional coders (expected Cohen’s κ of 0.6 to 0.7) • Purpose of content analysis is to design the experiment, not to draw conclusions • Coding: a priori versus emergent • Challenge: converging on representative tasks
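Cohen’s κ compares the observed agreement between two coders, p_o, with the agreement expected by chance from each coder's code frequencies, p_e: κ = (p_o - p_e) / (1 - p_e). A minimal sketch for one pair of coders; the codes are invented. With more than two coders, pairwise κ values can be averaged, or Fleiss' κ used instead.

```python
from collections import Counter


def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa for two coders who each assigned one code per unit."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("need two non-empty, equal-length lists of codes")
    n = len(coder_a)
    # Observed agreement: share of units given the same code by both coders.
    p_o = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    # Chance agreement: from each coder's marginal frequency of every code.
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    p_e = sum(freq_a[c] * freq_b[c] for c in freq_a.keys() | freq_b.keys()) / n ** 2
    if p_e == 1:
        raise ValueError("kappa is undefined when chance agreement is 1")
    return (p_o - p_e) / (1 - p_e)


# Invented codes assigned by two coders to the same ten text units.
coder_a = ["task", "task", "problem", "device", "problem",
           "task", "device", "problem", "task", "device"]
coder_b = ["task", "problem", "problem", "device", "problem",
           "task", "device", "task", "task", "device"]
print(round(cohens_kappa(coder_a, coder_b), 3))
```

scikit-learn's `sklearn.metrics.cohen_kappa_score` computes the same statistic if a library implementation is preferred.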

- 41. Experimental setup • Explain features • Training period with example tasks • Account for experience • Stratified samples? • Participant recruitment • CHCI, CS@VT, CRC, Google (?)
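If recruits are stratified by experience, a sample can be drawn separately within each stratum. A minimal sketch of equal allocation per stratum; stratifying by a self-reported experience level, the three level names, the pool, and the helper name `stratified_sample` are all hypothetical. Proportional allocation (sampling each stratum in proportion to its size) is the usual alternative.

```python
import random
from collections import defaultdict


def stratified_sample(candidates, stratum_of, per_stratum, seed=None):
    """Randomly draw per_stratum candidates from every stratum.

    stratum_of maps a candidate to its stratum label (here, an assumed
    experience level). Returns {stratum label: list of selected candidates}.
    """
    strata = defaultdict(list)
    for c in candidates:
        strata[stratum_of(c)].append(c)
    rng = random.Random(seed)
    sample = {}
    for label, members in strata.items():
        if len(members) < per_stratum:
            raise ValueError(f"stratum {label!r} has only {len(members)} candidates")
        sample[label] = rng.sample(members, per_stratum)
    return sample


# Hypothetical pool of 30 recruits as (id, experience level) pairs.
levels = ("novice", "intermediate", "expert")
pool = [(i, levels[i % 3]) for i in range(30)]
picked = stratified_sample(pool, stratum_of=lambda c: c[1], per_stratum=4, seed=1)
for level, members in picked.items():
    print(level, [cid for cid, _ in members])
```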