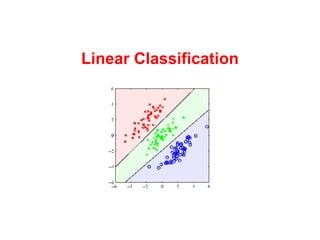

Linear Classification

- 1. Linear Classification Machine Learning; Wed Apr 23, 2008

- 2. Motivation Problem: Our goal is to “classify” input vectors x into one of k classes. Similar to regression, but the output variable is discrete. In linear classification the input space is split by (hyper-)planes into decision regions, each with an assigned class.

- 3. Activation function Non-linear function assigning a class.

- 5. Activation function Non-linear function assigning a class. Due to f the model is not linear in the weights.

- 6. Activation function Non-linear function assigning a class. Due to f the model is not linear in the weights. The decision surfaces are linear in w and x.
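A minimal sketch of the idea on the activation-function slides: a linear function of x is passed through a non-linear activation f that assigns the class. The step-shaped f, the weight values, and the function names here are illustrative assumptions, not taken from the slides.

```python
import numpy as np

def linear_part(X, w, w0):
    """Linear function of the input: a(x) = w^T x + w0."""
    return X @ w + w0

def step_activation(a):
    """Assumed activation f: class 1 if a >= 0, otherwise class 0."""
    return (a >= 0).astype(int)

# Hypothetical weights for a 2-D input space.
w = np.array([1.0, -1.0])
w0 = 0.5

X = np.array([
    [2.0, 0.0],   # a =  2.5
    [0.0, 2.0],   # a = -1.5
    [1.0, 1.5],   # a =  0.0, exactly on the decision surface
])

labels = step_activation(linear_part(X, w, w0))
print(labels)
```

The output of f is not linear in w, but the decision surface is the set where w^T x + w0 = 0, which is a hyperplane.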

- 7. Discriminant functions A simple linear discriminant function:

- 9. Least squares training Target vectors are encoded as bit vectors. The classification vector collects the h functions.

- 10. Least squares training Least squares is appropriate for Gaussian distributions, but has major problems with discrete targets...
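Least squares training with bit-vector targets can be sketched as below. This assumes a 1-of-K target encoding and one linear function per class, with the predicted class taken as the largest output; the synthetic data and all names are illustrative assumptions.

```python
import numpy as np

def fit_least_squares(X, labels, n_classes):
    """Fit one linear function per class by minimizing squared error."""
    T = np.eye(n_classes)[labels]                    # 1-of-K bit-vector targets
    X_aug = np.hstack([np.ones((len(X), 1)), X])     # prepend a bias column
    W, *_ = np.linalg.lstsq(X_aug, T, rcond=None)    # least squares solution
    return W

def predict(W, X):
    """Assign each input to the class with the largest linear output."""
    X_aug = np.hstack([np.ones((len(X), 1)), X])
    return np.argmax(X_aug @ W, axis=1)

# Synthetic, well-separated two-class data (an assumption for illustration).
rng = np.random.default_rng(0)
X = np.vstack([
    rng.normal([-2.0, 0.0], 0.5, size=(50, 2)),
    rng.normal([2.0, 0.0], 0.5, size=(50, 2)),
])
labels = np.repeat([0, 1], 50)

W = fit_least_squares(X, labels, n_classes=2)
accuracy = np.mean(predict(W, X) == labels)
print(accuracy)
```

On clean data like this the fit works well; the problems mentioned on the slide show up with outliers or more than two classes, where squared error penalizes points that are "too correct".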

- 11. Fisher's linear discriminant Consider the classification as a projection onto a line. Approach: maximize the distance between the projected class means.

- 12. Fisher's linear discriminant Consider the classification as a projection onto a line. Approach: maximize the distance between the projected class means. But notice the large overlap in the histograms: the variance in the projection is larger than it needs to be.

- 13. Fisher's linear discriminant Maximize difference in mean and minimize within-class variance:
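A sketch of Fisher's criterion under the usual two-class formulation: the ratio of the squared difference of projected means to the total within-class scatter, maximized by w proportional to S_W^{-1}(m2 - m1). The function names and the synthetic correlated data are assumptions made for illustration.

```python
import numpy as np

def fisher_direction(X1, X2):
    """Direction w proportional to S_W^{-1} (m2 - m1), normalized to unit length."""
    m1, m2 = X1.mean(axis=0), X2.mean(axis=0)
    S_w = (X1 - m1).T @ (X1 - m1) + (X2 - m2).T @ (X2 - m2)  # within-class scatter
    w = np.linalg.solve(S_w, m2 - m1)
    return w / np.linalg.norm(w)

def fisher_criterion(w, X1, X2):
    """Squared distance of projected means over within-class scatter of projections."""
    p1, p2 = X1 @ w, X2 @ w
    between = (p2.mean() - p1.mean()) ** 2
    within = np.sum((p1 - p1.mean()) ** 2) + np.sum((p2 - p2.mean()) ** 2)
    return between / within

# Two elongated, strongly correlated classes (illustrative data).
rng = np.random.default_rng(1)
cov = np.array([[4.0, 3.5], [3.5, 4.0]])
X1 = rng.multivariate_normal([0.0, 0.0], cov, size=200)
X2 = rng.multivariate_normal([2.0, 0.0], cov, size=200)

# Projecting onto the line joining the means ignores the within-class variance.
w_means = X2.mean(axis=0) - X1.mean(axis=0)
w_means = w_means / np.linalg.norm(w_means)
w_fisher = fisher_direction(X1, X2)

j_means = fisher_criterion(w_means, X1, X2)
j_fisher = fisher_criterion(w_fisher, X1, X2)
print(j_means, j_fisher)
```

Comparing the two criterion values shows why maximizing the distance between the means alone is not enough when the classes are elongated.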

- 15. Probabilistic models Wake Up! Clever ideas here!

- 18. Probabilistic models Wake Up! Clever ideas here! Modeling. Fitting in. Making choices.

- 19. Probabilistic models Approach: Define the class-conditional distributions and make the decision based on the posterior class probability, written as a sigmoid activation.

- 20. Probabilistic models Particularly simple expression for Gaussian regression
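The simple Gaussian expression can be checked numerically. The sketch below assumes two classes with Gaussian class-conditional densities sharing one covariance matrix, in which case the posterior is a sigmoid of a linear function, with w = Σ^{-1}(μ1 - μ2) and w0 = -½ μ1ᵀΣ^{-1}μ1 + ½ μ2ᵀΣ^{-1}μ2 + ln(p(C1)/p(C2)). All parameter values and names are hypothetical.

```python
import numpy as np

def sigmoid(a):
    return 1.0 / (1.0 + np.exp(-a))

def gaussian_pdf(x, mu, cov):
    """Density of a multivariate Gaussian at a single point x."""
    diff = x - mu
    norm = np.sqrt((2.0 * np.pi) ** len(mu) * np.linalg.det(cov))
    return np.exp(-0.5 * diff @ np.linalg.solve(cov, diff)) / norm

def posterior_c1(x, mu1, mu2, cov, prior1):
    """p(C1 | x) as a sigmoid of a linear function (shared covariance assumed)."""
    w = np.linalg.solve(cov, mu1 - mu2)
    w0 = (-0.5 * mu1 @ np.linalg.solve(cov, mu1)
          + 0.5 * mu2 @ np.linalg.solve(cov, mu2)
          + np.log(prior1 / (1.0 - prior1)))
    return sigmoid(w @ x + w0)

# Hypothetical parameters.
mu1 = np.array([1.0, 0.0])
mu2 = np.array([-1.0, 0.5])
cov = np.array([[1.0, 0.3], [0.3, 2.0]])
prior1 = 0.4
x = np.array([0.2, -0.7])

# Direct application of Bayes' theorem, for comparison.
joint1 = prior1 * gaussian_pdf(x, mu1, cov)
joint2 = (1.0 - prior1) * gaussian_pdf(x, mu2, cov)
p_bayes = joint1 / (joint1 + joint2)

p_sigmoid = posterior_c1(x, mu1, mu2, cov, prior1)
print(p_bayes, p_sigmoid)
```

The quadratic terms in x cancel because the covariance is shared, which is what makes the argument of the sigmoid linear in x.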

- 22. Maximum likelihood estimation Assume the observations are i.i.d.
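Under the i.i.d. assumption, and assuming the two-class Gaussian model with a shared covariance, the maximum likelihood estimates have a closed form: the prior is the class fraction, the means are the class averages, and the covariance is the pooled within-class covariance. The sketch below illustrates this; the function name and data are assumptions.

```python
import numpy as np

def fit_gaussian_ml(X, t):
    """ML estimates for two Gaussian classes with a shared covariance.

    t[n] is 1 if observation n belongs to class C1 and 0 if it belongs to C2.
    """
    N = len(t)
    X1, X2 = X[t == 1], X[t == 0]
    prior1 = len(X1) / N                 # fraction of points in C1
    mu1, mu2 = X1.mean(axis=0), X2.mean(axis=0)
    d1, d2 = X1 - mu1, X2 - mu2
    cov = (d1.T @ d1 + d2.T @ d2) / N    # pooled within-class covariance
    return prior1, mu1, mu2, cov

# Synthetic data: 30 points from C1 and 70 from C2 (illustrative).
rng = np.random.default_rng(2)
X = np.vstack([
    rng.normal([1.0, 1.0], 1.0, size=(30, 2)),
    rng.normal([-1.0, -1.0], 1.0, size=(70, 2)),
])
t = np.concatenate([np.ones(30, dtype=int), np.zeros(70, dtype=int)])

prior1, mu1, mu2, cov = fit_gaussian_ml(X, t)
print(prior1, mu1, mu2)
```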

- 24. Logistic regression We can also directly express the class probability as a sigmoid (without implicitly having an underlying Gaussian):

- 25. Logistic regression We can also directly express the class probability as a sigmoid (without implicitly having an underlying Gaussian): The likelihood:

- 26. Logistic regression We can maximize the log likelihood...

- 27. Logistic regression Here we only estimate M weights, not M for each mean plus O(M²) for the variance in the Gaussian approach.

- 28. Logistic regression Here we only estimate M weights, not M for each mean plus O(M²) for the variance in the Gaussian approach. We are not assuming anything about the underlying densities (we only assume we can separate them linearly).
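A minimal sketch of fitting logistic regression by maximizing the log likelihood directly. It uses plain gradient ascent, which is an assumption chosen for brevity (the slides do not say which optimizer is used); the gradient of the log likelihood is the sum over n of (t_n - y_n) φ_n. The learning rate, iteration count, and data are illustrative.

```python
import numpy as np

def sigmoid(a):
    return 1.0 / (1.0 + np.exp(-a))

def log_likelihood(w, Phi, t):
    """Bernoulli log likelihood of targets t under p(C1 | phi) = sigmoid(w^T phi)."""
    y = sigmoid(Phi @ w)
    eps = 1e-12  # guards against log(0)
    return np.sum(t * np.log(y + eps) + (1 - t) * np.log(1 - y + eps))

def fit_logistic(Phi, t, lr=0.1, n_iter=500):
    """Gradient ascent on the log likelihood (averaged over the data)."""
    w = np.zeros(Phi.shape[1])
    for _ in range(n_iter):
        y = sigmoid(Phi @ w)
        w += lr * Phi.T @ (t - y) / len(t)
    return w

# Overlapping two-class data; no Gaussian assumption is used in the fit.
rng = np.random.default_rng(3)
X = np.vstack([
    rng.normal([-1.0, 0.0], 1.0, size=(100, 2)),
    rng.normal([1.0, 0.0], 1.0, size=(100, 2)),
])
t = np.repeat([0, 1], 100)
Phi = np.hstack([np.ones((len(X), 1)), X])   # bias feature plus the inputs

w_fit = fit_logistic(Phi, t)
ll_start = log_likelihood(np.zeros(Phi.shape[1]), Phi, t)
ll_fit = log_likelihood(w_fit, Phi, t)
accuracy = np.mean((sigmoid(Phi @ w_fit) >= 0.5) == t)
print(ll_start, ll_fit, accuracy)
```

Only the weight vector is estimated here (three numbers for this feature vector), with no class means or covariance matrix.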